Search Results for author: Quanyu Liao

Found 5 papers, 0 papers with code

Attacking Important Pixels for Anchor-free Detectors

no code implementations • 26 Jan 2023 • Yunxu Xie, Shu Hu, Xin Wang, Quanyu Liao, Bin Zhu, Xi Wu, Siwei Lyu

Existing adversarial attacks on object detection focus on attacking anchor-based detectors, which may not work well for anchor-free detectors.

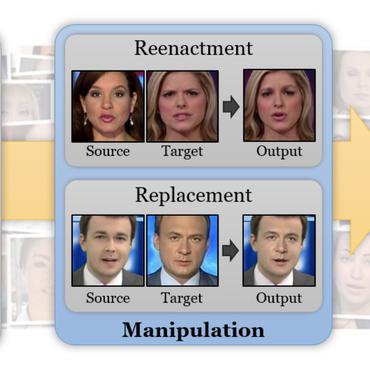

Imperceptible Adversarial Examples for Fake Image Detection

no code implementations • 3 Jun 2021 • Quanyu Liao, Yuezun Li, Xin Wang, Bin Kong, Bin Zhu, Siwei Lyu, Youbing Yin, Qi Song, Xi Wu

Highly realistic fake images generated with Deepfake or GANs can fool people and cause serious social disturbance.

Transferable Adversarial Examples for Anchor Free Object Detection

no code implementations • 3 Jun 2021 • Quanyu Liao, Xin Wang, Bin Kong, Siwei Lyu, Bin Zhu, Youbing Yin, Qi Song, Xi Wu

Deep neural networks have been demonstrated to be vulnerable to adversarial attacks: subtle perturbations can completely change the prediction result.

Fast Local Attack: Generating Local Adversarial Examples for Object Detectors

no code implementations • 27 Oct 2020 • Quanyu Liao, Xin Wang, Bin Kong, Siwei Lyu, Youbing Yin, Qi Song, Xi Wu

The deep neural network is vulnerable to adversarial examples.

Category-wise Attack: Transferable Adversarial Examples for Anchor Free Object Detection

no code implementations • 10 Feb 2020 • Quanyu Liao, Xin Wang, Bin Kong, Siwei Lyu, Youbing Yin, Qi Song, Xi Wu

Deep neural networks have been demonstrated to be vulnerable to adversarial attacks: subtle perturbations can completely change the classification results.