Learning Robust Global Representations by Penalizing Local Predictive Power

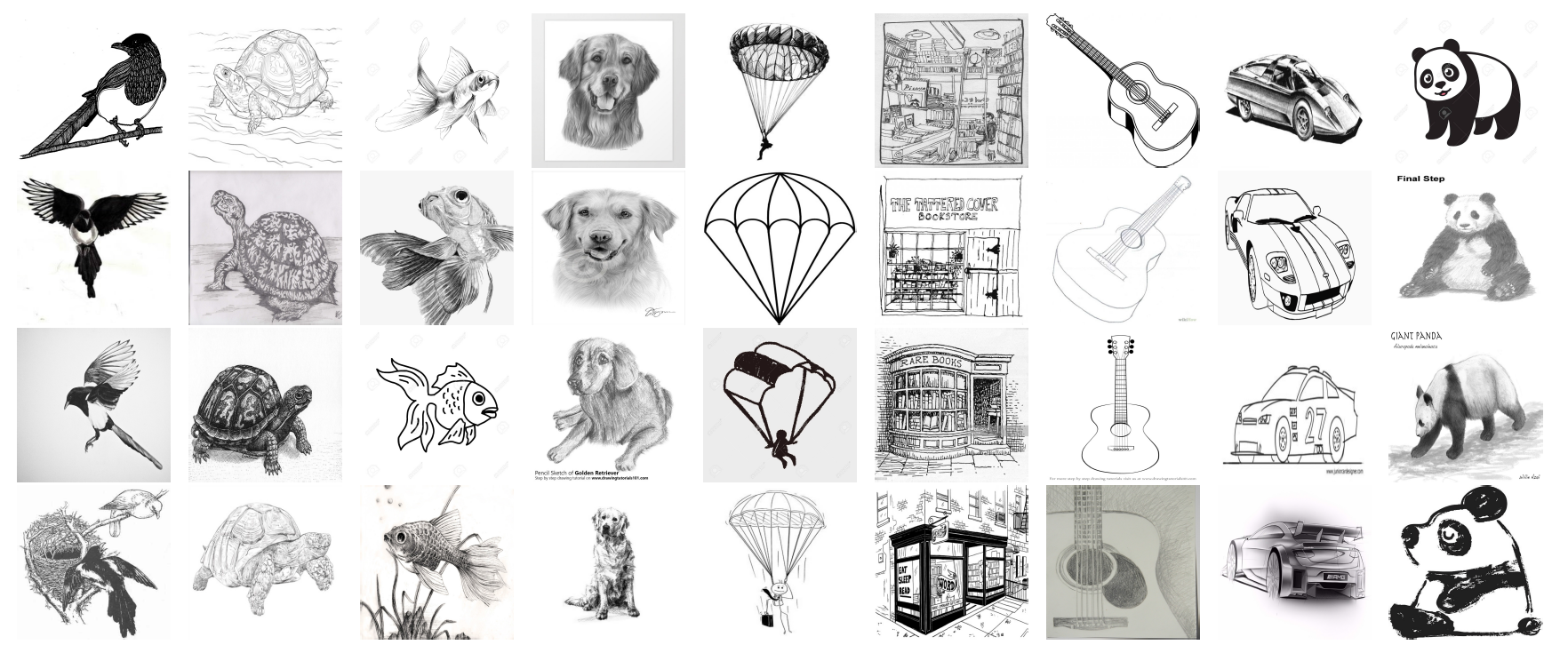

Despite their renowned predictive power on i.i.d. data, convolutional neural networks are known to rely more on high-frequency patterns that humans deem superficial than on low-frequency patterns that agree better with intuitions about what constitutes category membership. This paper proposes a method for training robust convolutional networks by penalizing the predictive power of the local representations learned by earlier layers. Intuitively, our networks are forced to discard predictive signals such as color and texture that can be gleaned from local receptive fields and to rely instead on the global structure of the image. Across a battery of synthetic and benchmark domain adaptation tasks, our method improves out-of-domain generalization. To evaluate cross-domain transfer, we also introduce ImageNet-Sketch, a new dataset of sketch-like images that matches the ImageNet classification validation set in categories and scale.
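The abstract does not spell out how local predictive power is penalized. One plausible reading, sketched below under our own assumptions, attaches a linear classifier to every spatial location of an early feature map (equivalent to a 1x1 convolution), measures how well those local features alone predict the image label, and subtracts that loss from the objective of the earlier layers so that they are pushed to make local patches uninformative. The function names, the weighting `lam`, and the use of NumPy are illustrative choices, not the authors' code. In an actual network the local classifier would be trained to minimize its loss while the earlier layers maximize it (for example via a gradient-reversal layer).

```python
import numpy as np


def mean_cross_entropy(logits, labels):
    """Mean softmax cross-entropy; logits: (N, K), labels: (N,) ints."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())


def local_predictive_loss(features, label, weight, bias):
    """Loss of a hypothetical classifier applied independently at each location.

    features: early-layer feature map for one image, shape (C, H, W).
    weight:   (K, C) matrix shared by all locations (acts like a 1x1 conv).
    bias:     (K,) vector.
    Every spatial position must predict the whole image's label on its own.
    """
    channels, height, width = features.shape
    # Each row is the C-dimensional feature vector at one spatial location.
    local = features.reshape(channels, height * width).T  # (H*W, C)
    logits = local @ weight.T + bias                       # (H*W, K)
    labels = np.full(height * width, label)
    return mean_cross_entropy(logits, labels)


def feature_extractor_objective(global_loss, local_loss, lam=1.0):
    """Objective minimized by the early layers (hypothetical weighting `lam`).

    Subtracting the local loss rewards features whose individual locations
    are poor predictors of the label, while the global head is still
    trained to classify the whole image correctly.
    """
    return global_loss - lam * local_loss


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.random((3, 5, 5))
    # An uninformative (all-zero) local classifier over 4 classes gives
    # loss log(4) at every location, so this prints roughly 1.386.
    print(local_predictive_loss(feats, 2, np.zeros((4, 3)), np.zeros(4)))
```

Because the weight matrix is shared across positions, the classifier sees one receptive field at a time; a low `local_predictive_loss` therefore signals that local color or texture statistics already give away the label, which is exactly the signal the penalty is meant to suppress.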

NeurIPS 2019

Datasets: ImageNet-Sketch, ImageNet, PACS