Bilateral Multi-Perspective Matching for Natural Language Sentences

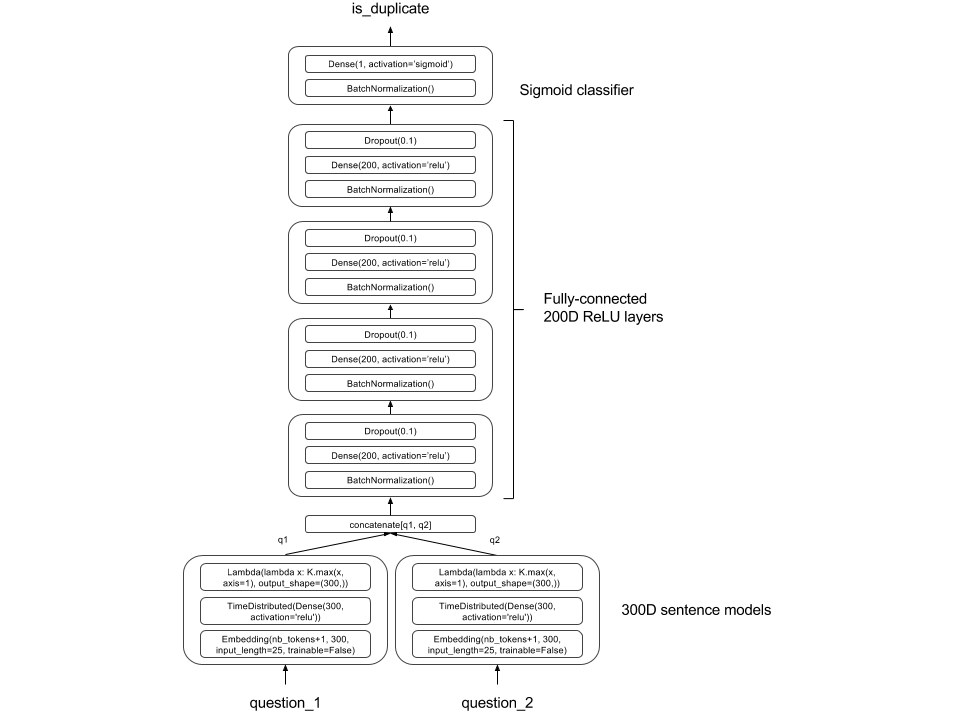

Natural language sentence matching is a fundamental technology for a variety of tasks. Previous approaches either match sentences from a single direction or apply matching at only a single granularity (word-by-word or sentence-by-sentence). In this work, we propose a bilateral multi-perspective matching (BiMPM) model under the "matching-aggregation" framework. Given two sentences $P$ and $Q$, our model first encodes them with a BiLSTM encoder. Next, we match the two encoded sentences in two directions, $P \rightarrow Q$ and $P \leftarrow Q$. In each matching direction, each time step of one sentence is matched against all time steps of the other sentence from multiple perspectives. Then, another BiLSTM layer aggregates the matching results into a fixed-length matching vector. Finally, based on the matching vector, the decision is made through a fully connected layer. We evaluate our model on three tasks: paraphrase identification, natural language inference, and answer sentence selection. Experimental results on standard benchmark datasets show that our model achieves state-of-the-art performance on all tasks.
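The matching step described in the abstract can be illustrated with a small NumPy sketch. It is not the authors' implementation. It assumes that a "perspective" is a learned weight vector that rescales each dimension before a cosine similarity is taken, and that the comparison against all time steps of the other sentence is summarized by an element-wise maximum. The names `multi_perspective_cosine`, `match_one_direction`, and `W` are hypothetical, random arrays stand in for the BiLSTM encoder outputs, and a single `W` is shared across both directions for brevity.

```python
import numpy as np

def multi_perspective_cosine(v1, v2, W, eps=1e-8):
    """Return one cosine similarity per perspective.

    v1, v2: vectors of shape (d,).
    W: hypothetical perspective weights of shape (l, d); row k rescales
       every dimension of both vectors before the cosine is computed.
    """
    a = W * v1  # broadcast to shape (l, d)
    b = W * v2
    num = np.sum(a * b, axis=1)
    den = np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1)
    return num / np.maximum(den, eps)

def match_one_direction(P, Q, W):
    """Match each time step of P against all time steps of Q.

    P: (m, d), Q: (n, d). Returns an (m, l) array: for every step of P,
    the element-wise max over Q's steps of the per-perspective similarities
    (the pooling choice is an assumption of this sketch).
    """
    out = np.empty((P.shape[0], W.shape[0]))
    for i, p in enumerate(P):
        sims = np.stack([multi_perspective_cosine(p, q, W) for q in Q])  # (n, l)
        out[i] = sims.max(axis=0)
    return out

# Stand-ins for encoder outputs: 4 and 5 time steps, 6 dimensions, 3 perspectives.
rng = np.random.default_rng(0)
P = rng.normal(size=(4, 6))
Q = rng.normal(size=(5, 6))
W = rng.normal(size=(3, 6))

p_to_q = match_one_direction(P, Q, W)  # direction P -> Q, shape (4, 3)
q_to_p = match_one_direction(Q, P, W)  # direction P <- Q, shape (5, 3)
```

In the full model, the two per-direction matching sequences would then be passed to the aggregation BiLSTM; that stage is omitted here.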


Datasets

SNLI, WikiQA, Quora, Quora Question Pairs

Results from the Paper

Ranked #17 on Paraphrase Identification on Quora Question Pairs (Accuracy metric)