Model Zoo: A Growing "Brain" That Learns Continually

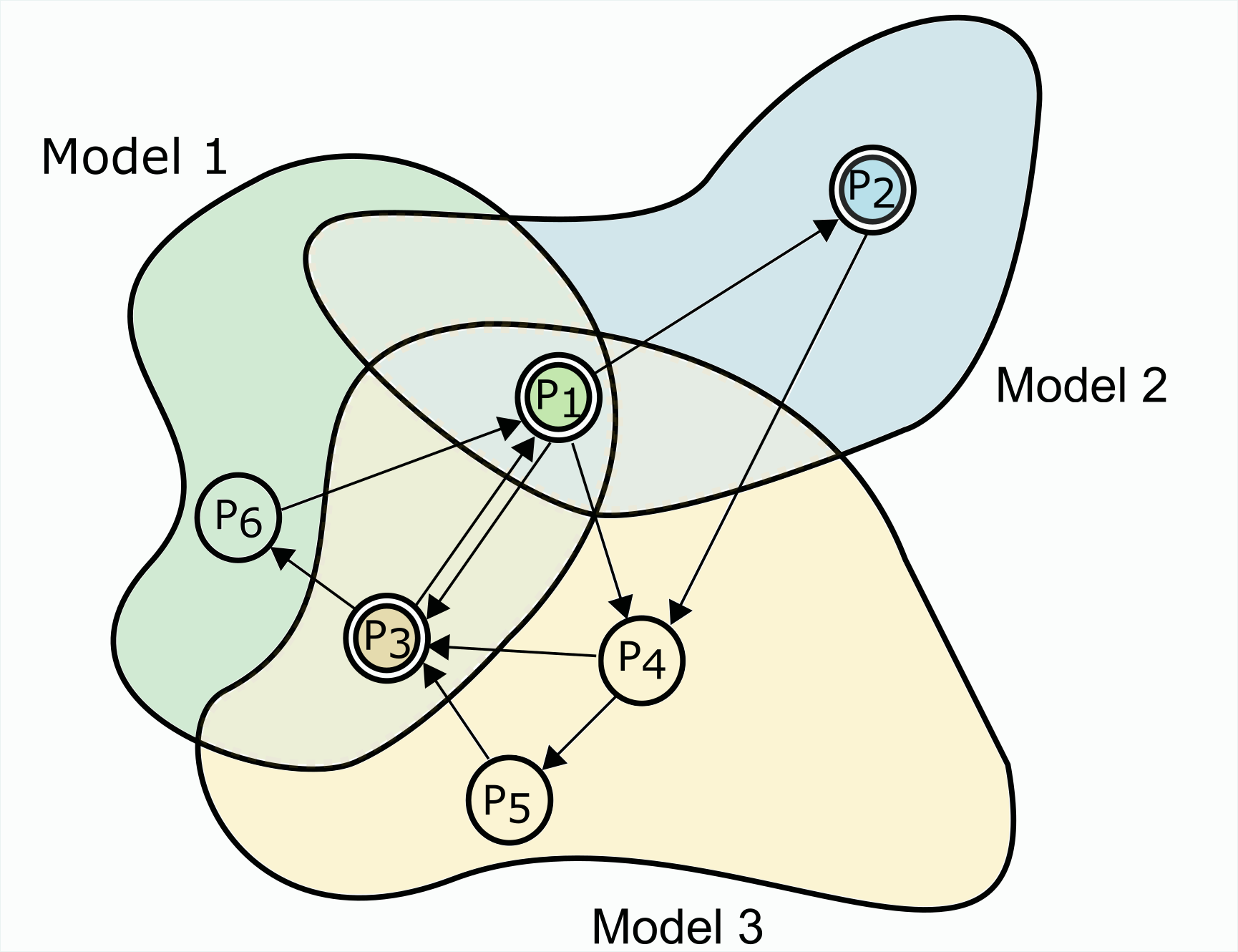

This paper argues that continual learning methods can benefit from splitting the capacity of the learner across multiple models. We use statistical learning theory and experimental analysis to show how multiple tasks can interact with each other in a non-trivial fashion when a single model is trained on them. The generalization error on a particular task can improve when it is trained with synergistic tasks, but can also deteriorate when it is trained with competing tasks. This theory motivates our method, Model Zoo, which, inspired by the boosting literature, grows an ensemble of small models, each of which is trained during one episode of continual learning. We demonstrate that Model Zoo obtains large gains in accuracy on a variety of continual learning benchmark problems. Code is available at https://github.com/grasp-lyrl/modelzoo_continual.
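The abstract does not spell out how tasks are assigned to each new model or how the ensemble's predictions are combined, so the following is only a minimal toy sketch of the "grow one small model per episode" idea under stated assumptions. Each "small model" is a hypothetical nearest-centroid classifier with one head per task. Each episode's model is trained on the newly arrived task plus the past task(s) the current zoo gets most wrong (a boosting-style selection rule we assume here, not necessarily the authors' rule). A task's prediction averages class probabilities over every model that was trained on that task. All names (`CentroidModel`, `ModelZoo`, `tasks_per_model`, `make_task`) are illustrative.

```python
import math
import random

Point = tuple[float, float]
Dataset = list[tuple[Point, int]]


class CentroidModel:
    """Hypothetical stand-in for one 'small model': a nearest-centroid
    classifier with a separate head (centroid table) for each task it sees."""

    def __init__(self) -> None:
        self.heads: dict[int, dict[int, Point]] = {}

    def fit(self, tasks: dict[int, Dataset]) -> None:
        for task_id, data in tasks.items():
            sums: dict[int, list[float]] = {}
            for (x, y), label in data:
                acc = sums.setdefault(label, [0.0, 0.0, 0.0])
                acc[0] += x
                acc[1] += y
                acc[2] += 1
            self.heads[task_id] = {
                label: (sx / n, sy / n) for label, (sx, sy, n) in sums.items()
            }

    def probs(self, task_id: int, p: Point) -> dict[int, float]:
        # Softmax over negative squared distances to each class centroid.
        logits = {
            label: -((p[0] - cx) ** 2 + (p[1] - cy) ** 2)
            for label, (cx, cy) in self.heads[task_id].items()
        }
        top = max(logits.values())  # subtract the max for numerical stability
        exps = {label: math.exp(v - top) for label, v in logits.items()}
        total = sum(exps.values())
        return {label: e / total for label, e in exps.items()}


class ModelZoo:
    """Grows one small model per continual-learning episode (toy sketch)."""

    def __init__(self, tasks_per_model: int = 2) -> None:
        self.models: list[CentroidModel] = []
        self.seen: dict[int, Dataset] = {}
        self.tasks_per_model = tasks_per_model

    def predict(self, task_id: int, p: Point) -> int:
        # Sum (equivalently, average) class probabilities over every model
        # in the zoo that was trained on this task, then take the argmax.
        totals: dict[int, float] = {}
        for model in self.models:
            if task_id in model.heads:
                for label, q in model.probs(task_id, p).items():
                    totals[label] = totals.get(label, 0.0) + q
        return max(totals, key=totals.get)

    def error(self, task_id: int) -> float:
        # Measured on the stored training data in this toy example.
        data = self.seen[task_id]
        return sum(self.predict(task_id, p) != y for p, y in data) / len(data)

    def observe(self, task_id: int, data: Dataset) -> None:
        # Assumed boosting-style rule: revisit the past tasks the current zoo
        # gets most wrong, alongside the newly arrived task.
        hardest = sorted(self.seen, key=self.error, reverse=True)
        chosen = [task_id, *hardest[: self.tasks_per_model - 1]]
        self.seen[task_id] = data
        model = CentroidModel()
        model.fit({t: self.seen[t] for t in chosen})
        self.models.append(model)


def make_task(task_id: int, rng: random.Random, n: int = 50) -> Dataset:
    # Two well-separated Gaussian blobs whose location shifts with the task id.
    return [
        ((rng.gauss(3.0 * task_id, 0.3), rng.gauss(3.0 * label, 0.3)), label)
        for label in (0, 1)
        for _ in range(n)
    ]


rng = random.Random(0)
tasks = {t: make_task(t, rng) for t in range(3)}
zoo = ModelZoo(tasks_per_model=2)
for t, data in tasks.items():
    zoo.observe(t, data)
print(len(zoo.models), [round(1 - zoo.error(t), 2) for t in tasks])
```

The zoo only ever adds models, so a later episode cannot overwrite what an earlier model learned; the cost is that the ensemble (and inference time) grows with the number of episodes.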


| Task | Dataset | Model | Metric | Value (%) | Global Rank |
|---|---|---|---|---|---|
| Continual Learning | Cifar100 (20 tasks) | Model Zoo-Continual | Average Accuracy | 94.99 | #1 |
| Continual Learning | Coarse-CIFAR100 | Model Zoo-Continual | Average Accuracy | 84.27 | #1 |
| Continual Learning | Permuted MNIST | Model Zoo-Continual | Average Accuracy | 97.71 | #2 |
| Continual Learning | Rotated MNIST | Model Zoo-Continual | Average Accuracy | 99.66 | #1 |
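The page does not define "Average Accuracy". A common convention in continual learning, assumed here, is the mean test accuracy over all tasks measured after training on the final task. A minimal sketch of that computation, with a hypothetical `average_accuracy` helper and made-up numbers:

```python
def average_accuracy(acc: list[list[float]]) -> float:
    """Mean accuracy (in %) over all tasks after the final episode.

    acc[i][j] is the test accuracy (0 to 1) on task j measured after
    training episode i; row i therefore has i + 1 entries.
    """
    final = acc[-1]
    if len(final) != len(acc):
        raise ValueError("last row must hold one accuracy per task seen")
    return 100.0 * sum(final) / len(final)


# Made-up numbers for three tasks, for illustration only.
history = [
    [0.95],
    [0.93, 0.97],
    [0.90, 0.94, 0.96],
]
print(round(average_accuracy(history), 2))  # 93.33
```

Only the last row enters this metric, so any forgetting of early tasks shows up as a lower final accuracy on those tasks.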

Datasets: CIFAR-10, CIFAR-100, MNIST, Permuted MNIST