Compute and memory efficient universal sound source separation

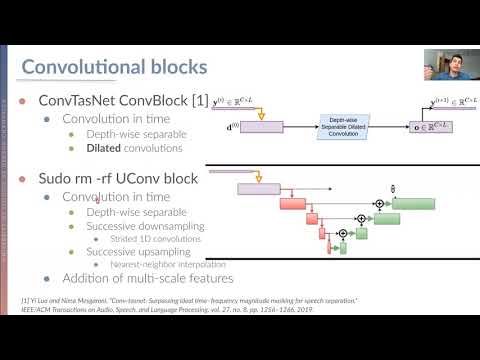

Recent progress in audio source separation lead by deep learning has enabled many neural network models to provide robust solutions to this fundamental estimation problem. In this study, we provide a family of efficient neural network architectures for general purpose audio source separation while focusing on multiple computational aspects that hinder the application of neural networks in real-world scenarios. The backbone structure of this convolutional network is the SUccessive DOwnsampling and Resampling of Multi-Resolution Features (SuDoRM-RF) as well as their aggregation which is performed through simple one-dimensional convolutions. This mechanism enables our models to obtain high fidelity signal separation in a wide variety of settings where variable number of sources are present and with limited computational resources (e.g. floating point operations, memory footprint, number of parameters and latency). Our experiments show that SuDoRM-RF models perform comparably and even surpass several state-of-the-art benchmarks with significantly higher computational resource requirements. The causal variation of SuDoRM-RF is able to obtain competitive performance in real-time speech separation of around 10dB scale-invariant signal-to-distortion ratio improvement (SI-SDRi) while remaining up to 20 times faster than real-time on a laptop device.

PDF Abstract

ESC-50

ESC-50

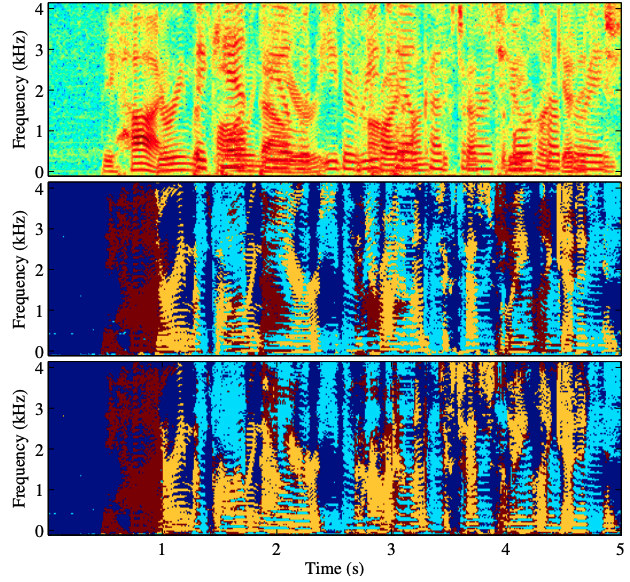

WSJ0-2mix

WSJ0-2mix