Follow the Neurally-Perturbed Leader for Adversarial Training

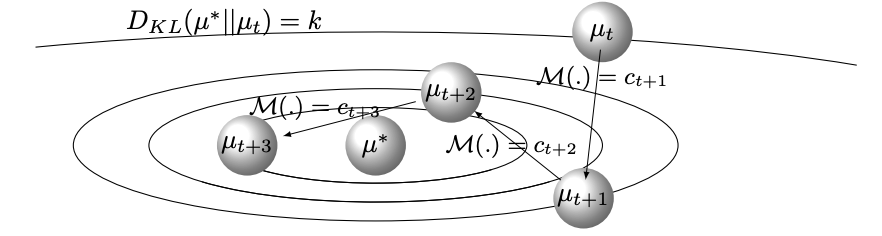

Game-theoretic models of learning are a powerful set of models that optimize multi-objective architectures. Among these models are zero-sum architectures, which have inspired adversarial learning frameworks. An important shortcoming of these zero-sum architectures is that gradient-based training leads to weak convergence and cyclic dynamics. We propose a novel follow-the-leader training algorithm for zero-sum architectures that guarantees convergence to a mixed Nash equilibrium without cyclic behavior. It is a special type of follow-the-perturbed-leader algorithm in which the perturbations are produced by a neural mediating agent. We validate our theoretical results by applying this training algorithm to games with convex and non-convex losses as well as to generative adversarial architectures. Moreover, we customize the implementation of this algorithm for adversarial imitation learning applications. At every training step, the mediator agent perturbs the observations with generated codes. As a result of these mediating codes, the proposed algorithm is also efficient for learning in environments with multiple factors of variation. We validate this claim using a procedurally generated game environment as well as synthetic data. A GitHub implementation is available.
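For readers unfamiliar with the follow-the-perturbed-leader idea the abstract builds on, the following is a minimal sketch of the classical version on a two-player zero-sum matrix game. It is not the algorithm proposed here: the perturbations below are plain random exponential noise, whereas the paper obtains them from a neural mediating agent. The function name `ftpl_matrix_game`, the `noise_scale` parameter, and the matching-pennies payoff matrix are all assumptions made for illustration.

```python
import numpy as np


def ftpl_matrix_game(payoff, steps=20000, noise_scale=50.0, seed=0):
    """Classical follow-the-perturbed-leader on a zero-sum matrix game.

    payoff[i, j] is the loss of the row player (and the gain of the
    column player) when row action i meets column action j.
    Returns the empirical action frequencies of both players.
    Hypothetical helper for illustration; random noise stands in for
    the neural mediator described in the abstract.
    """
    rng = np.random.default_rng(seed)
    n_rows, n_cols = payoff.shape
    row_cum = np.zeros(n_rows)     # cumulative loss of each row action
    col_cum = np.zeros(n_cols)     # cumulative gain of each column action
    row_counts = np.zeros(n_rows)
    col_counts = np.zeros(n_cols)

    for _ in range(steps):
        # Each player perturbs its cumulative totals with fresh noise,
        # then follows the (perturbed) leader.
        i = int(np.argmin(row_cum + rng.exponential(noise_scale, n_rows)))
        j = int(np.argmax(col_cum + rng.exponential(noise_scale, n_cols)))

        # Update the totals with what each action would have earned
        # against the opponent's realized action.
        row_cum += payoff[:, j]
        col_cum += payoff[i, :]
        row_counts[i] += 1
        col_counts[j] += 1

    return row_counts / steps, col_counts / steps


# Matching pennies: the unique Nash equilibrium is mixed, (1/2, 1/2) for both.
matching_pennies = np.array([[1.0, -1.0], [-1.0, 1.0]])
row_freq, col_freq = ftpl_matrix_game(matching_pennies)
print("row frequencies:", row_freq)
print("column frequencies:", col_freq)
```

In this sketch it is the time-averaged play, not the per-step action, that approaches the mixed equilibrium, which is the usual sense in which no-regret procedures of this kind converge in zero-sum games.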
