Differentiable Particle Filters: End-to-End Learning with Algorithmic Priors

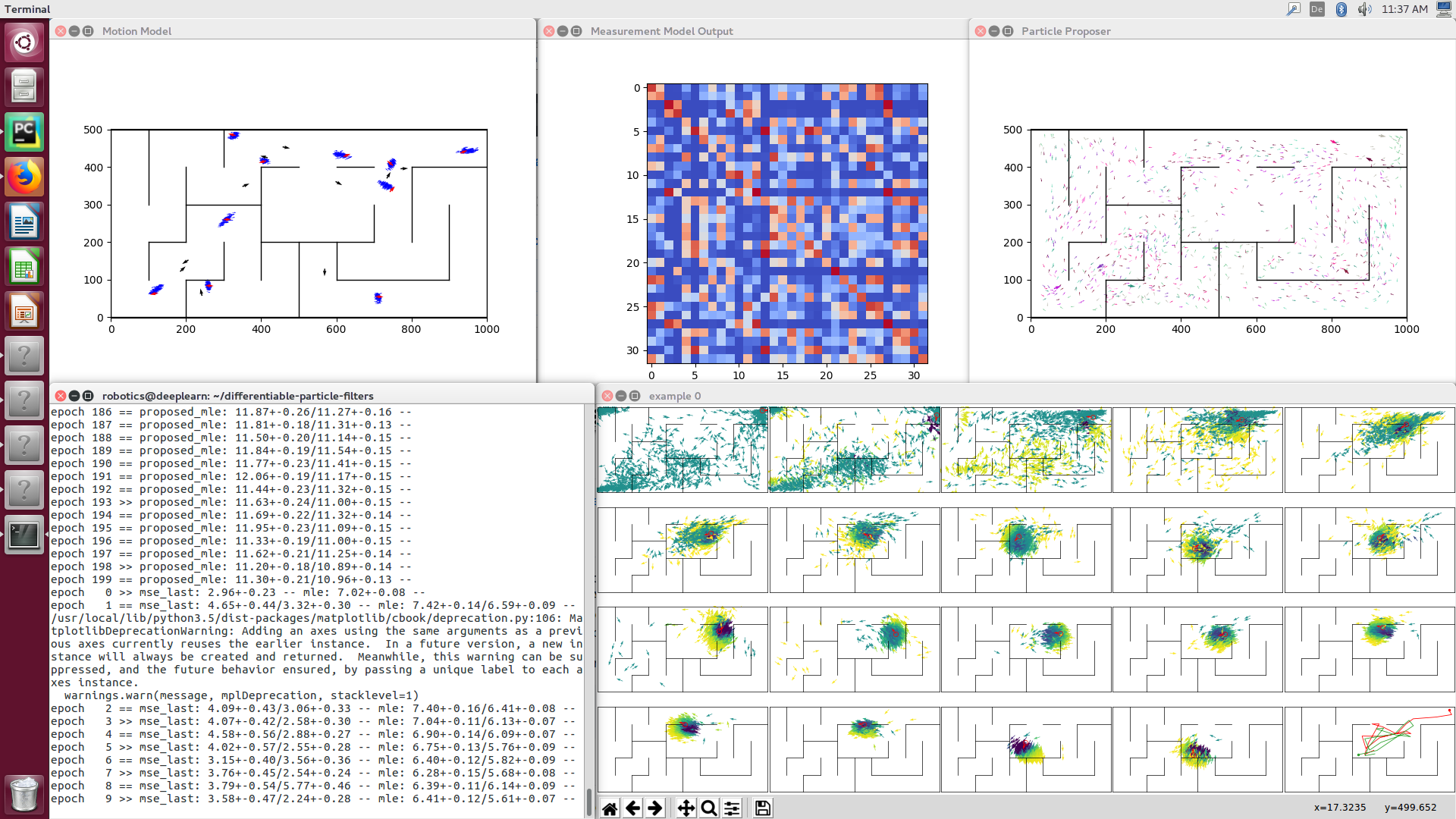

We present differentiable particle filters (DPFs): a differentiable implementation of the particle filter algorithm with learnable motion and measurement models. Since DPFs are end-to-end differentiable, we can efficiently train their models by optimizing end-to-end state estimation performance, rather than proxy objectives such as model accuracy. DPFs encode the structure of recursive state estimation with prediction and measurement update that operate on a probability distribution over states. This structure represents an algorithmic prior that improves learning performance in state estimation problems while enabling explainability of the learned model. Our experiments on simulated and real data show substantial benefits from end-to- end learning with algorithmic priors, e.g. reducing error rates by ~80%. Our experiments also show that, unlike long short-term memory networks, DPFs learn localization in a policy-agnostic way and thus greatly improve generalization. Source code is available at https://github.com/tu-rbo/differentiable-particle-filters .

PDF Abstract