DyRep: Bootstrapping Training with Dynamic Re-parameterization

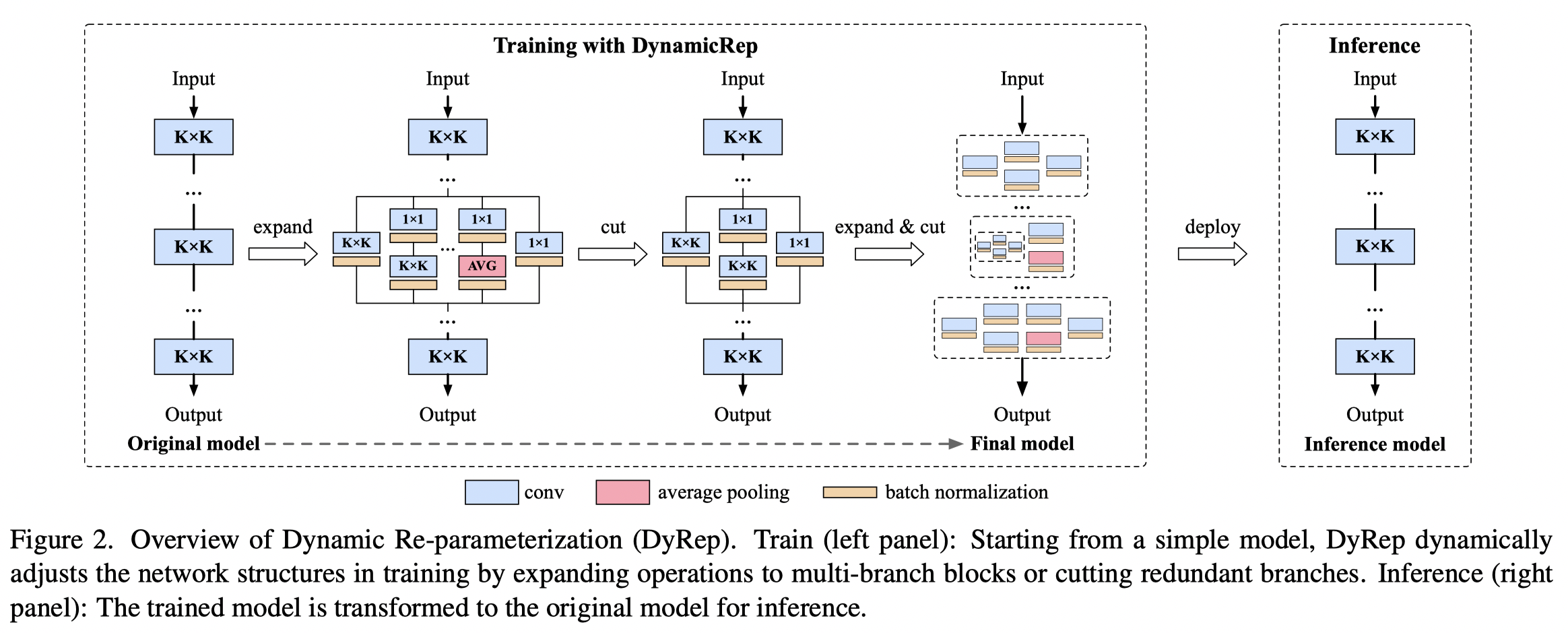

Structural re-parameterization (Rep) methods achieve noticeable improvements on simple VGG-style networks. Despite the prevalence, current Rep methods simply re-parameterize all operations into an augmented network, including those that rarely contribute to the model's performance. As such, the price to pay is an expensive computational overhead to manipulate these unnecessary behaviors. To eliminate the above caveats, we aim to bootstrap the training with minimal cost by devising a dynamic re-parameterization (DyRep) method, which encodes Rep technique into the training process that dynamically evolves the network structures. Concretely, our proposal adaptively finds the operations which contribute most to the loss in the network, and applies Rep to enhance their representational capacity. Besides, to suppress the noisy and redundant operations introduced by Rep, we devise a de-parameterization technique for a more compact re-parameterization. With this regard, DyRep is more efficient than Rep since it smoothly evolves the given network instead of constructing an over-parameterized network. Experimental results demonstrate our effectiveness, e.g., DyRep improves the accuracy of ResNet-18 by $2.04\%$ on ImageNet and reduces $22\%$ runtime over the baseline. Code is available at: https://github.com/hunto/DyRep.

PDF Abstract CVPR 2022 PDF CVPR 2022 Abstract