Efficient parametrization of multi-domain deep neural networks

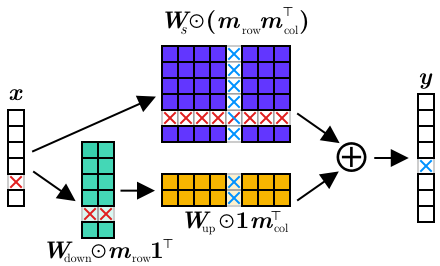

A practical limitation of deep neural networks is their high degree of specialization to a single task and visual domain. Recently, inspired by the successes of transfer learning, several authors have proposed to learn instead universal, fixed feature extractors that, used as the first stage of any deep network, work well for several tasks and domains simultaneously. Nevertheless, such universal features are still somewhat inferior to specialized networks. To overcome this limitation, in this paper we propose to consider instead universal parametric families of neural networks, which still contain specialized problem-specific models, but which differ only by a small number of parameters. We study different designs for such parametrizations, including series and parallel residual adapters, joint adapter compression, and parameter allocations, and empirically identify the ones that yield the highest compression. We show that, in order to maximize performance, it is necessary to adapt both shallow and deep layers of a deep network, but the required changes are very small. We also show that these universal parametrizations are very effective for transfer learning, where they outperform traditional fine-tuning techniques.
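The abstract names series and parallel residual adapters without defining them. The sketch below is one plausible reading, not the authors' implementation: it assumes each adapter is a small domain-specific 1x1 convolution (`alpha`) attached to a shared, domain-agnostic layer, either applied to that layer's output (series) or alongside it on the same input (parallel). The helper names, the use of NumPy, and the 1x1 stand-in for the shared layer are all assumptions made for illustration, and normalization layers are omitted.

```python
import numpy as np


def conv1x1(x, w):
    """1x1 convolution: mix the channels of x (C_in, H, W) with w (C_out, C_in)."""
    return np.einsum("oc,chw->ohw", w, x)


def series_adapter(x, shared_layer, alpha):
    """Series form (assumed): adapt the shared layer's output, with an identity skip."""
    y = shared_layer(x)
    return y + conv1x1(y, alpha)


def parallel_adapter(x, shared_layer, alpha):
    """Parallel form (assumed): add an adapted copy of the input to the shared output."""
    return shared_layer(x) + conv1x1(x, alpha)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(4, 8, 8))            # one feature map: 4 channels, 8x8
    shared_w = rng.normal(size=(4, 4))        # hypothetical shared weights

    def shared(t):
        # Stand-in for a shared convolutional layer (a 1x1 conv keeps the demo short).
        return conv1x1(t, shared_w)

    alpha = 0.01 * rng.normal(size=(4, 4))    # small per-domain adapter weights
    print(series_adapter(x, shared, alpha).shape)
    print(parallel_adapter(x, shared, alpha).shape)
```

In both forms, setting `alpha` to zero recovers the shared layer unchanged, which is consistent with the claim that specialized models differ from the shared network by only a small number of parameters.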

CVPR 2018

Datasets

Results from the Paper

| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| Continual Learning | visual domain decathlon (10 tasks) | Parallel Res. adapt. | decathlon discipline (Score) | 3412 | # 3 |
| Continual Learning | visual domain decathlon (10 tasks) | Parallel Res. adapt. | Avg. Accuracy | 78.07 | # 2 |
| Continual Learning | visual domain decathlon (10 tasks) | Series Res. adapt. | decathlon discipline (Score) | 3159 | # 5 |
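
The "decathlon discipline (Score)" metric is not defined on this page. As a rough guide, the sketch below assumes the scoring rule published with the Visual Decathlon challenge: each domain awards up to 1000 points, computed from the test error relative to a cutoff set at twice a baseline error, with exponent 2. The function name, argument names, and example error values are hypothetical and chosen only for illustration.

```python
def decathlon_score(test_errors, baseline_errors, gamma=2.0, max_points=1000.0):
    """Sum per-domain points under the assumed Visual Decathlon scoring rule.

    test_errors and baseline_errors are per-domain error rates in [0, 1].
    """
    total = 0.0
    for err, base in zip(test_errors, baseline_errors):
        e_max = 2.0 * base                    # errors at or above this cutoff score zero
        scale = max_points / e_max ** gamma   # a perfect result earns max_points
        total += scale * max(0.0, e_max - err) ** gamma
    return total


if __name__ == "__main__":
    baseline = [0.2] * 10                     # hypothetical baseline errors, 10 domains
    print(decathlon_score(baseline, baseline))
    print(decathlon_score([0.0] * 10, baseline))
```

Under this rule a model that exactly matches the baseline on all ten domains scores 2500 and a perfect model scores 10000, which gives some context for the scores in the table above.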

CIFAR-100