Elucidating the Exposure Bias in Diffusion Models

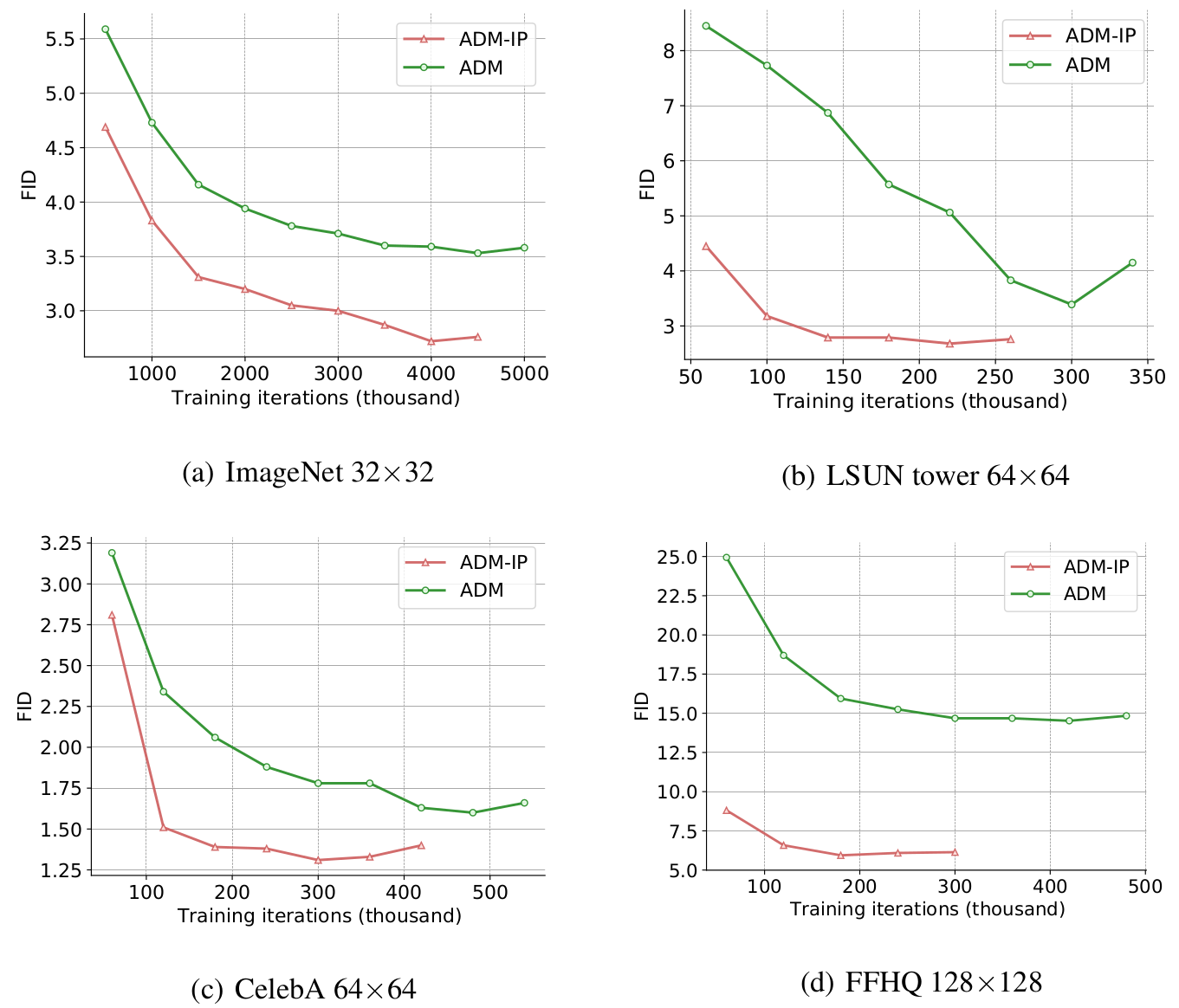

Diffusion models have demonstrated impressive generative capabilities, but their *exposure bias* problem, described as the input mismatch between training and sampling, has not been explored in depth. In this paper, we systematically investigate the exposure bias problem in diffusion models by first analytically modelling the sampling distribution, based on which we then identify the prediction error at each sampling step as the root cause of the exposure bias issue. Furthermore, we discuss potential solutions to this issue and propose an intuitive metric for it. Along with the elucidation of exposure bias, we propose a simple yet effective training-free method called Epsilon Scaling to alleviate it. We show that Epsilon Scaling explicitly moves the sampling trajectory closer to the vector field learned in the training phase by scaling down the network output, mitigating the input mismatch between training and sampling. Experiments on various diffusion frameworks (ADM, DDIM, EDM, LDM, DiT, PFGM++) verify the effectiveness of our method. Remarkably, our ADM-ES, as a state-of-the-art stochastic sampler, obtains 2.17 FID on CIFAR-10 under 100-step unconditional generation. The code is available at https://github.com/forever208/ADM-ES and https://github.com/forever208/EDM-ES.
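To make the idea of "scaling down the network output" concrete, the following is a minimal sketch of one sampling step in which the predicted noise is divided by a factor slightly larger than 1 before it is used. It is not taken from the linked repositories. The deterministic DDIM-style update is used only for brevity, and the linear beta schedule, the constant `eps_scale`, and the function names `make_alpha_bars` and `ddim_step` are all assumptions made for illustration.

```python
import numpy as np


def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule (assumed)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)


def ddim_step(x_t, eps_pred, alpha_bar_t, alpha_bar_prev, eps_scale=1.0):
    """One deterministic DDIM-style step with the predicted noise scaled down.

    eps_scale is a hypothetical constant; eps_scale = 1.0 gives the
    unmodified update, and values slightly above 1 shrink the network output.
    """
    # Scale down the network output before it enters the update.
    eps = eps_pred / eps_scale
    # Estimate the clean sample implied by x_t and the (scaled) noise.
    x0_pred = (x_t - np.sqrt(1.0 - alpha_bar_t) * eps) / np.sqrt(alpha_bar_t)
    # Move to the previous (less noisy) timestep.
    return np.sqrt(alpha_bar_prev) * x0_pred + np.sqrt(1.0 - alpha_bar_prev) * eps


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    alpha_bars = make_alpha_bars(10)
    x_t = rng.standard_normal(4)
    eps_pred = rng.standard_normal(4)  # stand-in for a trained network's output
    baseline = ddim_step(x_t, eps_pred, alpha_bars[5], alpha_bars[4])
    scaled = ddim_step(x_t, eps_pred, alpha_bars[5], alpha_bars[4], eps_scale=1.005)
    print(np.abs(baseline - scaled).max())
```

Because the change is confined to one division on the network output, a sketch like this needs no retraining, which is consistent with the method being described as training-free. How the scale should be chosen, and whether it should vary with the timestep, is not stated in the abstract.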

CIFAR-10

CelebA

FFHQ

LSUN

ImageNet-64