ESceme: Vision-and-Language Navigation with Episodic Scene Memory

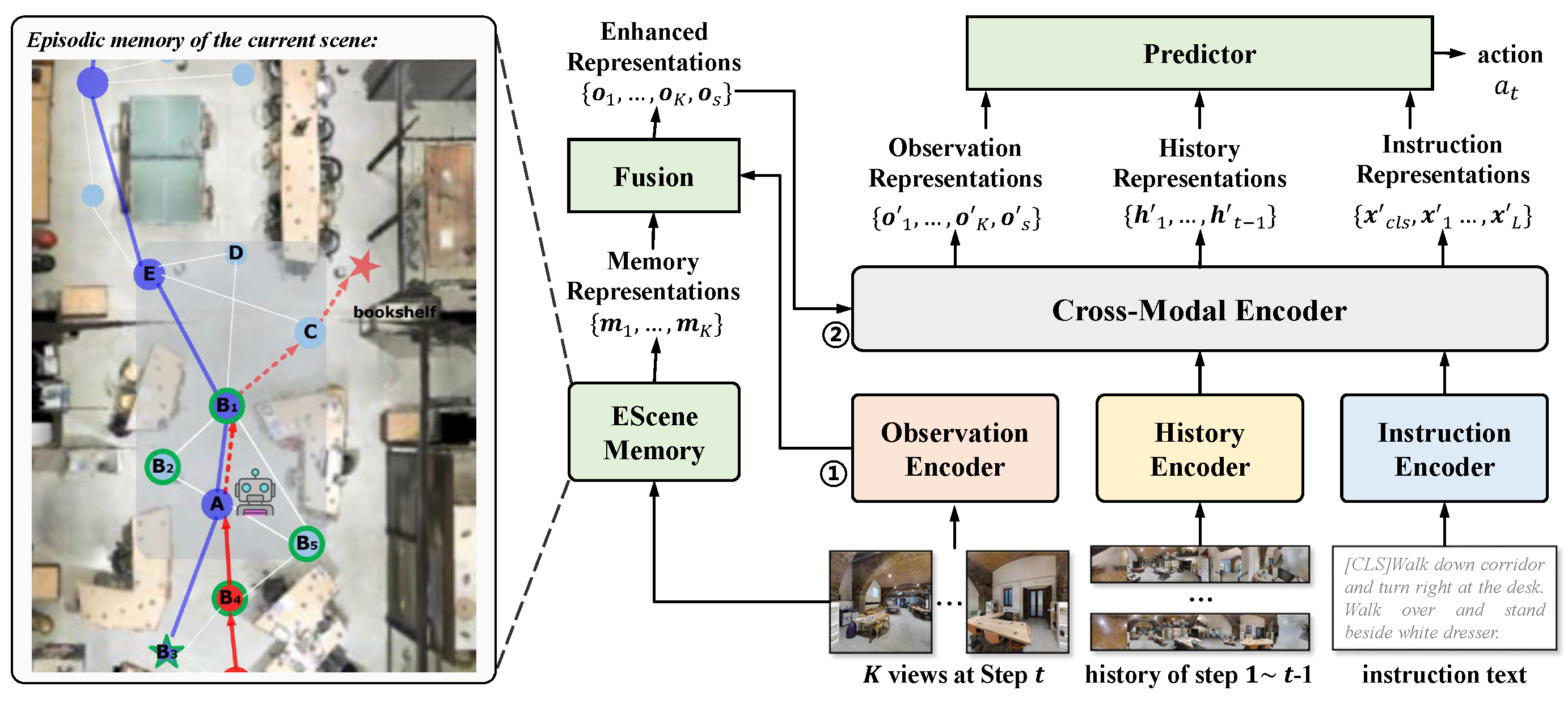

Vision-and-language navigation (VLN) simulates a visual agent that follows natural-language navigation instructions in real-world scenes. Existing approaches, such as beam search, pre-exploration, and dynamic or hierarchical history encoding, have made enormous progress in navigating new environments. To balance generalization and efficiency, we memorize previously visited scenes in addition to the ongoing route while navigating. In this work, we introduce a mechanism of Episodic Scene memory (ESceme) for VLN that wakes an agent's memories of past visits when it enters the current scene. The episodic scene memory allows the agent to envision a bigger picture when making its next prediction. This way, the agent learns to utilize dynamically updated information instead of merely adapting to static observations. We provide a simple yet effective implementation of ESceme that enhances the accessible views at each location and progressively completes the memory while navigating. We verify the superiority of ESceme on short-horizon (R2R), long-horizon (R4R), and vision-and-dialog (CVDN) VLN tasks. ESceme also wins first place on the CVDN leaderboard. Code is available at https://github.com/qizhust/esceme.
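To make the idea of a progressively completed scene memory concrete, here is a minimal Python sketch. It is not the authors' implementation: the class name `EpisodicSceneMemory`, its methods, and the mean-pooling rule are all assumptions chosen for illustration, since the abstract does not specify how views are enhanced. The sketch stores one feature vector per visited viewpoint in each scene and enhances a view by averaging it with remembered features of adjacent viewpoints.

```python
from collections import defaultdict


class EpisodicSceneMemory:
    """Hypothetical per-scene memory (illustrative only, not the ESceme code)."""

    def __init__(self):
        # scene_id -> {viewpoint_id: feature vector remembered from a past visit}
        self._features = defaultdict(dict)
        # scene_id -> {viewpoint_id: set of adjacent viewpoint ids}
        self._edges = defaultdict(lambda: defaultdict(set))

    def update(self, scene_id, viewpoint_id, feature, neighbors=()):
        """Record what was observed at a viewpoint; the memory grows as the agent moves."""
        self._features[scene_id][viewpoint_id] = list(feature)
        for other in neighbors:
            self._edges[scene_id][viewpoint_id].add(other)
            self._edges[scene_id][other].add(viewpoint_id)

    def enhance(self, scene_id, viewpoint_id, feature):
        """Assumed rule: mean-pool the current feature with remembered neighbor features."""
        known = self._features[scene_id]
        pool = [list(feature)]
        for other in sorted(self._edges[scene_id][viewpoint_id]):
            if other in known:
                pool.append(known[other])
        return [sum(vec[i] for vec in pool) / len(pool) for i in range(len(feature))]


memory = EpisodicSceneMemory()
# An earlier episode visited viewpoint "a", which is adjacent to "b".
memory.update("house_1", "a", [1.0, 0.0], neighbors=["b"])
# A later episode in the same scene sees an enhanced view at "b".
revisited = memory.enhance("house_1", "b", [0.0, 1.0])
# A scene with no stored memory leaves the view unchanged.
unseen = memory.enhance("house_2", "b", [0.0, 1.0])
```

In this sketch a revisited scene yields a view that blends in past observations, while an unseen scene falls back to the raw observation, which mirrors the contrast the abstract draws between dynamically updated information and static observations.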
