Fast End-to-End Trainable Guided Filter

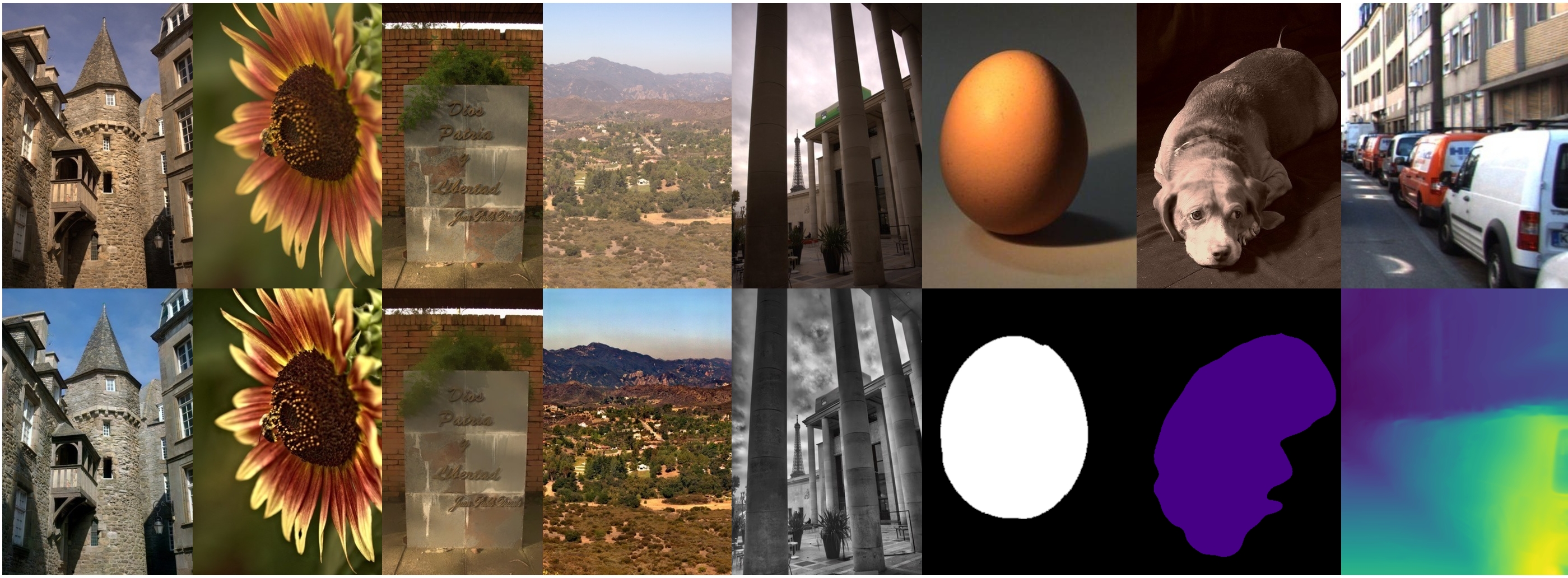

Dense pixel-wise image prediction has been advanced by harnessing the capabilities of Fully Convolutional Networks (FCNs). One central issue of FCNs is their limited capacity to handle joint upsampling. To address this problem, we present a novel building block for FCNs, the guided filtering layer, which is designed to efficiently generate a high-resolution output given the corresponding low-resolution output and a high-resolution guidance map. The layer contains learnable parameters and can be integrated with FCNs and jointly optimized through end-to-end training. To take further advantage of end-to-end training, we plug in a trainable transformation function that generates a task-specific guidance map. Based on the proposed layer, we present a general framework for pixel-wise image prediction, named the deep guided filtering network (DGF). The proposed network is evaluated on five image processing tasks. Experiments on the MIT-Adobe FiveK dataset demonstrate that DGF runs 10-100 times faster and achieves state-of-the-art performance. We also show that DGF helps improve the performance of multiple computer vision tasks.
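The joint upsampling described in the abstract (low-resolution output plus high-resolution guidance map in, high-resolution output out) can be illustrated with the classical guided filter. The block below is a minimal pure-Python sketch under stated assumptions, not the paper's layer: it has no learnable parameters, it uses nearest-neighbour upsampling for simplicity, and the names `box_mean`, `upsample_nearest`, `guided_upsample`, the window radius `r`, and the regularizer `eps` are chosen here for illustration only.

```python
def box_mean(img, r):
    """Mean over a (2r+1)x(2r+1) window, clipped at the image border."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ys = range(max(0, y - r), min(h, y + r + 1))
            xs = range(max(0, x - r), min(w, x + r + 1))
            total = sum(img[j][i] for j in ys for i in xs)
            out[y][x] = total / (len(ys) * len(xs))
    return out


def mul(a, b):
    """Element-wise product of two equally sized 2-D lists."""
    return [[p * q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def upsample_nearest(img, h, w):
    """Nearest-neighbour resize of a 2-D list to h x w (illustrative choice)."""
    lh, lw = len(img), len(img[0])
    return [[img[y * lh // h][x * lw // w] for x in range(w)] for y in range(h)]


def guided_upsample(guide_lr, out_lr, guide_hr, r=1, eps=1e-4):
    """Fit a local linear model out = a * guide + b at low resolution,
    then apply the upsampled coefficients to the high-resolution guide."""
    mean_i = box_mean(guide_lr, r)
    mean_o = box_mean(out_lr, r)
    mean_io = box_mean(mul(guide_lr, out_lr), r)
    mean_ii = box_mean(mul(guide_lr, guide_lr), r)

    h, w = len(guide_lr), len(guide_lr[0])
    a = [[0.0] * w for _ in range(h)]
    b = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            cov = mean_io[y][x] - mean_i[y][x] * mean_o[y][x]
            var = mean_ii[y][x] - mean_i[y][x] ** 2
            a[y][x] = cov / (var + eps)  # eps regularizes flat regions
            b[y][x] = mean_o[y][x] - a[y][x] * mean_i[y][x]

    # Smooth the coefficients, then bring them to the guide's resolution.
    H, W = len(guide_hr), len(guide_hr[0])
    a_hr = upsample_nearest(box_mean(a, r), H, W)
    b_hr = upsample_nearest(box_mean(b, r), H, W)
    return [[a_hr[y][x] * guide_hr[y][x] + b_hr[y][x] for x in range(W)]
            for y in range(H)]
```

Because only the coefficients `a` and `b` are estimated at low resolution, the expensive statistics are computed on the small image, while edges in the result follow the high-resolution guide. In a trainable setting, the guide itself would be produced by a learned transformation rather than supplied directly, as the abstract describes.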

CVPR 2018