FewJoint: A Few-shot Learning Benchmark for Joint Language Understanding

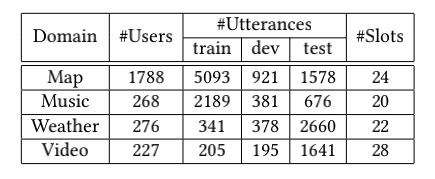

Few-shot learning (FSL) is one of the key future steps in machine learning and has raised a lot of attention. However, in contrast to the rapid development in other domains, such as Computer Vision, the progress of FSL in Nature Language Processing (NLP) is much slower. One of the key reasons for this is the lacking of public benchmarks. NLP FSL researches always report new results on their own constructed few-shot datasets, which is pretty inefficient in results comparison and thus impedes cumulative progress. In this paper, we present FewJoint, a novel Few-Shot Learning benchmark for NLP. Different from most NLP FSL research that only focus on simple N-classification problems, our benchmark introduces few-shot joint dialogue language understanding, which additionally covers the structure prediction and multi-task reliance problems. This allows our benchmark to reflect the real-word NLP complexity beyond simple N-classification. Our benchmark is used in the few-shot learning contest of SMP2020-ECDT task-1. We also provide a compatible FSL platform to ease experiment set-up.

PDF Abstract