Improving Deep Representation Learning via Auxiliary Learnable Target Coding

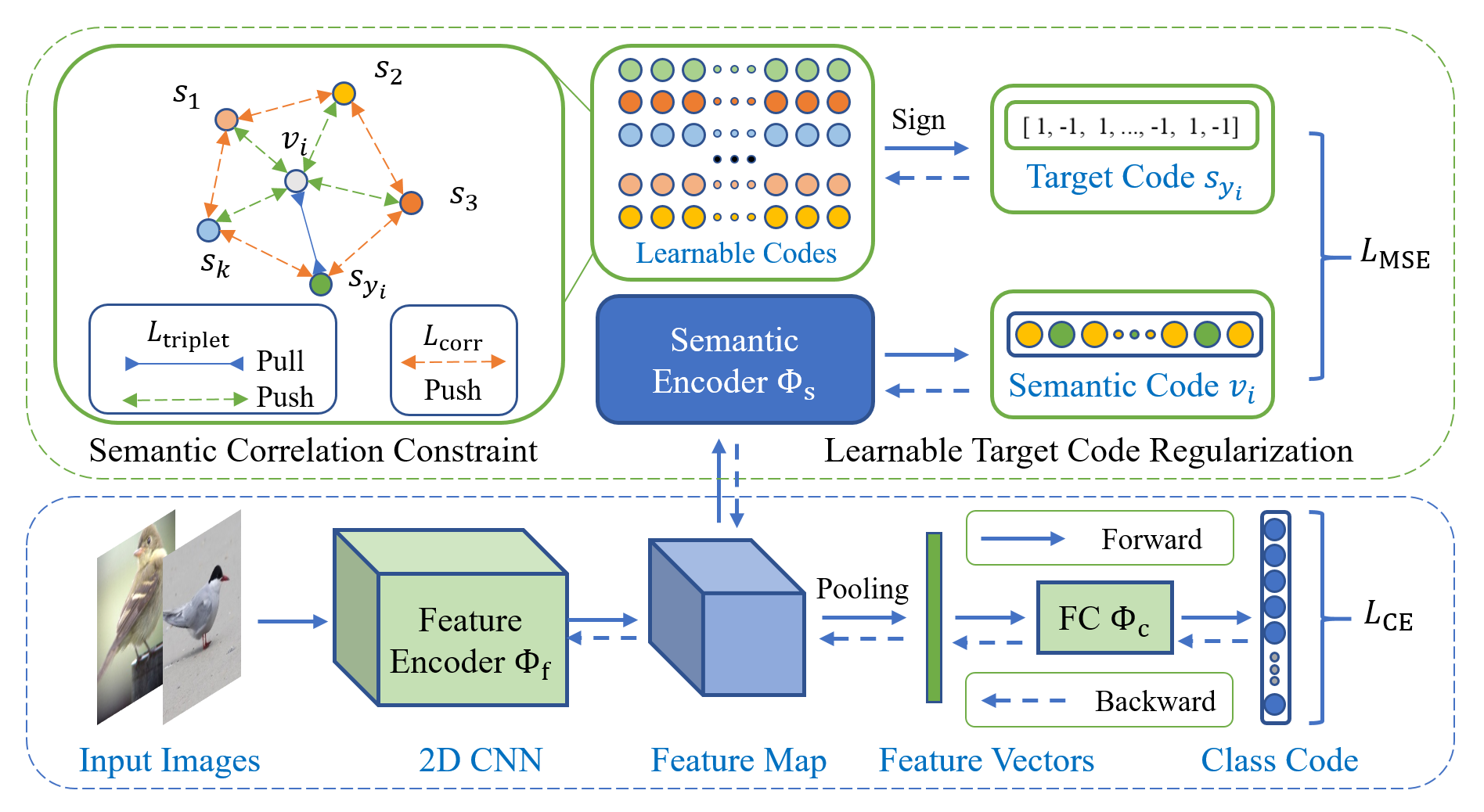

Deep representation learning is a subfield of machine learning that focuses on learning meaningful and useful representations of data through deep neural networks. However, existing methods for semantic classification typically employ pre-defined target codes such as one-hot and Hadamard codes, which either fail to model inter-class correlation or model it with limited flexibility. In light of this, this paper introduces a novel learnable target coding as an auxiliary regularization of deep representation learning, which not only incorporates latent dependency across classes but also imposes geometric properties of the target codes on the representation space. Specifically, a margin-based triplet loss and a correlation consistency loss on the proposed target codes are designed to encourage more discriminative representations: the former enlarges between-class margins in representation space, and the latter favors equal semantic correlation among the learnable target codes. Experimental results on several popular visual classification and retrieval benchmarks demonstrate the effectiveness of our method in improving representation learning, especially for imbalanced data.
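
To make the two auxiliary terms concrete, the following is a minimal sketch of how such losses might look, not the paper's actual formulation. Everything here is an assumption for illustration: the function names, the squared Euclidean distance, the hardest-negative hinge, and the use of squared off-diagonal cosine similarity as a stand-in for "equal semantic correlation". In a real model the codes would be trainable parameters and `z` would be a network feature projected into code space.

```python
import math

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def triplet_loss(z, label, codes, margin=1.0):
    """Hinge loss (assumed form): the feature z should be closer to its own
    class code than to the nearest other-class code by at least `margin`."""
    pos = sq_dist(z, codes[label])
    neg = min(sq_dist(z, c) for k, c in enumerate(codes) if k != label)
    return max(0.0, margin + pos - neg)

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def correlation_consistency_loss(codes):
    """Assumed regularizer: mean squared cosine similarity over all pairs of
    distinct class codes, which is zero when the codes are mutually orthogonal."""
    k = len(codes)
    total = 0.0
    for i in range(k):
        for j in range(k):
            if i != j:
                total += cosine(codes[i], codes[j]) ** 2
    return total / (k * (k - 1))

# Two classes with orthogonal 2-dimensional codes (hypothetical values).
codes = [[1.0, 0.0], [0.0, 1.0]]
well_separated = triplet_loss([1.0, 0.0], 0, codes)  # feature sits on its own code
ambiguous = triplet_loss([0.5, 0.5], 0, codes)       # feature is equidistant from both codes
code_penalty = correlation_consistency_loss(codes)
```

Under these assumptions, the two terms would be added to the usual classification loss with small weights, so that they act as auxiliary regularizers rather than replacing the main objective.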

ImageNet

CIFAR-100

CUB-200-2011

iNaturalist
