Information Removal at the Bottleneck in Deep Neural Networks

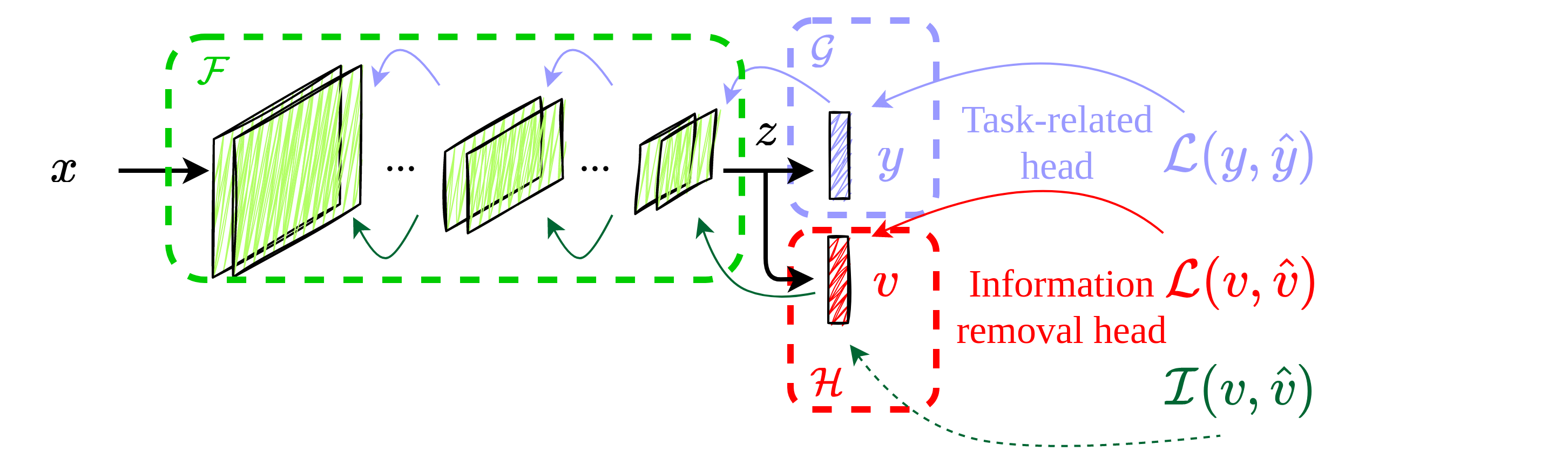

Deep learning models are nowadays broadly deployed to solve an incredibly large variety of tasks. Commonly, leveraging the availability of "big data", deep neural networks are trained as black boxes, minimizing an objective function at their output. This, however, does not allow control over the propagation of specific features through the model, such as gender or race, when solving an uncorrelated task. This raises issues both in the privacy domain (the propagation of unwanted information) and in terms of bias (these features may potentially be used to solve the given task). In this work we propose IRENE, a method to achieve information removal at the bottleneck of deep neural networks, which explicitly minimizes the estimated mutual information between the features to be kept "private" and the target. Experiments on a synthetic dataset and on CelebA validate the effectiveness of the proposed approach and open the road towards the development of approaches guaranteeing information removal in deep neural networks.
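To make the minimized quantity concrete, here is a minimal sketch of a plug-in (empirical-frequency) estimate of mutual information. It compares a discrete private attribute with another discrete variable, such as a quantized bottleneck code or a predicted target. This is an illustrative assumption and not IRENE's estimator. The function name `plugin_mutual_information` is hypothetical. A training objective would also need a differentiable estimate rather than raw counts.

```python
import math
from collections import Counter


def plugin_mutual_information(private, other):
    """Hypothetical helper: plug-in estimate of I(private; other) in nats.

    Both arguments are equal-length sequences of discrete (hashable) values.
    Probabilities are replaced by empirical frequencies, so this only
    illustrates the quantity; it is not differentiable and not IRENE's estimator.
    """
    if len(private) != len(other) or len(private) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(private)
    joint_counts = Counter(zip(private, other))   # counts of (s, z) pairs
    private_counts = Counter(private)             # marginal counts of s
    other_counts = Counter(other)                 # marginal counts of z
    mi = 0.0
    for (s, z), c in joint_counts.items():
        # p(s,z) * log(p(s,z) / (p(s) p(z))), written with raw counts:
        # (c/n) * log(c * n / (count(s) * count(z)))
        mi += (c / n) * math.log(c * n / (private_counts[s] * other_counts[z]))
    return mi


# Usage: a variable that copies the private attribute leaks ln 2 nats,
# while an independent one leaks none.
print(plugin_mutual_information([0, 0, 1, 1], [0, 0, 1, 1]))
print(plugin_mutual_information([0, 0, 1, 1], [0, 1, 0, 1]))
```

With perfectly correlated binary inputs the estimate is ln 2 ≈ 0.693 nats. With independent inputs it is 0. Note that the plug-in estimator is biased upward on small samples, which is one reason learned or variational estimators are commonly preferred inside a training loop.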

