Interpreting and Disentangling Feature Components of Various Complexity from DNNs

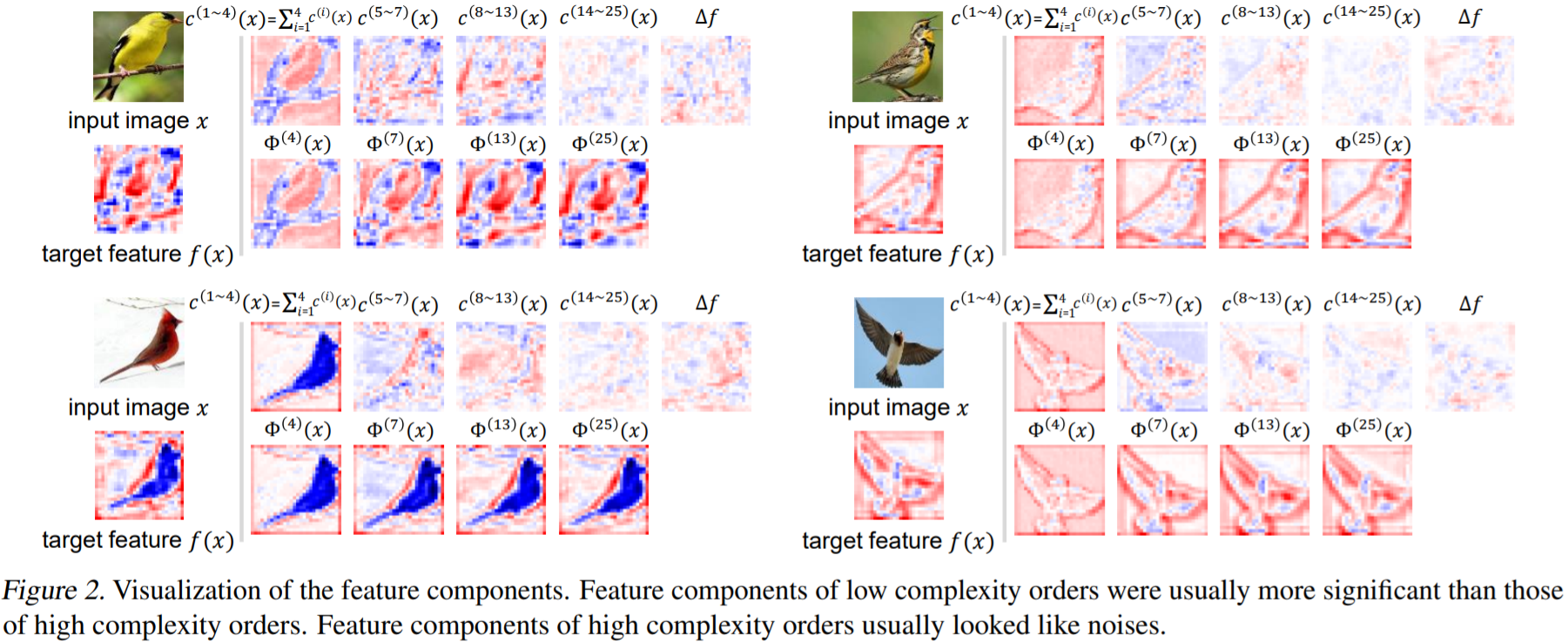

This paper aims to define, quantify, and analyze the complexity of the features learned by a DNN. We propose a generic definition of feature complexity. Given the feature of a certain layer in the DNN, our method disentangles it into feature components of different complexity orders. We further design a set of metrics to evaluate the reliability, the effectiveness, and the significance of over-fitting of these feature components. Furthermore, we discover a close relationship between feature complexity and the performance of DNNs. As a generic mathematical tool, feature complexity and the proposed metrics can also be used to analyze the success of network compression and knowledge distillation.

CIFAR-10

CUB-200-2011
