Leveraging Locality in Abstractive Text Summarization

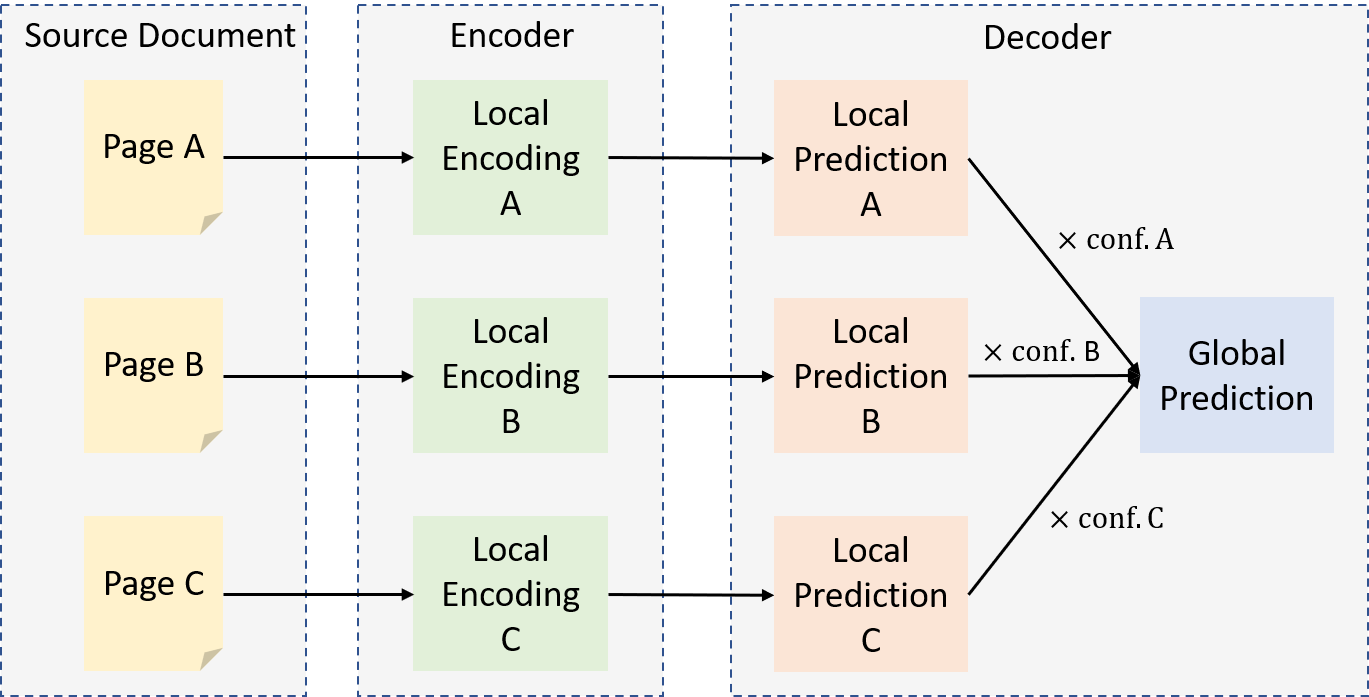

Neural attention models have achieved significant improvements on many natural language processing tasks. However, the quadratic memory complexity of the self-attention module with respect to the input length hinders their application to long-text summarization. Instead of designing more efficient attention modules, we investigate whether models with a restricted context can perform competitively with memory-efficient attention models that maintain a global context by treating the input as a single sequence. Our model is applied to individual pages, each containing a part of the input grouped by the principle of locality, during both encoding and decoding. We empirically investigate three kinds of locality in text summarization at different levels of granularity, ranging from sentences to documents. Our experimental results show that our model outperforms strong baselines with efficient attention modules, and our analysis provides further insights into our locality-aware modeling strategy.
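As a rough illustration of the page idea, the sketch below packs consecutive sentences into pages under a token budget and compares a simple proxy for self-attention memory (the sum of squared sequence lengths) with and without paging. The function names `group_into_pages` and `attention_cost`, the whitespace tokenization, and the greedy packing rule are all assumptions made for this example; they are not taken from the paper's implementation.

```python
from typing import List


def group_into_pages(sentences: List[str], max_tokens: int) -> List[List[str]]:
    """Greedily pack consecutive sentences into pages (hypothetical helper).

    Tokens are counted by whitespace splitting, which is an assumption;
    a single sentence longer than max_tokens still gets its own page.
    """
    pages: List[List[str]] = []
    current: List[str] = []
    current_len = 0
    for sentence in sentences:
        n_tokens = len(sentence.split())
        # Close the current page when this sentence would exceed the budget.
        if current and current_len + n_tokens > max_tokens:
            pages.append(current)
            current, current_len = [], 0
        current.append(sentence)
        current_len += n_tokens
    if current:
        pages.append(current)
    return pages


def attention_cost(page_lengths: List[int]) -> int:
    """Proxy for self-attention memory: sum of squared page lengths."""
    return sum(n * n for n in page_lengths)


if __name__ == "__main__":
    doc = ["a b c", "d e", "f g h i", "j"]
    pages = group_into_pages(doc, max_tokens=5)
    lengths = [sum(len(s.split()) for s in page) for page in pages]
    print(pages)
    print("single sequence:", attention_cost([sum(lengths)]))
    print("paged:", attention_cost(lengths))
```

Under this proxy, splitting an input of n tokens into k equal pages reduces the cost from n² to n²/k, which is the memory argument behind restricting attention to a local context.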

CNN/Daily Mail

Multi-News
