Mix-Pooling Strategy for Attention Mechanism

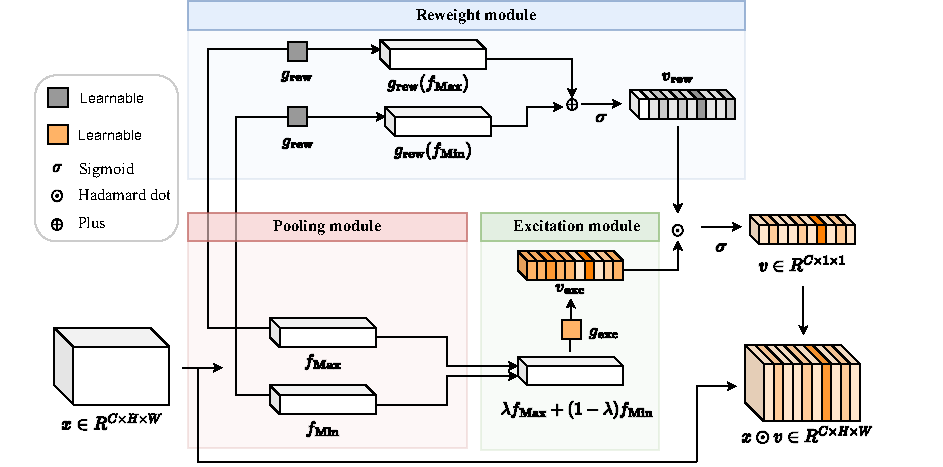

Recently, many effective attention modules have been proposed to boost model performance by exploiting the internal information of convolutional neural networks in computer vision. Most previous works, however, overlook the design of the pooling strategy in the attention mechanism: they take global average pooling for granted, which hinders further improvement of the attention mechanism. We empirically find and verify that a simple linear combination of global max-pooling and global min-pooling can produce pooling strategies that match or exceed the performance of global average pooling. Based on this observation, we propose SPEM, a simple yet effective attention module that combines a self-adaptive pooling strategy based on global max-pooling and global min-pooling with a lightweight module for producing the attention map. The effectiveness of SPEM is demonstrated by extensive experiments on widely used benchmark datasets and popular attention networks.
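The pooling idea in the abstract can be illustrated with a minimal NumPy sketch. It is not the SPEM implementation: the abstract does not give the exact formulation, so the fixed mixing coefficient `lam`, the function names `mix_pool` and `channel_gate`, and the sigmoid gate are all assumptions made for illustration. In SPEM the combination is described as self-adaptive, which suggests the coefficients are learned rather than fixed.

```python
import numpy as np


def mix_pool(x, lam=0.5):
    """Linear combination of global max- and min-pooling.

    x   : feature map of shape (N, C, H, W)
    lam : hypothetical fixed mixing coefficient (assumed here, not from the paper)
    Returns a per-channel descriptor of shape (N, C).
    """
    gmax = x.max(axis=(2, 3))  # global max-pooling over the spatial dims
    gmin = x.min(axis=(2, 3))  # global min-pooling over the spatial dims
    return lam * gmax + (1.0 - lam) * gmin


def channel_gate(x, lam=0.5):
    """Rescale each channel by a sigmoid of its pooled descriptor.

    The sigmoid gate is an assumed stand-in for the paper's lightweight
    attention-map module, whose details the abstract does not specify.
    """
    s = mix_pool(x, lam)
    gate = 1.0 / (1.0 + np.exp(-s))       # values in (0, 1), shape (N, C)
    return x * gate[:, :, None, None]     # broadcast over H and W


rng = np.random.default_rng(0)
x = rng.standard_normal((2, 4, 8, 8))     # toy batch: 2 samples, 4 channels
pooled = mix_pool(x, lam=0.5)
gated = channel_gate(x, lam=0.5)
print(pooled.shape, gated.shape)
```

Setting `lam=1.0` recovers plain global max-pooling and `lam=0.0` recovers global min-pooling, so the single coefficient spans the family of pooling strategies between the two.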


CIFAR-10


CIFAR-100
