Multi-Agent Reinforcement Learning for Unmanned Aerial Vehicle Coordination by Multi-Critic Policy Gradient Optimization

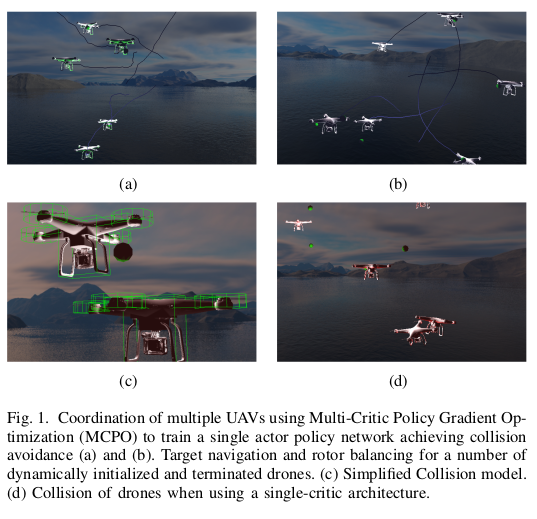

Recent technological progress in the development of Unmanned Aerial Vehicles (UAVs), together with decreasing acquisition costs, makes the application of drone fleets attractive for a wide variety of tasks. In agriculture, disaster management, search and rescue operations, and commercial and military applications, the advantage of deploying a fleet of drones stems from their ability to cooperate autonomously. Multi-Agent Reinforcement Learning approaches that optimize a neural-network-based control policy, such as the best-performing actor-critic policy gradient algorithms, struggle to effectively back-propagate errors from distinct reward signal sources; they tend to favor lucrative signals while neglecting coordination and the exploitation of previously learned similarities. We propose a Multi-Critic Policy Optimization architecture with multiple value-estimating networks and a novel advantage function that optimizes a stochastic actor policy network to achieve optimal coordination of agents. We then apply the algorithm to several tasks that require the collaboration of multiple drones in a physics-based reinforcement learning environment. Our approach achieves stable policy network updates and similar reward signal development as the number of agents increases. The resulting policy achieves optimal coordination and compliance with constraints such as collision avoidance.
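The abstract does not spell out the proposed advantage function. As a rough illustration of the general multi-critic idea only, the sketch below assumes one critic per reward source, computes a Generalized Advantage Estimate (GAE) for each stream, normalizes each stream separately, and sums the results, so that a high-magnitude reward (e.g. a task reward) cannot drown out a small one (e.g. a collision penalty). The function names `gae` and `multi_critic_advantage`, the parameters `gamma` and `lam`, and the normalize-then-sum combination are hypothetical choices for illustration, not the authors' advantage function. The sketch also assumes the rollout contains no episode terminations.

```python
import numpy as np


def gae(rewards, values, gamma=0.99, lam=0.95):
    """Generalized Advantage Estimation for a single reward stream.

    rewards: shape (T,); values: shape (T + 1,), the last entry being the
    bootstrap value. Assumes no episode boundary inside the rollout.
    """
    T = len(rewards)
    adv = np.zeros(T)
    last = 0.0
    for t in reversed(range(T)):
        # One-step TD error of this stream's critic.
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        # Exponentially weighted sum of future TD errors.
        last = delta + gamma * lam * last
        adv[t] = last
    return adv


def multi_critic_advantage(reward_streams, value_streams,
                           gamma=0.99, lam=0.95, eps=1e-8):
    """Hypothetical combination: normalize each critic's advantage, then sum.

    Per-stream normalization to zero mean and unit std removes each reward
    source's scale, so no single source dominates the policy gradient.
    """
    combined = None
    for r, v in zip(reward_streams, value_streams):
        a = gae(np.asarray(r, dtype=float), np.asarray(v, dtype=float), gamma, lam)
        a = (a - a.mean()) / (a.std() + eps)
        combined = a if combined is None else combined + a
    return combined


# Two hypothetical reward sources over a 4-step rollout:
# a large task reward and a small collision penalty.
task_r = [10.0, 0.0, 10.0, 0.0]
collision_r = [0.0, -0.1, 0.0, -0.1]
task_v = np.zeros(5)  # critic 1 value estimates (incl. bootstrap)
coll_v = np.zeros(5)  # critic 2 value estimates (incl. bootstrap)
adv = multi_critic_advantage([task_r, collision_r], [task_v, coll_v])
print(adv.shape)  # (4,)
```

Because each stream is normalized before summation, multiplying the task reward by 100 leaves the combined advantage essentially unchanged; an unnormalized sum would instead let that stream dominate.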
