Normalized Loss Functions for Deep Learning with Noisy Labels

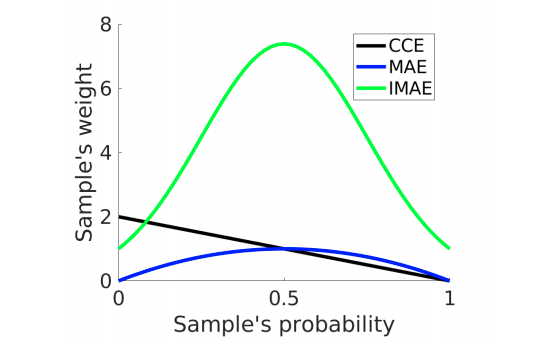

Robust loss functions are essential for training accurate deep neural networks (DNNs) in the presence of noisy (incorrect) labels. It has been shown that the commonly used Cross Entropy (CE) loss is not robust to noisy labels. While new loss functions have been designed, they are only partially robust. In this paper, we show theoretically that, by applying a simple normalization, any loss can be made robust to noisy labels. However, in practice, simply being robust is not sufficient for a loss function to train accurate DNNs. By investigating several robust loss functions, we find that they suffer from underfitting. To address this, we propose a framework for building robust loss functions called Active Passive Loss (APL). APL combines two robust loss functions that mutually boost each other. Experiments on benchmark datasets demonstrate that the family of new loss functions created by our APL framework consistently outperforms state-of-the-art methods by large margins, especially under large noise rates such as 60% or 80% incorrect labels.
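The abstract does not spell out the normalization or how the two losses are combined, so the following is only a minimal sketch under stated assumptions: normalization is taken to mean dividing a loss by its sum over all K possible labels, and the combination is taken to be a weighted sum of a normalized CE term and an MAE term. The function names and the weights `alpha` and `beta` are illustrative choices, not identifiers from the paper's code.

```python
import numpy as np


def softmax(logits):
    # Numerically stable softmax over the class axis.
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def normalized_ce(logits, labels, eps=1e-7):
    # Assumed normalization: CE for the given label divided by the
    # sum of CE over every possible label, so each value lies in [0, 1].
    log_p = np.log(np.clip(softmax(logits), eps, 1.0))
    rows = np.arange(len(labels))
    ce_given = -log_p[rows, labels]   # loss w.r.t. the observed label
    ce_all = -log_p.sum(axis=-1)      # loss summed over all K labels
    return ce_given / ce_all


def mae(logits, labels):
    # Mean absolute error between the one-hot label and the softmax
    # output; for one-hot targets this equals 2 * (1 - p_y).
    p = softmax(logits)
    rows = np.arange(len(labels))
    return 2.0 * (1.0 - p[rows, labels])


def apl_loss(logits, labels, alpha=1.0, beta=1.0):
    # Assumed combination: weighted sum of the two robust terms,
    # averaged over the batch. alpha and beta are hypothetical weights.
    per_sample = alpha * normalized_ce(logits, labels) + beta * mae(logits, labels)
    return float(per_sample.mean())


if __name__ == "__main__":
    logits = np.array([[2.0, 0.5, -1.0], [0.1, 0.2, 0.3]])
    labels = np.array([0, 2])
    print(apl_loss(logits, labels))
```

Under this assumed definition, the normalized loss summed over all candidate labels is constant (equal to 1) for any input, which is the kind of symmetry property that robustness arguments for noisy labels typically rely on.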

ICML 2020

Results from the Paper

Ranked #30 on Image Classification on mini WebVision 1.0 (ImageNet Top-1 Accuracy metric)

CIFAR-10

CIFAR-100

WebVision
