Rocket Launching: A Universal and Efficient Framework for Training Well-performing Light Net

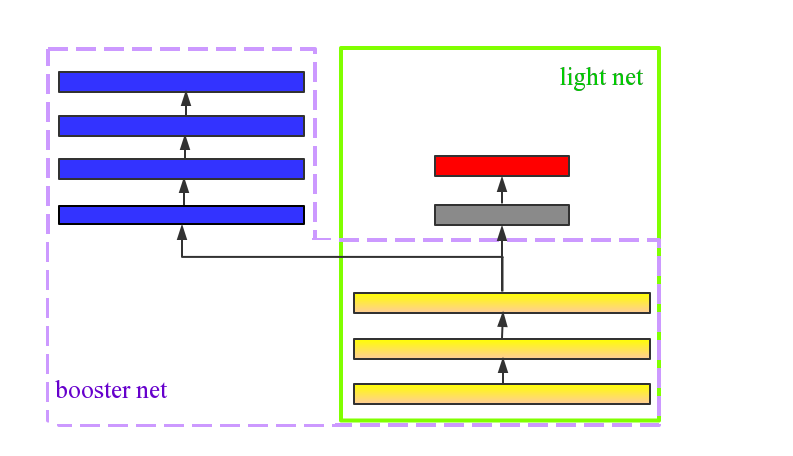

Models applied on real time response task, like click-through rate (CTR) prediction model, require high accuracy and rigorous response time. Therefore, top-performing deep models of high depth and complexity are not well suited for these applications with the limitations on the inference time. In order to further improve the neural networks' performance given the time and computational limitations, we propose an approach that exploits a cumbersome net to help train the lightweight net for prediction. We dub the whole process rocket launching, where the cumbersome booster net is used to guide the learning of the target light net throughout the whole training process. We analyze different loss functions aiming at pushing the light net to behave similarly to the booster net, and adopt the loss with best performance in our experiments. We use one technique called gradient block to improve the performance of the light net and booster net further. Experiments on benchmark datasets and real-life industrial advertisement data present that our light model can get performance only previously achievable with more complex models.

PDF Abstract

CIFAR-10

CIFAR-10

CIFAR-100

CIFAR-100

SVHN

SVHN