Selective Network Linearization for Efficient Private Inference

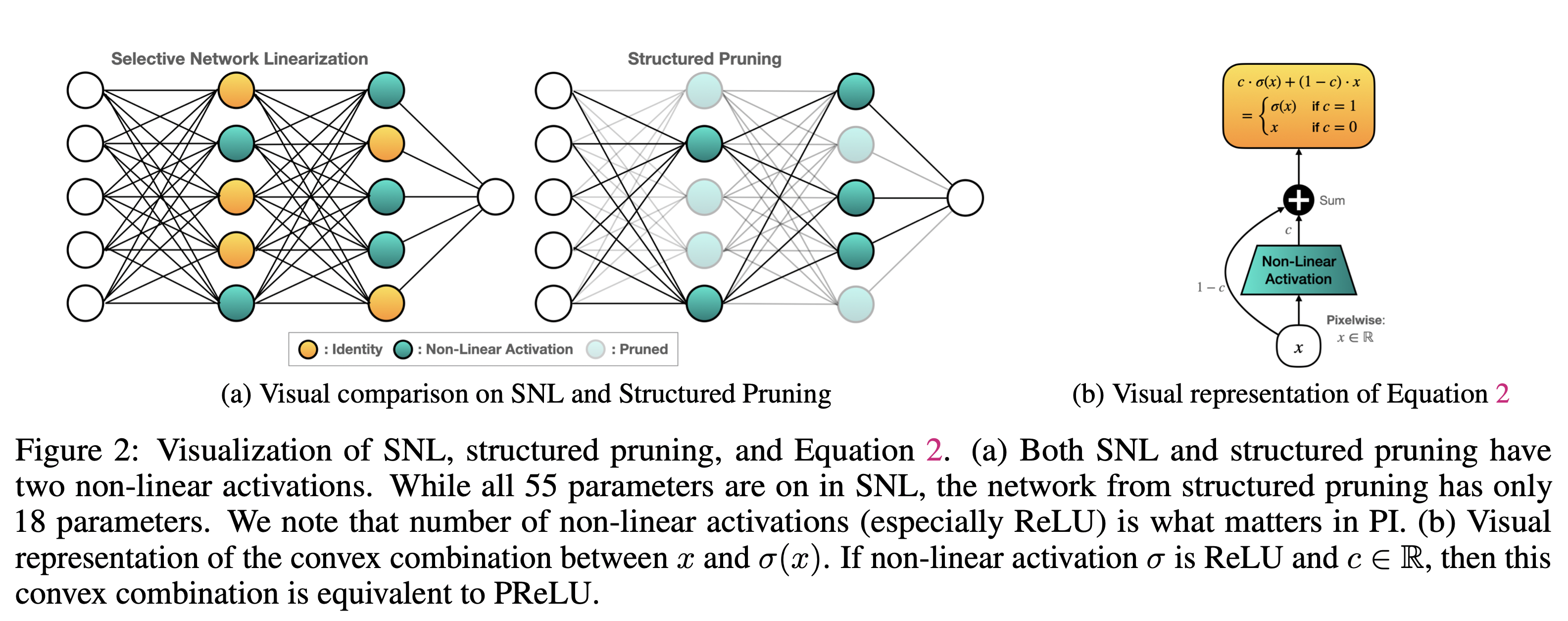

Private inference (PI) enables inference directly on cryptographically secure data. While promising to address many privacy issues, it has seen limited use due to extreme runtimes. Unlike plaintext inference, where latency is dominated by FLOPs, in PI non-linear functions (namely ReLU) are the bottleneck. Thus, practical PI demands novel ReLU-aware optimizations. To reduce PI latency, we propose a gradient-based algorithm that selectively linearizes ReLUs while maintaining prediction accuracy. We evaluate our algorithm on several standard PI benchmarks. The results demonstrate up to $4.25\%$ more accuracy (iso-ReLU count at 50K) or $2.2\times$ less latency (iso-accuracy at 70%) than the current state of the art, and they advance the Pareto frontier across the latency-accuracy space. To complement the empirical results, we present a "no free lunch" theorem that sheds light on how and when network linearization is possible while maintaining prediction accuracy. Public code is available at https://github.com/NYU-DICE-Lab/selective_network_linearization.
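To make "selectively linearizing ReLUs" concrete, the following is a minimal sketch rather than the released implementation. It assumes each activation unit carries a mask value `c`, where `c = 1` keeps the ReLU and `c = 0` replaces it with the identity, and that a sparsity penalty on the mask is what pushes a gradient-based optimizer toward fewer ReLUs. The abstract does not specify these details; the function names, the threshold, and the L1 form of the penalty are illustrative assumptions.

```python
# Illustrative sketch only: all names here are hypothetical and are not
# taken from the paper's released code.

def relu(x):
    """Standard ReLU on a scalar."""
    return x if x > 0.0 else 0.0

def masked_relu(xs, mask):
    """Elementwise c*relu(x) + (1-c)*x.

    c = 1 keeps the ReLU; c = 0 makes the unit linear (identity).
    """
    return [c * relu(x) + (1.0 - c) * x for x, c in zip(xs, mask)]

def binarize(mask, threshold=0.5):
    """Round a soft mask to {0, 1}; the threshold is an assumed choice."""
    return [1.0 if c > threshold else 0.0 for c in mask]

def relu_count(mask):
    """Number of ReLUs that remain after binarization."""
    return int(sum(mask))

def l1_penalty(mask, lam):
    """Assumed sparsity term added to the training loss to shrink the mask."""
    return lam * sum(abs(c) for c in mask)

activations = [-2.0, 0.5, -1.0, 3.0]
soft_mask = [0.9, 0.1, 0.2, 0.8]   # would be learned by gradient descent
hard_mask = binarize(soft_mask)    # units 0 and 3 keep their ReLU
outputs = masked_relu(activations, hard_mask)
remaining = relu_count(hard_mask)
```

In this toy example, two of the four units keep their ReLU, so the PI cost attributable to non-linear operations would be halved, while the linearized units pass their inputs through unchanged.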
