Visually Grounded Reasoning across Languages and Cultures

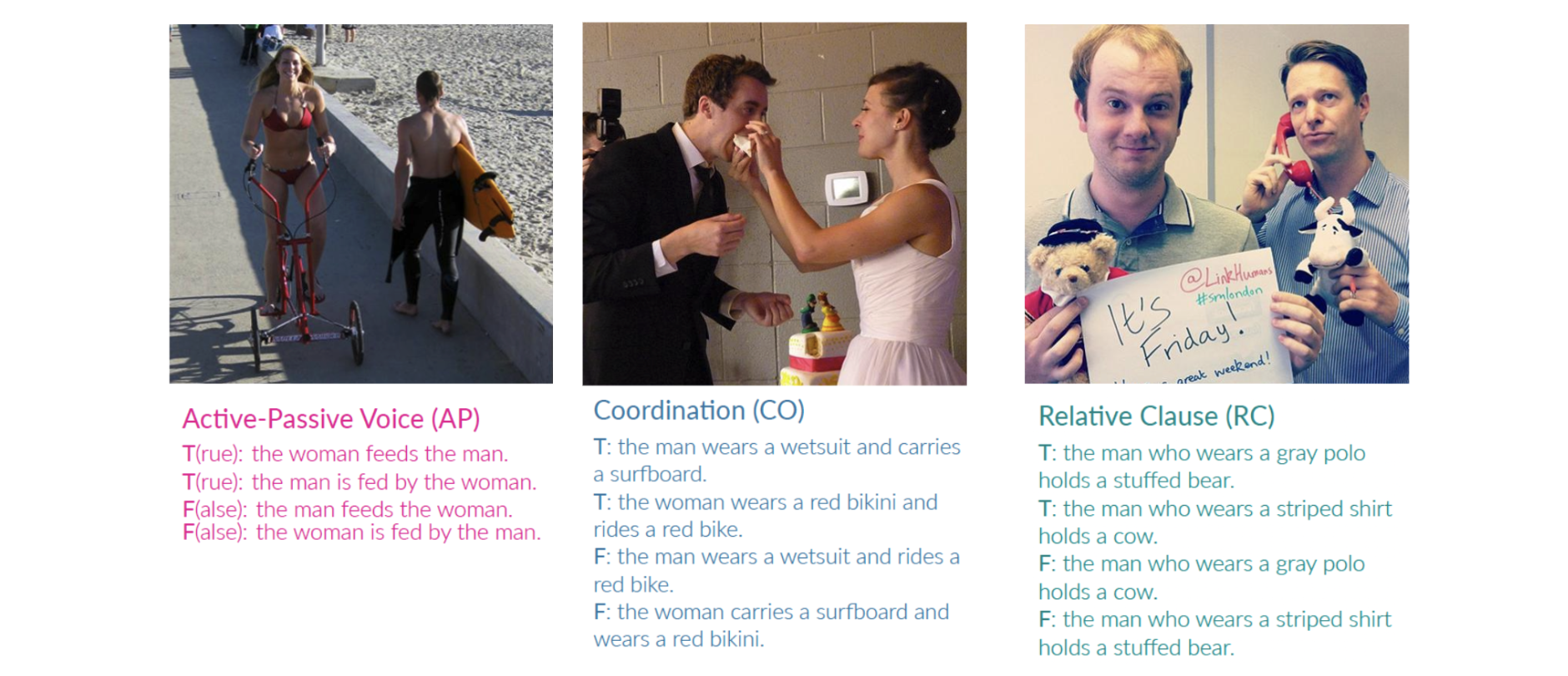

The design of widespread vision-and-language datasets and pre-trained encoders directly adopts, or draws inspiration from, the concepts and images of ImageNet. While one can hardly overestimate how much this benchmark contributed to progress in computer vision, it is mostly derived from lexical databases and image queries in English, resulting in source material with a North American or Western European bias. Therefore, we devise a new protocol to construct an ImageNet-style hierarchy representative of more languages and cultures. In particular, we let the selection of both concepts and images be entirely driven by native speakers, rather than scraping them automatically. Specifically, we focus on a typologically diverse set of languages, namely, Indonesian, Mandarin Chinese, Swahili, Tamil, and Turkish. On top of the concepts and images obtained through this new protocol, we create a multilingual dataset for Multicultural Reasoning over Vision and Language (MaRVL) by eliciting statements from native speaker annotators about pairs of images. The task consists of discriminating whether each grounded statement is true or false. We establish a series of baselines using state-of-the-art models and find that their cross-lingual transfer performance lags dramatically behind supervised performance in English. These results invite us to reassess the robustness and accuracy of current state-of-the-art models beyond a narrow domain, but also open up new exciting challenges for the development of truly multilingual and multicultural systems.

EMNLP 2021

| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| Zero-Shot Cross-Lingual Transfer | MaRVL | xUNITER | Accuracy (%) | 56.1 | #1 |
| Zero-Shot Cross-Lingual Visual Reasoning | MaRVL | xUNITER | Accuracy (%) | 56.1 | #7 |
| Zero-Shot Cross-Lingual Visual Reasoning | MaRVL | mUNITER | Accuracy (%) | 54.0 | #10 |
| Zero-Shot Cross-Lingual Transfer | MaRVL | mUNITER | Accuracy (%) | 54.0 | #2 |

MaRVL

NLVR

IGLUE
