Formation Energy

29 papers with code • 14 benchmarks • 8 datasets

On the QM9 dataset, the numbers reported in the table are the mean absolute error (MAE) in eV on the target variable U0, divided by U0's chemical accuracy of 0.043 eV.
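
As a worked example of that normalization, here is a minimal Python sketch. The function name `normalized_mae` and the sample values are hypothetical and only illustrate the arithmetic; a score below 1.0 means the model beats chemical accuracy.

```python
import numpy as np

# Chemical accuracy for U0 on QM9, as stated above (eV)
U0_CHEMICAL_ACCURACY_EV = 0.043


def normalized_mae(y_true_ev, y_pred_ev, chem_acc_ev=U0_CHEMICAL_ACCURACY_EV):
    """Mean absolute error in eV divided by the chemical accuracy (hypothetical helper)."""
    y_true = np.asarray(y_true_ev, dtype=float)
    y_pred = np.asarray(y_pred_ev, dtype=float)
    mae = np.mean(np.abs(y_true - y_pred))
    return mae / chem_acc_ev


# Hypothetical targets and predictions in eV: absolute errors 0.01, 0.02, 0.00
score = normalized_mae([0.10, 0.25, 0.40], [0.11, 0.23, 0.40])
print(round(score, 3))  # 0.233
```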

Libraries

Use these libraries to find Formation Energy models and implementations.

Most implemented papers

Periodic Graph Transformers for Crystal Material Property Prediction

Our Matformer is designed to be invariant to periodicity and can capture repeating patterns explicitly.
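
To make "invariant to periodicity" concrete, the sketch below builds a generic radius graph over periodic images of a crystal and checks that translating every atom by the same fractional offset leaves the edge list unchanged. This is a standard periodic neighbour search written for illustration, not Matformer's own graph construction; the function name `periodic_edges` and the example cell are hypothetical.

```python
import itertools

import numpy as np


def periodic_edges(frac_coords, lattice, cutoff):
    """Directed edges (i, j, distance) to every periodic image of j within `cutoff` of i.

    Generic radius-graph sketch, not Matformer's construction. Only the 27 cells
    around the home cell are searched, so `cutoff` must be smaller than the
    cell's shortest perpendicular width.
    """
    frac = np.asarray(frac_coords, dtype=float) % 1.0  # wrap atoms into the unit cell
    lat = np.asarray(lattice, dtype=float)              # rows are lattice vectors
    edges = []
    for shift in itertools.product((-1, 0, 1), repeat=3):
        offset = np.array(shift, dtype=float) @ lat     # Cartesian translation of this image
        for i, j in itertools.product(range(len(frac)), repeat=2):
            if i == j and shift == (0, 0, 0):
                continue                                # no self-loop inside the home cell
            d = np.linalg.norm((frac[j] - frac[i]) @ lat + offset)
            if d <= cutoff:
                edges.append((i, j, round(float(d), 6)))
    return sorted(edges)


# CsCl-type cubic cell (a = 4.0) with two atoms; the cutoff catches the 8 body-diagonal neighbours
lattice = 4.0 * np.eye(3)
frac = np.array([[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]])
edges = periodic_edges(frac, lattice, cutoff=3.5)
shifted = periodic_edges(frac + np.array([0.3, 0.1, 0.9]), lattice, cutoff=3.5)
print(len(edges), edges == shifted)  # 16 True
```

Wrapping coordinates into the cell before the search is what makes the result independent of where the unit cell's origin happens to sit.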

Linear-scaling kernels for protein sequences and small molecules outperform deep learning while providing uncertainty quantitation and improved interpretability

We compare the performance of xGPR with the reported performance of various deep learning models on 20 benchmarks, including small molecule, protein sequence and tabular data.

TensorNet: Cartesian Tensor Representations for Efficient Learning of Molecular Potentials

The development of efficient machine learning models for molecular systems representation is becoming crucial in scientific research.

Matbench Discovery -- A framework to evaluate machine learning crystal stability predictions

The top three models are universal interatomic potentials (UIPs), the winning methodology for ML-guided materials discovery, achieving F1 scores of ~0.6 for crystal stability classification and discovery acceleration factors (DAF) of up to 5x on the first 10k most stable predictions compared to dummy selection from our test set.
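
The two metrics above can be computed from binary stability labels as in this sketch. It assumes the stable/unstable labels have already been derived (for example by thresholding predicted energies above the convex hull, which is not shown) and takes DAF as precision divided by the fraction of stable materials in the test set, i.e. relative to random selection. The helper `f1_and_daf` and the toy data are hypothetical; restricting to the "first 10k most stable predictions" would correspond to flagging only the top-ranked candidates.

```python
import numpy as np


def f1_and_daf(is_stable, predicted_stable):
    """F1 score and discovery acceleration factor for binary stability labels.

    DAF is taken here as precision divided by the prevalence of stable materials,
    which is the precision that random ("dummy") selection would achieve.
    """
    y = np.asarray(is_stable, dtype=bool)
    p = np.asarray(predicted_stable, dtype=bool)
    tp = np.sum(y & p)   # predicted stable and actually stable
    fp = np.sum(~y & p)  # predicted stable but actually unstable
    fn = np.sum(y & ~p)  # stable materials the model missed
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    daf = precision / y.mean()
    return float(f1), float(daf)


# Hypothetical test set: 10 candidates, 2 truly stable; the model flags 3, catching both
truth = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
preds = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
f1, daf = f1_and_daf(truth, preds)
print(round(f1, 3), round(daf, 2))  # 0.8 3.33
```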

MT-CGCNN: Integrating Crystal Graph Convolutional Neural Network with Multitask Learning for Material Property Prediction

Some of the major challenges in developing such models are (i) the limited availability of materials data compared with other fields and (ii) the lack of a universal descriptor of materials for predicting their various properties.

DScribe: Library of Descriptors for Machine Learning in Materials Science

DScribe is a software package for machine learning that provides popular feature transformations ("descriptors") for atomistic materials simulations.
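
For a sense of what such a descriptor computes, here is a from-scratch NumPy sketch of one classic descriptor, the Coulomb matrix, which is among those DScribe provides. It deliberately does not call DScribe, so it says nothing about DScribe's own class signatures or options; the function name `coulomb_matrix` and the H2 geometry are illustrative.

```python
import numpy as np


def coulomb_matrix(atomic_numbers, positions):
    """Coulomb matrix: 0.5 * Z_i**2.4 on the diagonal, Z_i * Z_j / |R_i - R_j| elsewhere."""
    Z = np.asarray(atomic_numbers, dtype=float)
    R = np.asarray(positions, dtype=float)
    dist = np.linalg.norm(R[:, None, :] - R[None, :, :], axis=-1)  # pairwise distances
    with np.errstate(divide="ignore"):
        M = np.outer(Z, Z) / dist  # diagonal divides by zero -> inf, overwritten below
    np.fill_diagonal(M, 0.5 * Z ** 2.4)
    return M


# H2 with a bond length of 0.74 (units follow whatever the positions are given in)
M = coulomb_matrix([1, 1], [[0.0, 0.0, 0.0], [0.0, 0.0, 0.74]])
print(M)
```

The raw matrix depends on atom ordering, which is why libraries typically offer sorted or eigenvalue-based variants before feeding it to a model.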

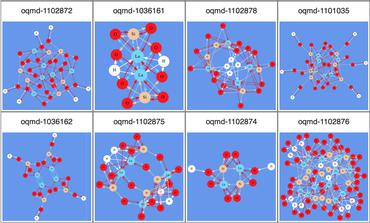

Crystal Graph Neural Networks for Data Mining in Materials Science

This paper proposes crystal graph neural networks (CGNNs) that use no bond distances, and introduces a scale-invariant graph coordinator that builds the crystal graphs on which the CGNN models are trained, using a dataset derived from a theoretical materials database.
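
One way to see why a graph without bond distances can be scale-invariant: if edges are chosen by neighbour rank (k nearest neighbours) and no distance is stored, uniformly stretching the structure leaves the graph unchanged. The sketch below uses plain k-nearest-neighbour selection on a non-periodic cluster for brevity; it is a swapped-in illustration of the idea, not the paper's graph coordinator, and `knn_edges` and the coordinates are hypothetical.

```python
import numpy as np


def knn_edges(positions, k):
    """Directed edges (i, j) from each atom to its k nearest neighbours; no distances kept."""
    R = np.asarray(positions, dtype=float)
    dist = np.linalg.norm(R[:, None, :] - R[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)             # an atom is not its own neighbour
    nearest = np.argsort(dist, axis=1)[:, :k]  # neighbour ranks, not values, define edges
    return {(i, int(j)) for i in range(len(R)) for j in nearest[i]}


# Hypothetical 5-atom cluster with all pairwise distances distinct (so no ranking ties)
pts = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.3, 0.0],
                [0.0, 0.0, 1.7], [1.1, 1.2, 0.4]])
print(knn_edges(pts, 2) == knn_edges(2.5 * pts, 2))  # True: uniform scaling keeps the graph
```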

Heterogeneous Molecular Graph Neural Networks for Predicting Molecule Properties

As they carry great potential for modeling complex interactions, graph neural network (GNN)-based methods have been widely used to predict quantum mechanical properties of molecules.

Atomistic Line Graph Neural Network for Improved Materials Property Predictions

Graph neural networks (GNNs) have been shown to provide substantial performance improvements for atomistic material representation and modeling compared with descriptor-based machine learning models.
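
As the title indicates, this approach works with an atomistic line graph: each bond of the atom graph becomes a node, and two bonds that share an atom are connected by an edge carrying the bond angle at that atom. The sketch below builds such a line graph from an explicit bond list. It is a simplified illustration of the concept, not ALIGNN's implementation; `line_graph` and the water-like geometry are hypothetical.

```python
import itertools

import numpy as np


def line_graph(positions, bonds):
    """Line graph of a bond list: one node per bond, one edge per pair of bonds
    that share an atom, labelled with the bond angle (degrees) at that atom."""
    R = np.asarray(positions, dtype=float)
    edges = []
    for p, q in itertools.combinations(range(len(bonds)), 2):
        shared = set(bonds[p]) & set(bonds[q])
        if len(shared) != 1:
            continue  # bonds are not adjacent
        center = shared.pop()
        (i,) = set(bonds[p]) - {center}
        (k,) = set(bonds[q]) - {center}
        v1, v2 = R[i] - R[center], R[k] - R[center]
        cos = np.dot(v1, v2) / (np.linalg.norm(v1) * np.linalg.norm(v2))
        angle = np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))
        edges.append(((p, q), float(angle)))
    return edges


# Water-like geometry: O at the origin, two O-H bonds of length 0.96 at 104.5 degrees
theta = np.radians(104.5)
pos = [[0.0, 0.0, 0.0], [0.96, 0.0, 0.0], [0.96 * np.cos(theta), 0.96 * np.sin(theta), 0.0]]
print(line_graph(pos, [(0, 1), (0, 2)]))  # one line-graph edge between bonds 0 and 1
```

Angles are the extra geometric information the line graph exposes that a plain atom-and-bond graph does not carry directly.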

Distributed Representations of Atoms and Materials for Machine Learning

To build effective models of the chemistry of materials, useful machine-based representations of atoms and their compounds are required.
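
Once each element has a vector representation, a common way to obtain a representation of a compound is to pool the atom vectors over its composition (sum, mean, or max pooling). The sketch below shows stoichiometry-weighted pooling with made-up 4-dimensional vectors; in practice the vectors would be learned, and this is not claimed to be the paper's exact procedure. `ATOM_VECTORS` and `composition_vector` are hypothetical names.

```python
import numpy as np

# Hypothetical atom vectors for illustration; real ones would be learned from data
ATOM_VECTORS = {
    "Na": np.array([0.9, -0.1, 0.3, 0.0]),
    "Cl": np.array([-0.4, 0.8, 0.1, 0.5]),
    "O": np.array([-0.6, 0.2, 0.7, 0.1]),
}


def composition_vector(composition, pooling="mean"):
    """Pool atom vectors, weighted by stoichiometric counts, into one material vector."""
    elements = list(composition)
    counts = np.array([composition[e] for e in elements], dtype=float)
    vecs = np.stack([ATOM_VECTORS[e] for e in elements])
    weighted_sum = (counts[:, None] * vecs).sum(axis=0)
    if pooling == "sum":
        return weighted_sum
    if pooling == "mean":
        return weighted_sum / counts.sum()
    if pooling == "max":
        return vecs.max(axis=0)  # element-wise max; counts do not matter
    raise ValueError(f"unknown pooling: {pooling}")


print(composition_vector({"Na": 1, "Cl": 1}))  # mean of the Na and Cl vectors
```

Mean pooling makes the representation independent of formula-unit size (NaCl and Na2Cl2 map to the same vector), while sum pooling does not; which is preferable depends on the target property.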

Materials Project

MD17

QM9

OQMD v1.2

OQM9HK

WBM