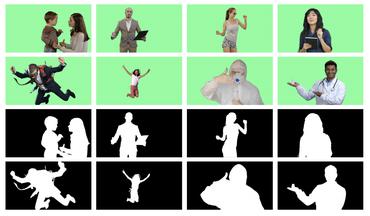

Video Matting

13 papers with code • 0 benchmarks • 3 datasets

Image credit: https://arxiv.org/pdf/2012.07810v1.pdf

Latest papers

Video Instance Matting

To remedy this deficiency, we propose Video Instance Matting (VIM), that is, estimating alpha mattes of each instance at each frame of a video sequence.
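
For context, an alpha matte is conventionally tied to an observed frame by the compositing relation I = αF + (1 − α)B, where F is the foreground, B the background, and α the per-pixel opacity. The sketch below applies that relation to one frame and one instance. The function names and the nested-list frame layout are illustrative assumptions; none of this is taken from the VIM paper.

```python
def composite_pixel(alpha, fg, bg):
    """Blend one RGB pixel: alpha * fg + (1 - alpha) * bg."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return tuple(alpha * f + (1.0 - alpha) * b for f, b in zip(fg, bg))


def composite_frame(alpha, fg, bg):
    """Composite a frame stored as rows of pixels (hypothetical layout).

    alpha: rows of floats in [0, 1]; fg, bg: rows of RGB tuples.
    """
    return [
        [composite_pixel(a, f, b) for a, f, b in zip(a_row, f_row, b_row)]
        for a_row, f_row, b_row in zip(alpha, fg, bg)
    ]
```

Matting runs this relation in reverse: given only the frames I, it estimates α (and often F) at every pixel, and in the video instance setting it does so separately for each instance in each frame.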

OmnimatteRF: Robust Omnimatte with 3D Background Modeling

Video matting has broad applications, from adding interesting effects to casually captured movies to assisting video production professionals.

Adaptive Human Matting for Dynamic Videos

The most recent efforts in video matting have focused on eliminating trimap dependency since trimap annotations are expensive and trimap-based methods are less adaptable for real-time applications.

Ultrahigh Resolution Image/Video Matting With Spatio-Temporal Sparsity

Instead, our method resorts to spatial and temporal sparsity for solving general UHR matting.

End-to-End Video Matting With Trimap Propagation

Although recent studies exploit video object segmentation methods to propagate the given trimaps, they suffer from inconsistent results.

VMFormer: End-to-End Video Matting with Transformer

In this paper, we propose VMFormer: a transformer-based end-to-end method for video matting.

One-Trimap Video Matting

A key aspect of OTVM is the joint modeling of trimap propagation and alpha prediction.

MODNet-V: Improving Portrait Video Matting via Background Restoration

In this work, we observe that instead of asking the user to explicitly provide a background image, we may recover it from the input video itself.

Robust High-Resolution Video Matting with Temporal Guidance

We introduce a robust, real-time, high-resolution human video matting method that achieves new state-of-the-art performance.

Attention-guided Temporally Coherent Video Object Matting

Experimental results show that our method can generate high-quality alpha mattes for various videos featuring appearance change, occlusion, and fast motion.

VideoMatte240K

VideoMatting108

PhotoMatte85