Video Salient Object Detection

20 papers with code • 10 benchmarks • 4 datasets

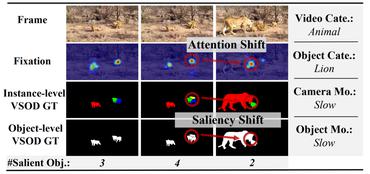

Video salient object detection (VSOD) is significantly essential for understanding the underlying mechanism behind HVS during free-viewing in general and instrumental to a wide range of real-world applications, e.g., video segmentation, video captioning, video compression, autonomous driving, robotic interaction, weakly supervised attention. Besides its academic value and practical significance, VSOD presents great difficulties due to the challenges carried by video data (diverse motion patterns, occlusions, blur, large object deformations, etc.) and the inherent complexity of human visual attention behavior (i.e., selective attention allocation, attention shift) during dynamic scenes. Online benchmark: http://dpfan.net/davsod.

( Image credit: Shifting More Attention to Video Salient Object Detection, CVPR2019-Best Paper Finalist )

Most implemented papers

Motion Guided Attention for Video Salient Object Detection

In this paper, we develop a multi-task motion guided video salient object detection network, which learns to accomplish two sub-tasks using two sub-networks, one sub-network for salient object detection in still images and the other for motion saliency detection in optical flow images.

Depth-Cooperated Trimodal Network for Video Salient Object Detection

However, existing video salient object detection (VSOD) methods only utilize spatiotemporal information and seldom exploit depth information for detection.

Saliency-Aware Geodesic Video Object Segmentation

Building on the observation that foreground areas are surrounded by the regions with high spatiotemporal edge values, geodesic distance provides an initial estimation for foreground and background.

Real-Time Salient Object Detection With a Minimum Spanning Tree

In this paper, we present a real-time salient object detection system based on the minimum spanning tree.

Structure-measure: A New Way to Evaluate Foreground Maps

Our new measure simultaneously evaluates region-aware and object-aware structural similarity between a SM and a GT map.

Pyramid Dilated Deeper ConvLSTM for Video Salient Object Detection

This paper proposes a fast video salient object detection model, based on a novel recurrent network architecture, named Pyramid Dilated Bidirectional ConvLSTM (PDB-ConvLSTM).

Shifting More Attention to Video Salient Object Detection

This is the first work that explicitly emphasizes the challenge of saliency shift, i. e., the video salient object(s) may dynamically change.

Semi-Supervised Video Salient Object Detection Using Pseudo-Labels

Specifically, we present an effective video saliency detector that consists of a spatial refinement network and a spatiotemporal module.

Exploring Rich and Efficient Spatial Temporal Interactions for Real Time Video Salient Object Detection

In this way, even though the overall video saliency quality is heavily dependent on its spatial branch, however, the performance of the temporal branch still matter.

A Novel Video Salient Object Detection Method via Semi-supervised Motion Quality Perception

Consequently, we can achieve a significant performance improvement by using this new training set to start a new round of network training.

FBMS

FBMS

SegTrack-v2

SegTrack-v2

FBMS-59

FBMS-59

ViSal

ViSal