MIAP (More Inclusive Annotations for People)

Introduced by Schumann et al. in A Step Toward More Inclusive People Annotations for Fairness

MIAP is a dataset of new annotations on a subset of the Open Images dataset. It contains bounding boxes and attributes for all of the people visible in those images. The original Open Images annotations are not exhaustive: they provide bounding boxes and attribute labels for only a subset of the classes in each image.

The MIAP dataset focuses on enabling ML Fairness research. It provides additional annotations for 100,000 images (70,000 from the training set and 30,000 from the validation and test sets), each of which contains at least one person bounding box in the original annotations.

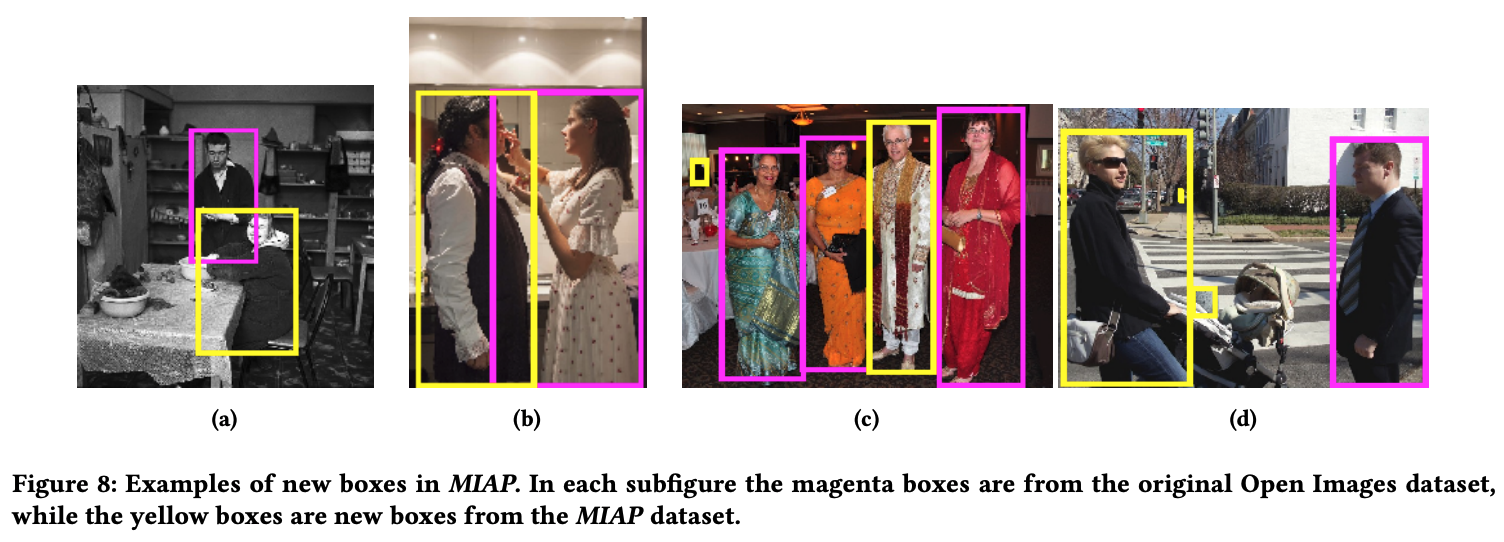

These additional annotations provide exhaustive bounding boxes for all people in an image. Each person box is further annotated with attribute labels for fairness research: the human-perceived gender presentation (predominantly feminine, predominantly masculine, or unknown) and the perceived age range (young, middle, older, or unknown) of the localized person. This procedure adds nearly 100,000 new boxes that were not annotated under the original labeling pipeline.
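To make the attribute scheme concrete, here is a minimal sketch of how one annotated person box could be represented and tallied in Python. The class and field names (`PersonBox`, `image_id`, `x_min`, and so on) are hypothetical and chosen for illustration; they are not the column names of the released MIAP files. Only the attribute values come from the description above.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class GenderPresentation(Enum):
    # Perceived gender presentation values listed in the dataset description.
    PREDOMINANTLY_FEMININE = "predominantly feminine"
    PREDOMINANTLY_MASCULINE = "predominantly masculine"
    UNKNOWN = "unknown"


class AgeRange(Enum):
    # Perceived age range values listed in the dataset description.
    YOUNG = "young"
    MIDDLE = "middle"
    OLDER = "older"
    UNKNOWN = "unknown"


@dataclass(frozen=True)
class PersonBox:
    # Hypothetical record layout, not the official MIAP schema.
    image_id: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    gender: GenderPresentation
    age: AgeRange


def attribute_counts(boxes):
    """Count boxes per (gender presentation, age range) pair."""
    return Counter((box.gender, box.age) for box in boxes)


# Made-up example records, for illustration only.
boxes = [
    PersonBox("img_a", 0.1, 0.2, 0.4, 0.9,
              GenderPresentation.PREDOMINANTLY_FEMININE, AgeRange.YOUNG),
    PersonBox("img_a", 0.5, 0.1, 0.8, 0.9,
              GenderPresentation.UNKNOWN, AgeRange.UNKNOWN),
    PersonBox("img_b", 0.0, 0.0, 0.3, 0.7,
              GenderPresentation.PREDOMINANTLY_FEMININE, AgeRange.YOUNG),
]
counts = attribute_counts(boxes)
```

Modeling the attributes as enumerations keeps the "unknown" value explicit, so boxes where annotators could not judge an attribute are counted rather than silently dropped.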

Annotations on the exhaustive set enable research into the fairness properties of models trained on partial annotations and the pipelines that produce these annotations.
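One way such an analysis might begin is by measuring, per attribute group, what fraction of the exhaustive person boxes were already present in the partial annotations. The sketch below is an assumption-laden illustration, not the method used by the dataset authors: boxes are plain `(x_min, y_min, x_max, y_max)` tuples, matching uses intersection over union (IoU) with an arbitrarily chosen threshold of 0.5, and the function names are hypothetical.

```python
from collections import defaultdict


def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def coverage_by_group(exhaustive, partial, threshold=0.5):
    """Fraction of exhaustive boxes, per group, matched by some partial box.

    exhaustive: list of (box, group_label) pairs for one image.
    partial: list of boxes from the original, non-exhaustive annotations.
    The 0.5 threshold is an illustrative assumption.
    """
    totals = defaultdict(int)
    covered = defaultdict(int)
    for box, group in exhaustive:
        totals[group] += 1
        if any(iou(box, p) >= threshold for p in partial):
            covered[group] += 1
    return {group: covered[group] / totals[group] for group in totals}


# Made-up boxes for a single image, for illustration only.
exhaustive = [
    ((0, 0, 10, 10), "young"),
    ((20, 20, 30, 30), "young"),
    ((40, 40, 50, 50), "older"),
]
partial = [(0, 0, 10, 10), (41, 41, 50, 50)]
coverage = coverage_by_group(exhaustive, partial)
```

A large gap in coverage between groups would suggest that the original labeling pipeline annotated some groups less completely than others, which is the kind of disparity the exhaustive annotations are meant to expose.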
