Stochastic Optimization

RMSProp

RMSProp is an unpublished adaptive learning-rate optimizer proposed by Geoff Hinton. The motivation is that gradient magnitudes can differ across weights and can change during training, which makes it hard to choose a single global learning rate. RMSProp addresses this by keeping an exponential moving average of each weight's squared gradient and dividing that weight's update by the square root of this average (the root mean square that gives the method its name). The updates are:

$$E\left[g^{2}\right]_{t} = \gamma E\left[g^{2}\right]_{t-1} + \left(1 - \gamma\right) g^{2}_{t}$$

$$\theta_{t+1} = \theta_{t} - \frac{\eta}{\sqrt{E\left[g^{2}\right]_{t} + \epsilon}}g_{t}$$

Hinton suggests setting $\gamma = 0.9$, and a good default for the learning rate $\eta$ is $0.001$.
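The following is a minimal pure-Python sketch of the two update equations, applied element-wise to a flat list of weights. The class name `RMSProp`, the zero initialization of $E\left[g^{2}\right]$, and the default $\epsilon = 10^{-8}$ are illustrative assumptions, not part of the original description. The demo uses two weights whose gradients differ by four orders of magnitude. Their first steps come out nearly equal, which is the behavior the motivation above calls for.

```python
import math
from typing import List


class RMSProp:
    """Minimal RMSProp sketch for a flat list of float parameters.

    Assumptions (not from the original text): E[g^2] starts at zero
    and epsilon defaults to 1e-8.
    """

    def __init__(self, n_params: int, lr: float = 0.001,
                 gamma: float = 0.9, eps: float = 1e-8) -> None:
        self.lr = lr          # eta, the global learning rate
        self.gamma = gamma    # decay rate of the moving average
        self.eps = eps        # small constant for numerical stability
        self.sq_avg = [0.0] * n_params  # E[g^2], one entry per weight

    def step(self, params: List[float], grads: List[float]) -> List[float]:
        """Return the parameters after one RMSProp update."""
        new_params = []
        for i, (theta, g) in enumerate(zip(params, grads)):
            # E[g^2]_t = gamma * E[g^2]_{t-1} + (1 - gamma) * g_t^2
            self.sq_avg[i] = self.gamma * self.sq_avg[i] + (1 - self.gamma) * g * g
            # theta_{t+1} = theta_t - eta / sqrt(E[g^2]_t + eps) * g_t
            new_params.append(theta - self.lr / math.sqrt(self.sq_avg[i] + self.eps) * g)
        return new_params


if __name__ == "__main__":
    # Two weights with gradients of very different magnitude.
    opt = RMSProp(n_params=2)
    params = [1.0, 1.0]
    updated = opt.step(params, [100.0, 0.01])
    steps = [p - u for p, u in zip(params, updated)]
    print(steps)  # both approximately 0.00316, i.e. 0.001 / sqrt(0.1)
```

Because the division is per weight, a weight with consistently large gradients gets a proportionally smaller effective learning rate, and a weight with small gradients gets a larger one. The constant $\epsilon$ only matters when $E\left[g^{2}\right]_{t}$ is tiny.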

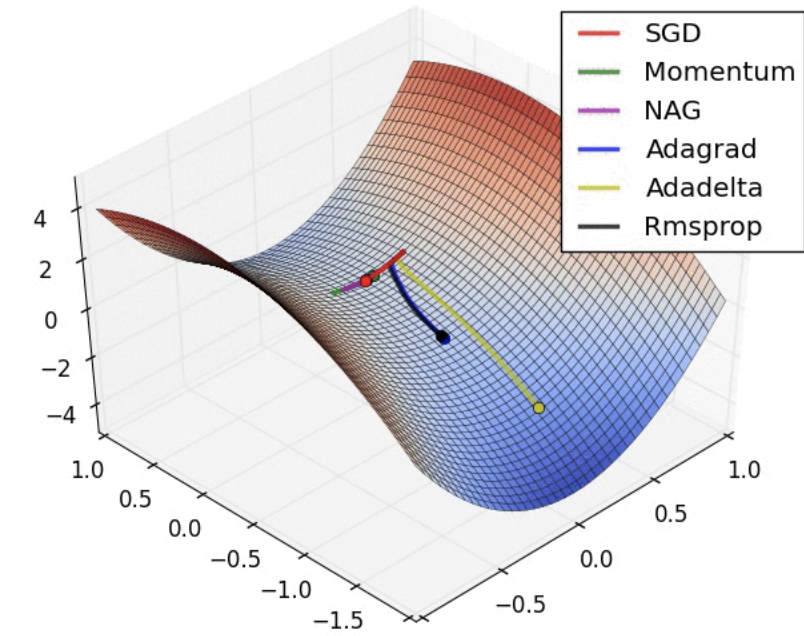

Image: Alec Radford

Tasks

| Task | Papers | Share |
|---|---|---|
| Image Classification | 86 | 13.69% |
| Classification | 35 | 5.57% |
| General Classification | 35 | 5.57% |
| Object Detection | 29 | 4.62% |
| Semantic Segmentation | 26 | 4.14% |
| Reinforcement Learning (RL) | 12 | 1.91% |
| Quantization | 10 | 1.59% |
| Multi-Task Learning | 9 | 1.43% |
| Instance Segmentation | 9 | 1.43% |
