Semantic Segmentation

5260 papers with code • 126 benchmarks • 313 datasets

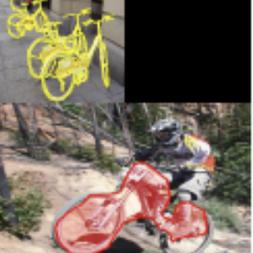

Semantic Segmentation is a computer vision task in which the goal is to categorize each pixel in an image into a class or object. The goal is to produce a dense pixel-wise segmentation map of an image, where each pixel is assigned to a specific class or object. Some example benchmarks for this task are Cityscapes, PASCAL VOC and ADE20K. Models are usually evaluated with the Mean Intersection-Over-Union (Mean IoU) and Pixel Accuracy metrics.

( Image credit: CSAILVision )

Libraries

Use these libraries to find Semantic Segmentation models and implementationsSubtasks

-

Tumor Segmentation

Tumor Segmentation

-

Panoptic Segmentation

Panoptic Segmentation

-

3D Semantic Segmentation

3D Semantic Segmentation

-

Weakly-Supervised Semantic Segmentation

Weakly-Supervised Semantic Segmentation

-

Weakly-Supervised Semantic Segmentation

Weakly-Supervised Semantic Segmentation

-

Scene Segmentation

Scene Segmentation

-

Semi-Supervised Semantic Segmentation

Semi-Supervised Semantic Segmentation

-

Real-Time Semantic Segmentation

Real-Time Semantic Segmentation

-

3D Part Segmentation

3D Part Segmentation

-

Unsupervised Semantic Segmentation

Unsupervised Semantic Segmentation

-

Road Segmentation

Road Segmentation

-

One-Shot Segmentation

One-Shot Segmentation

-

Bird's-Eye View Semantic Segmentation

Bird's-Eye View Semantic Segmentation

-

Crack Segmentation

Crack Segmentation

-

UNET Segmentation

UNET Segmentation

-

Universal Segmentation

Universal Segmentation

-

Class-Incremental Semantic Segmentation

Class-Incremental Semantic Segmentation

-

Polyp Segmentation

Polyp Segmentation

-

Vision-Language Segmentation

Vision-Language Segmentation

-

4D Spatio Temporal Semantic Segmentation

4D Spatio Temporal Semantic Segmentation

-

Histopathological Segmentation

Histopathological Segmentation

-

Attentive segmentation networks

Attentive segmentation networks

-

Text-Line Extraction

Text-Line Extraction

-

Aerial Video Semantic Segmentation

Aerial Video Semantic Segmentation

-

Amodal Panoptic Segmentation

Amodal Panoptic Segmentation

-

Robust BEV Map Segmentation

Robust BEV Map Segmentation

Most implemented papers

U-Net: Convolutional Networks for Biomedical Image Segmentation

There is large consent that successful training of deep networks requires many thousand annotated training samples.

Deep Residual Learning for Image Recognition

Deep residual nets are foundations of our submissions to ILSVRC & COCO 2015 competitions, where we also won the 1st places on the tasks of ImageNet detection, ImageNet localization, COCO detection, and COCO segmentation.

Mask R-CNN

Our approach efficiently detects objects in an image while simultaneously generating a high-quality segmentation mask for each instance.

MobileNetV2: Inverted Residuals and Linear Bottlenecks

In this paper we describe a new mobile architecture, MobileNetV2, that improves the state of the art performance of mobile models on multiple tasks and benchmarks as well as across a spectrum of different model sizes.

MMDetection: Open MMLab Detection Toolbox and Benchmark

In this paper, we introduce the various features of this toolbox.

An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale

While the Transformer architecture has become the de-facto standard for natural language processing tasks, its applications to computer vision remain limited.

PointNet: Deep Learning on Point Sets for 3D Classification and Segmentation

Point cloud is an important type of geometric data structure.

FCOS: Fully Convolutional One-Stage Object Detection

By eliminating the predefined set of anchor boxes, FCOS completely avoids the complicated computation related to anchor boxes such as calculating overlapping during training.

Encoder-Decoder with Atrous Separable Convolution for Semantic Image Segmentation

The former networks are able to encode multi-scale contextual information by probing the incoming features with filters or pooling operations at multiple rates and multiple effective fields-of-view, while the latter networks can capture sharper object boundaries by gradually recovering the spatial information.

Rethinking Atrous Convolution for Semantic Image Segmentation

To handle the problem of segmenting objects at multiple scales, we design modules which employ atrous convolution in cascade or in parallel to capture multi-scale context by adopting multiple atrous rates.

MS COCO

MS COCO

Cityscapes

Cityscapes

KITTI

KITTI

ShapeNet

ShapeNet

ScanNet

ScanNet

ADE20K

ADE20K

NYUv2

NYUv2

DAVIS

DAVIS

SYNTHIA

SYNTHIA

EuroSAT

EuroSAT