AdvKnn: Adversarial Attacks On K-Nearest Neighbor Classifiers With Approximate Gradients

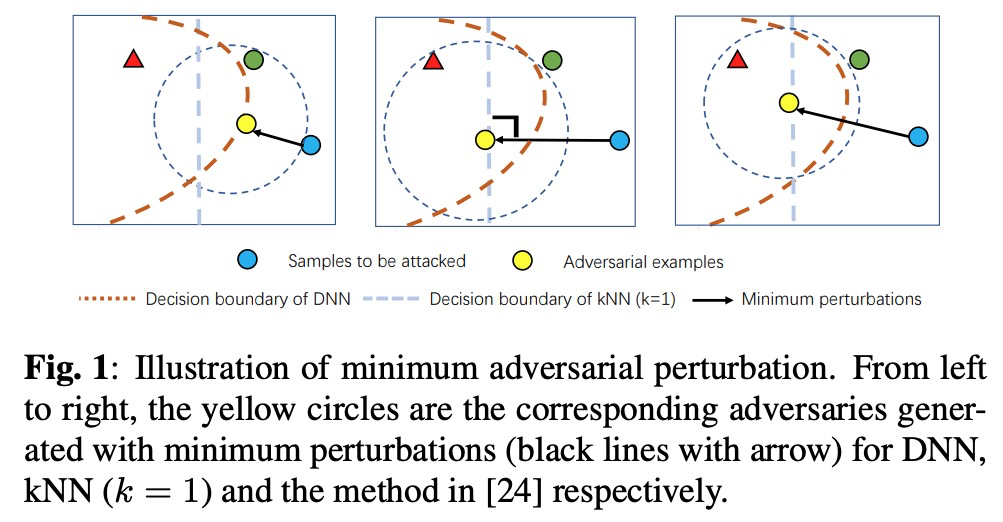

Deep neural networks have been shown to be vulnerable to adversarial examples---maliciously crafted examples that can trigger the target model to misbehave by adding imperceptible perturbations. Existing attack methods for k-nearest neighbor~(kNN) based algorithms either require large perturbations or are not applicable for large k. To handle this problem, this paper proposes a new method called AdvKNN for evaluating the adversarial robustness of kNN-based models. Firstly, we propose a deep kNN block to approximate the output of kNN methods, which is differentiable thus can provide gradients for attacks to cross the decision boundary with small distortions. Second, a new consistency learning for distribution instead of classification is proposed for the effectiveness in distribution based methods. Extensive experimental results indicate that the proposed method significantly outperforms state of the art in terms of attack success rate and the added perturbations.

PDF Abstract

MNIST

MNIST

SVHN

SVHN

Fashion-MNIST

Fashion-MNIST