Debiasing Convolutional Neural Networks via Meta Orthogonalization

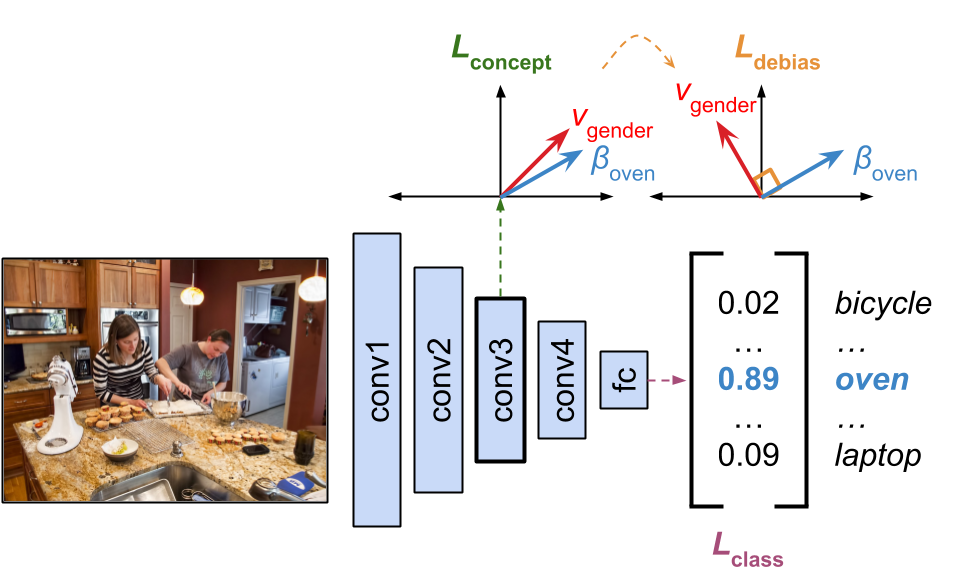

While deep learning models often achieve strong task performance, their successes are hampered by their inability to disentangle spurious correlations from causative factors, such as when they use protected attributes (e.g., race, gender, etc.) to make decisions. In this work, we tackle the problem of debiasing convolutional neural networks (CNNs) in such instances. Building off of existing work on debiasing word embeddings and model interpretability, our Meta Orthogonalization method encourages the CNN representations of different concepts (e.g., gender and class labels) to be orthogonal to one another in activation space while maintaining strong downstream task performance. Through a variety of experiments, we systematically test our method and demonstrate that it significantly mitigates model bias and is competitive against current adversarial debiasing methods.

PDF Abstract

MS COCO

MS COCO

Places

Places