Decompose to Adapt: Cross-domain Object Detection via Feature Disentanglement

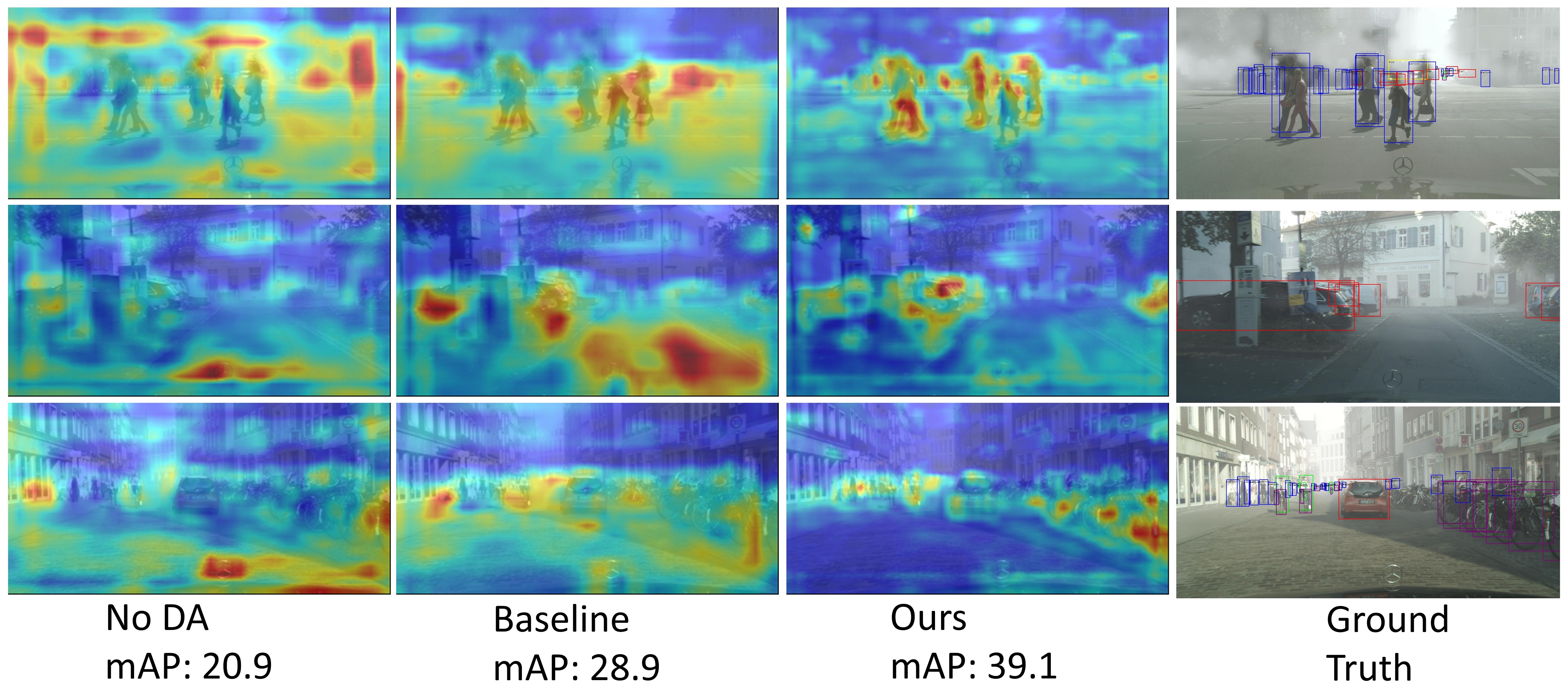

Recent advances in unsupervised domain adaptation (UDA) techniques have witnessed great success in cross-domain computer vision tasks, enhancing the generalization ability of data-driven deep learning architectures by bridging the domain distribution gaps. For the UDA-based cross-domain object detection methods, the majority of them alleviate the domain bias by inducing the domain-invariant feature generation via adversarial learning strategy. However, their domain discriminators have limited classification ability due to the unstable adversarial training process. Therefore, the extracted features induced by them cannot be perfectly domain-invariant and still contain domain-private factors, bringing obstacles to further alleviate the cross-domain discrepancy. To tackle this issue, we design a Domain Disentanglement Faster-RCNN (DDF) to eliminate the source-specific information in the features for detection task learning. Our DDF method facilitates the feature disentanglement at the global and local stages, with a Global Triplet Disentanglement (GTD) module and an Instance Similarity Disentanglement (ISD) module, respectively. By outperforming state-of-the-art methods on four benchmark UDA object detection tasks, our DDF method is demonstrated to be effective with wide applicability.

PDF Abstract

Cityscapes

Cityscapes

KITTI

KITTI

Foggy Cityscapes

Foggy Cityscapes

Sim10k

Sim10k