Multiview Textured Mesh Recovery by Differentiable Rendering

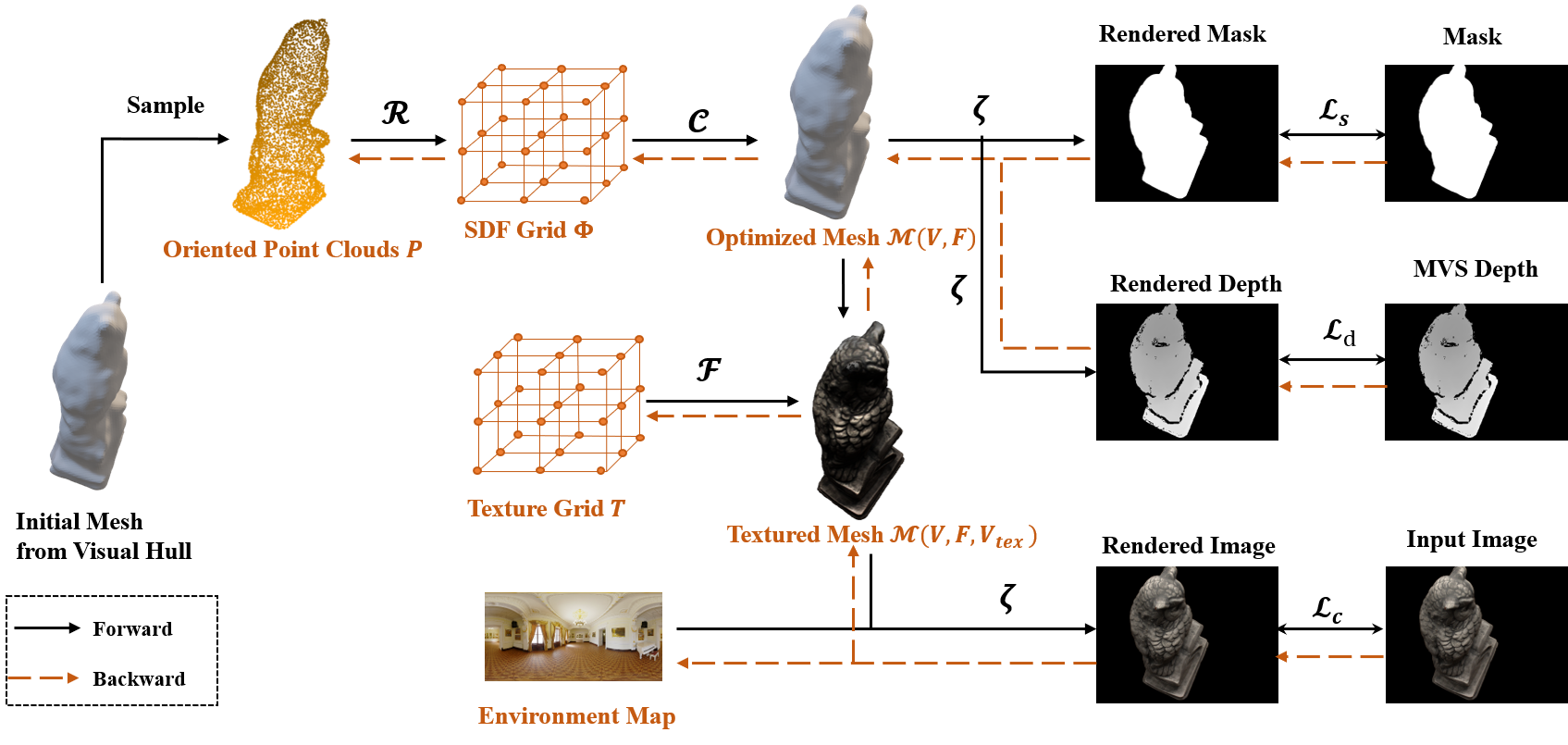

Although multi-layer perceptron (MLP)-based methods have achieved promising results on shape and color recovery through self-supervision, they usually suffer from the heavy computational cost of learning a deep implicit surface representation. Since rendering each pixel requires a forward network inference, synthesizing a whole image is computationally intensive. To tackle these challenges, we propose an effective coarse-to-fine approach to recover a textured mesh from multi-view images. Specifically, a differentiable Poisson solver is employed to represent the object's shape, which produces topology-agnostic and watertight surfaces. To account for depth information, we optimize the shape geometry by minimizing the differences between the rendered mesh and the depth predicted by multi-view stereo. In contrast to implicit neural representations of shape and color, we introduce a physically based inverse rendering scheme to jointly estimate the environment lighting and the object's reflectance, which can render high-resolution images in real time. The texture of the reconstructed mesh is interpolated from a learnable dense texture grid. We have conducted extensive experiments on several multi-view stereo datasets, and the promising results demonstrate the efficacy of the proposed approach. The code is available at https://github.com/l1346792580123/diff.
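To make the texture step concrete, the following is a minimal sketch of interpolating a color from a dense grid. It is not taken from the released code: the abstract does not state the grid's dimensionality or the interpolation scheme, so a 2D grid with bilinear interpolation is assumed here, and the function name `sample_texture` and the `(u, v)` convention are hypothetical. In a learnable setting the grid entries would be optimized parameters; plain Python tuples stand in for them.

```python
from math import floor


def sample_texture(grid, u, v):
    """Bilinearly interpolate an RGB value from a 2D texture grid.

    grid: list of rows, each row a list of (r, g, b) tuples
          (assumed layout, stands in for learnable parameters).
    u, v: normalized coordinates in [0, 1]; u runs along columns, v along rows.
    """
    h, w = len(grid), len(grid[0])

    # Clamp to [0, 1] and map to continuous texel coordinates.
    x = min(max(u, 0.0), 1.0) * (w - 1)
    y = min(max(v, 0.0), 1.0) * (h - 1)

    # Indices of the four surrounding texels, clamped at the border.
    x0, y0 = int(floor(x)), int(floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0

    def lerp(a, b, t):
        # Linear blend of two RGB tuples.
        return tuple((1 - t) * ai + t * bi for ai, bi in zip(a, b))

    top = lerp(grid[y0][x0], grid[y0][x1], fx)
    bottom = lerp(grid[y1][x0], grid[y1][x1], fx)
    return lerp(top, bottom, fy)


# Usage: a 2x2 grid; the center sample is the average of the four corners.
grid = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 1.0, 0.0), (1.0, 1.0, 0.0)],
]
center = sample_texture(grid, 0.5, 0.5)
```

Because the interpolated color is a weighted sum of grid entries, gradients of an image loss flow back to the four neighboring texels, which is what allows such a grid to be learned by differentiable rendering.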

DTU
