Exploring Generative Adversarial Networks for Image-to-Image Translation in STEM Simulation

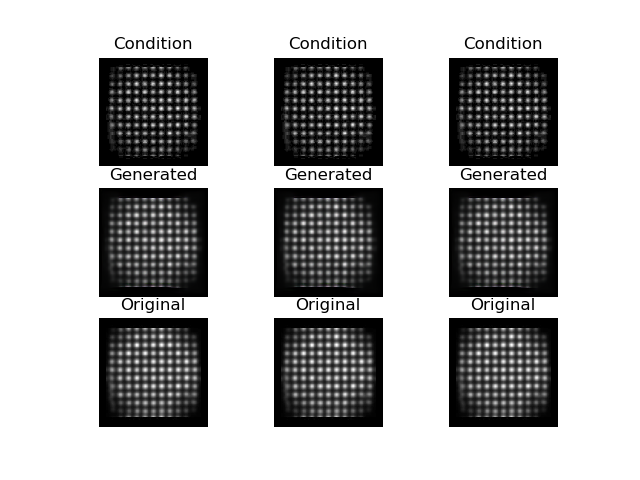

Accurate scanning transmission electron microscopy (STEM) image simulation methods require long computation times, which can make them infeasible for simulating many images. Other simulation methods based on linear imaging models, such as the convolution method, are much faster but too inaccurate to be used in practice. In this paper, we explore deep learning models that attempt to translate a STEM image produced by the convolution method into a prediction of the high-accuracy multislice image. We then compare our results to those of regression methods. We find that the Generative Adversarial Network (GAN) provides the best results and performs at a similar accuracy level to previous regression models on the same dataset. Code and data for this project can be found in this GitHub repository: https://github.com/uw-cmg/GAN-STEM-Conv2MultiSlice.
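
To illustrate what a linear imaging model of this kind looks like, the following is a minimal sketch of a convolution-style STEM image: an object function convolved with the probe intensity. It is not taken from the repository above. The Gaussian probe, the point-scatterer object, the Z^1.7 weighting, and the function names `gaussian_probe` and `convolution_stem` are all assumptions made for illustration.

```python
import numpy as np

def gaussian_probe(size, sigma):
    """Hypothetical Gaussian stand-in for the probe intensity, normalized to unit sum."""
    ax = np.arange(size) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    probe = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return probe / probe.sum()

def convolution_stem(object_fn, probe):
    """Linear imaging model: circular convolution of object and probe via FFT."""
    # Move the probe centre to index (0, 0) so the output is not translated.
    kernel = np.fft.ifftshift(probe)
    spectrum = np.fft.fft2(object_fn) * np.fft.fft2(kernel)
    return np.real(np.fft.ifft2(spectrum))

# Toy object function: two point scatterers weighted by Z**1.7
# (an assumed, commonly quoted approximation for high-angle scattering).
obj = np.zeros((64, 64))
obj[16, 16] = 79 ** 1.7  # heavy atom
obj[40, 48] = 14 ** 1.7  # light atom

image = convolution_stem(obj, gaussian_probe(64, sigma=2.0))
```

Because the model is a single convolution, its cost is a few FFTs per image. In the setting described above, an image like `image` would be the input to the translation model, and the corresponding multislice image would be the target.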
