GATCluster: Self-Supervised Gaussian-Attention Network for Image Clustering

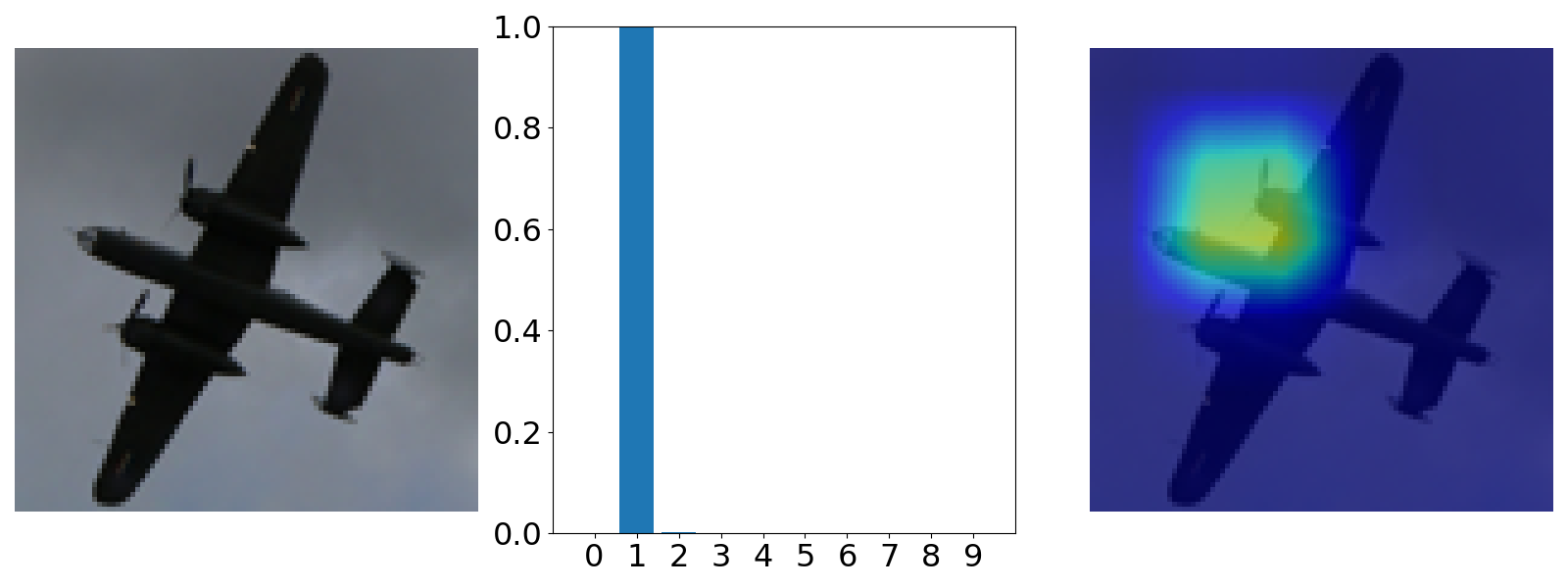

We propose a self-supervised Gaussian ATtention network for image Clustering (GATCluster). Rather than extracting intermediate features first and then performing the traditional clustering algorithm, GATCluster directly outputs semantic cluster labels without further post-processing. Theoretically, we give a Label Feature Theorem to guarantee the learned features are one-hot encoded vectors, and the trivial solutions are avoided. To train the GATCluster in a completely unsupervised manner, we design four self-learning tasks with the constraints of transformation invariance, separability maximization, entropy analysis, and attention mapping. Specifically, the transformation invariance and separability maximization tasks learn the relationships between sample pairs. The entropy analysis task aims to avoid trivial solutions. To capture the object-oriented semantics, we design a self-supervised attention mechanism that includes a parameterized attention module and a soft-attention loss. All the guiding signals for clustering are self-generated during the training process. Moreover, we develop a two-step learning algorithm that is memory-efficient for clustering large-size images. Extensive experiments demonstrate the superiority of our proposed method in comparison with the state-of-the-art image clustering benchmarks. Our code has been made publicly available at https://github.com/niuchuangnn/GATCluster.

PDF Abstract ECCV 2020 PDF ECCV 2020 Abstract

CIFAR-10

CIFAR-10

STL-10

STL-10