HOnnotate: A method for 3D Annotation of Hand and Object Poses

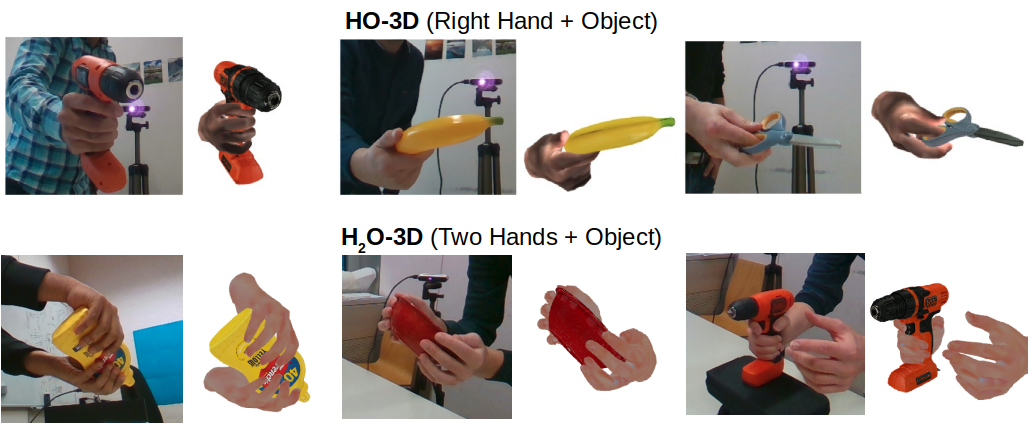

We propose a method for annotating images of a hand manipulating an object with the 3D poses of both the hand and the object, together with a dataset created using this method. Our motivation is the current lack of annotated real images for this problem, as estimating the 3D poses is challenging, mostly because of the mutual occlusions between the hand and the object. To tackle this challenge, we capture sequences with one or several RGB-D cameras and jointly optimize the 3D hand and object poses over all the frames simultaneously. This method allows us to automatically annotate each frame with accurate estimates of the poses, despite large mutual occlusions. With this method, we created HO-3D, the first markerless dataset of color images with 3D annotations for both the hand and object. This dataset is currently made of 77,558 frames, 68 sequences, 10 persons, and 10 objects. Using our dataset, we develop a single RGB image-based method to predict the hand pose when interacting with objects under severe occlusions and show it generalizes to objects not seen in the dataset.

PDF Abstract CVPR 2020 PDF CVPR 2020 Abstract

HO-3D

HO-3D

YCB-Video

YCB-Video

FreiHAND

FreiHAND