It's All in the Head: Representation Knowledge Distillation through Classifier Sharing

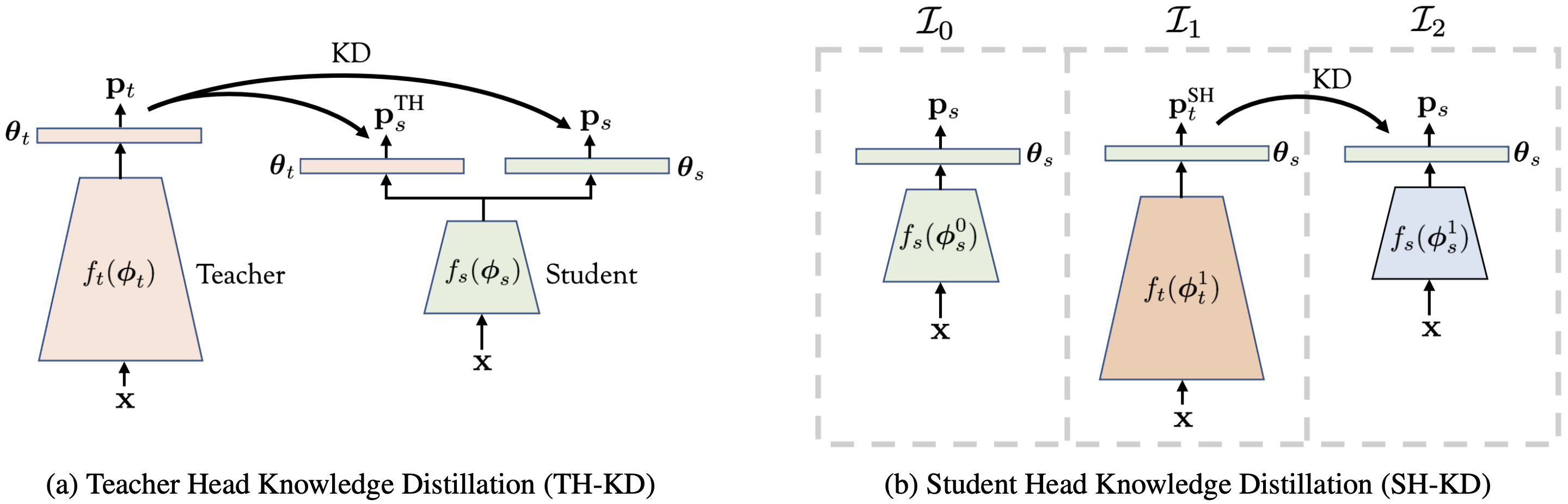

Representation knowledge distillation aims to transfer rich information from one model to another. Common approaches to representation distillation mainly focus on directly minimizing distance metrics between the models' embedding vectors. Such direct methods may be limited in transferring high-order dependencies embedded in the representation vectors, or in handling the capacity gap between the teacher and student models. Moreover, in standard knowledge distillation, the teacher is trained without awareness of the student's characteristics and capacity. In this paper, we explore two mechanisms for enhancing representation distillation using classifier sharing between the teacher and student. We first investigate a simple scheme in which the teacher's classifier is connected to the student backbone, acting as an additional classification head. Then, we propose a student-aware mechanism that tailors the teacher model to a student with limited capacity by training the teacher with a temporary student's head. We analyze and compare these two mechanisms and show their effectiveness on various datasets and tasks, including image classification, fine-grained classification, and face verification. In particular, we achieve state-of-the-art results for face verification on the IJB-C dataset for a MobileFaceNet model: TAR@(FAR=1e-5) = 93.7%. Code is available at https://github.com/Alibaba-MIIL/HeadSharingKD.
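To make the two classifier-sharing mechanisms concrete, here is a minimal per-sample sketch in plain Python of the loss terms involved. It is not the authors' implementation (that lives in the linked repository). It assumes linear classifier heads, cross-entropy against ground-truth labels for the shared-head terms, and a squared-L2 distance as the direct representation term. The function names and the weights `alpha` and `beta` are hypothetical. The sketch computes loss values only. In a real training loop the borrowed head would be frozen (excluded from the optimizer) so that gradients update only the backbone being trained.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]  # rows = classes, columns = embedding dimensions


def linear_head(weights: Matrix, bias: Sequence[float], emb: Sequence[float]) -> List[float]:
    """Linear classifier head: logits = W @ emb + b (assumed head type)."""
    if any(len(row) != len(emb) for row in weights):
        raise ValueError("head input size must match embedding size")
    return [sum(w * x for w, x in zip(row, emb)) + b for row, b in zip(weights, bias)]


def cross_entropy(logits: Sequence[float], label: int) -> float:
    """Numerically stable softmax cross-entropy for a single sample."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def squared_l2(a: Sequence[float], b: Sequence[float]) -> float:
    """Direct representation-distillation term (assumed: squared L2 distance)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def head_sharing_loss(student_emb, teacher_emb, teacher_w, teacher_b, label, alpha=1.0):
    """Mechanism 1 (hypothetical form): the teacher's classifier acts as an
    extra head on the student embedding, added to the direct distance term."""
    direct = squared_l2(student_emb, teacher_emb)
    shared = cross_entropy(linear_head(teacher_w, teacher_b, student_emb), label)
    return direct + alpha * shared


def student_aware_teacher_loss(teacher_emb, teacher_w, teacher_b,
                               student_w, student_b, label, beta=1.0):
    """Mechanism 2 (hypothetical form): while training the teacher, its embedding
    is also classified by a temporary student's head, nudging the teacher toward
    representations a lower-capacity student can use. Requires equal embedding sizes."""
    own = cross_entropy(linear_head(teacher_w, teacher_b, teacher_emb), label)
    via_student_head = cross_entropy(linear_head(student_w, student_b, teacher_emb), label)
    return own + beta * via_student_head


if __name__ == "__main__":
    w = [[1.0, 0.0], [0.0, 1.0]]  # toy 2-class head over 2-D embeddings
    b = [0.0, 0.0]
    print(head_sharing_loss([2.0, 0.0], [1.5, 0.5], w, b, label=0))
    print(student_aware_teacher_loss([2.0, 0.0], w, b, w, b, label=0))
```

Keeping the direct distance term next to the shared-head term in this sketch mirrors the abstract's framing of the teacher's classifier as an *additional* head that enhances, rather than replaces, representation distillation; setting `alpha=0.0` recovers the plain distance-based objective.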


Datasets: CIFAR-100, IJB-C, FoodX-251