Learning Structured Representations of Visual Scenes

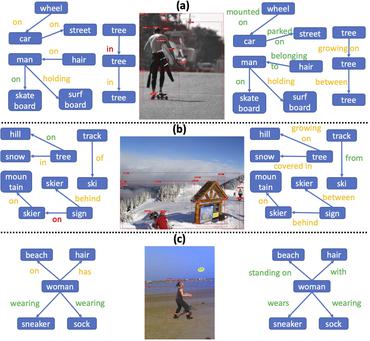

As the intermediate-level representations bridging the two levels, structured representations of visual scenes, such as visual relationships between pairs of objects, have been shown not only to help compositional models learn to reason over those structures but also to make model decisions more interpretable. Nevertheless, these representations receive much less attention than traditional recognition tasks, leaving numerous challenges unsolved. In this thesis, we study how machines can describe the content of an individual image or video using visual relationships as the structured representation. Specifically, we explore how structured representations of visual scenes can be effectively constructed and learned in both the static-image and video settings, with improvements resulting from the incorporation of external knowledge, bias-reducing mechanisms, and enhanced representation models. At the end of this thesis, we also discuss open challenges and limitations to shed light on future directions of structured representation learning for visual scenes.


Visual Question Answering

Visual Genome

Conceptual Captions

HICO-DET

VRD

V-COCO

CAD-120

VidHOI
