Scene Graph Generation

111 papers with code • 5 benchmarks • 7 datasets

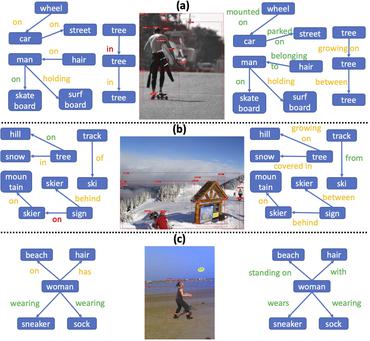

A scene graph is a structured representation of an image in which nodes correspond to object bounding boxes with their object categories, and edges correspond to pairwise relationships between objects. The task of Scene Graph Generation is to generate a visually grounded scene graph that most accurately corresponds to an image.
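The definition above maps naturally onto a small data structure. The following is a minimal sketch, not taken from any of the listed papers: the class names `ObjectNode` and `SceneGraph`, the `(x_min, y_min, x_max, y_max)` box convention, and the example coordinates are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class ObjectNode:
    """A detected object: a category label plus a bounding box (hypothetical type)."""
    label: str
    box: Tuple[float, float, float, float]  # assumed (x_min, y_min, x_max, y_max) in pixels


@dataclass
class SceneGraph:
    """Nodes are objects; each edge is (subject index, predicate, object index)."""
    nodes: List[ObjectNode] = field(default_factory=list)
    edges: List[Tuple[int, str, int]] = field(default_factory=list)

    def add_object(self, label, box):
        """Add a node and return its index."""
        self.nodes.append(ObjectNode(label, box))
        return len(self.nodes) - 1

    def add_relation(self, subj, predicate, obj):
        """Add a directed edge between two existing nodes."""
        if not (0 <= subj < len(self.nodes) and 0 <= obj < len(self.nodes)):
            raise IndexError("relation refers to an unknown node")
        self.edges.append((subj, predicate, obj))

    def triplets(self):
        """Return the graph as (subject label, predicate, object label) triplets."""
        return [(self.nodes[s].label, p, self.nodes[o].label) for s, p, o in self.edges]


# Illustrative values only; the coordinates are made up.
graph = SceneGraph()
human = graph.add_object("human", (40.0, 60.0, 120.0, 300.0))
beach = graph.add_object("beach", (0.0, 200.0, 640.0, 480.0))
graph.add_relation(human, "walk on", beach)
print(graph.triplets())  # [('human', 'walk on', 'beach')]
```

Storing edges as index triplets keeps multiple instances of the same category distinct, which matters when an image contains, for example, several cups.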

Most implemented papers

Learning to Compose Dynamic Tree Structures for Visual Contexts

We propose to compose dynamic tree structures that place the objects in an image into a visual context, helping visual reasoning tasks such as scene graph generation and visual Q&A.

Unbiased Scene Graph Generation from Biased Training

Today's scene graph generation (SGG) task is still far from practical, mainly due to the severe training bias, e.g., collapsing diverse "human walk on / sit on / lay on beach" into "human on beach".

Scene Graph Generation by Iterative Message Passing

In this work, we explicitly model the objects and their relationships using scene graphs, a visually-grounded graphical structure of an image.

Structured Sparse R-CNN for Direct Scene Graph Generation

The key to our method is a set of learnable triplet queries and a structured triplet detector which could be jointly optimized from the training set in an end-to-end manner.

Pixels to Graphs by Associative Embedding

Graphs are a useful abstraction of image content.

Graph R-CNN for Scene Graph Generation

We propose a novel scene graph generation model called Graph R-CNN that is both effective and efficient at detecting objects and their relations in images.

LinkNet: Relational Embedding for Scene Graph

In this paper, we present a method that improves scene graph generation by explicitly modeling inter-dependency among all object instances.

Graphical Contrastive Losses for Scene Graph Parsing

The first, Entity Instance Confusion, occurs when the model confuses multiple instances of the same type of entity (e.g., multiple cups).

Knowledge-Embedded Routing Network for Scene Graph Generation

More specifically, we show that the statistical correlations between objects appearing in images and their relationships can be explicitly represented by a structured knowledge graph, and a routing mechanism is learned to propagate messages through the graph to explore their interactions.
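To make the idea of statistical correlations between object pairs and their relationships concrete, here is a minimal sketch that estimates how often each predicate occurs for a given (subject, object) category pair. It is not the routing network from the paper; the function name `predicate_statistics` and the toy triplets are hypothetical, and simple counting is just one assumed way to obtain such statistics.

```python
from collections import Counter, defaultdict


def predicate_statistics(triplets):
    """Estimate P(predicate | subject category, object category) by counting."""
    counts = defaultdict(Counter)
    for subj, pred, obj in triplets:
        counts[(subj, obj)][pred] += 1
    stats = {}
    for pair, counter in counts.items():
        total = sum(counter.values())
        stats[pair] = {pred: n / total for pred, n in counter.items()}
    return stats


# Toy annotations, invented for illustration only.
toy_triplets = [
    ("human", "on", "beach"),
    ("human", "on", "beach"),
    ("human", "walk on", "beach"),
    ("human", "sit on", "beach"),
    ("cup", "on", "table"),
]
stats = predicate_statistics(toy_triplets)
print(stats[("human", "beach")])  # {'on': 0.5, 'walk on': 0.25, 'sit on': 0.25}
```

A table like `stats` could serve as edge weights in a category-level graph, although how such weights are actually used depends on the specific model.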

Bipartite Graph Network with Adaptive Message Passing for Unbiased Scene Graph Generation

Scene graph generation is an important visual understanding task with a broad range of vision applications.

Datasets

MS COCO

Visual Genome

VRD

3DSSG

3RScan

PSG Dataset

4D-OR