Multimodal Industrial Anomaly Detection via Hybrid Fusion

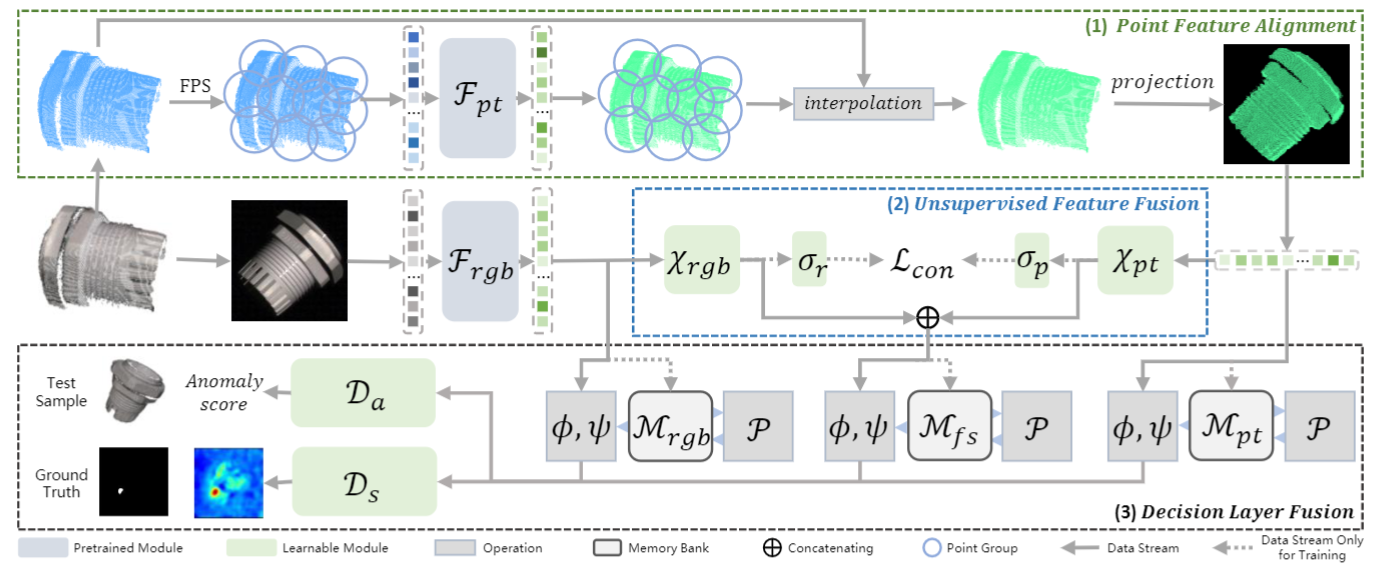

2D-based industrial anomaly detection has been widely studied; however, multimodal industrial anomaly detection based on 3D point clouds and RGB images remains largely unexplored. Existing multimodal industrial anomaly detection methods directly concatenate the multimodal features, which causes strong interference between features and harms detection performance. In this paper, we propose Multi-3D-Memory (M3DM), a novel multimodal anomaly detection method with a hybrid fusion scheme. First, we design an unsupervised feature fusion with patch-wise contrastive learning to encourage interaction between features of different modalities. Second, we use a decision layer fusion with multiple memory banks to avoid loss of information, and additional novelty classifiers to make the final decision. We further propose a point feature alignment operation to better align the point cloud and RGB features. Extensive experiments show that our multimodal industrial anomaly detection model outperforms the state-of-the-art (SOTA) methods in both detection and segmentation precision on the MVTec-3D AD dataset. Code is available at https://github.com/nomewang/M3DM.
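To make the idea of patch-wise contrastive learning more concrete, the following is a minimal sketch, not the authors' implementation. It assumes an InfoNCE-style objective in which the RGB feature and the point cloud feature at the same patch position form a positive pair, and features at all other positions in the same sample act as negatives. The function name `patchwise_contrastive_loss`, the `temperature` parameter, and the use of cosine similarity are illustrative assumptions; the exact loss used by M3DM may differ, so consult the paper and the linked repository for the real formulation.

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def l2_normalize(v):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(dot(v, v))
    return [a / norm for a in v] if norm > 0 else list(v)


def patchwise_contrastive_loss(rgb_patches, point_patches, temperature=0.1):
    """InfoNCE-style loss over the patches of one sample.

    Assumption (hypothetical, for illustration only): rgb_patches[i] and
    point_patches[i] describe the same patch position and form the positive
    pair; every other point cloud patch is treated as a negative.
    """
    if not rgb_patches or len(rgb_patches) != len(point_patches):
        raise ValueError("need the same non-zero number of patches per modality")

    rgb = [l2_normalize(p) for p in rgb_patches]
    pts = [l2_normalize(p) for p in point_patches]

    total = 0.0
    for i, r in enumerate(rgb):
        # Cosine similarity of RGB patch i with every point cloud patch.
        logits = [dot(r, q) / temperature for q in pts]
        # Numerically stable log-sum-exp for the softmax denominator.
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(x - m) for x in logits))
        # Negative log-probability of picking the matching patch.
        total += log_denom - logits[i]
    return total / len(rgb)


# Matching patch positions agree across modalities: the loss is small.
aligned = patchwise_contrastive_loss([[1.0, 0.0], [0.0, 1.0]],
                                     [[1.0, 0.0], [0.0, 1.0]])
# Patch positions are swapped in one modality: the loss is large.
swapped = patchwise_contrastive_loss([[1.0, 0.0], [0.0, 1.0]],
                                     [[0.0, 1.0], [1.0, 0.0]])
print(aligned < swapped)
```

Minimizing a loss of this kind pulls the two modalities' features for the same patch together while pushing apart features from different patches, which is one way to encourage the cross-modal interaction the abstract describes without requiring anomaly labels.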

CVPR 2023

Datasets

Results from the Paper

Ranked #3 on RGB+3D Anomaly Detection and Segmentation on MVTEC 3D-AD (using extra training data)

MVTEC 3D-AD
