NAPA: Neural Art Human Pose Amplifier

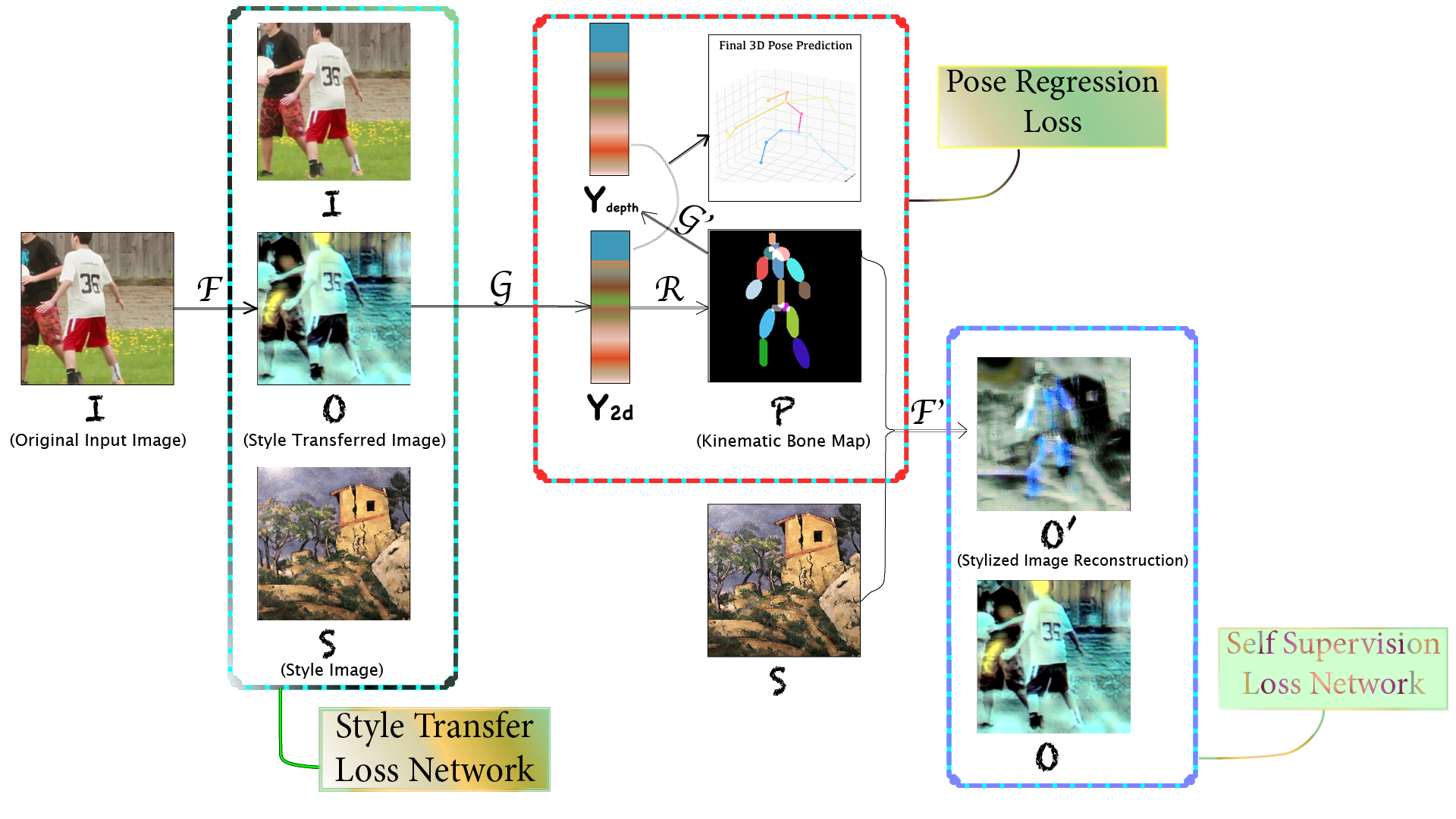

This is the project report for CSCI-GA.2271-001. We target human pose estimation in artistic images. To this end, we design an end-to-end system that uses neural style transfer for pose regression. We collect a 277-style set for arbitrary style transfer and build a 281-image artistic test set. We run pose regression directly on the test set and show promising results. For pose regression, we propose a 2D-induced bone map from which pose is lifted. To help this lifting, we additionally annotate pseudo-3D labels for the full in-the-wild MPII dataset. Further, we append a second style transfer as self-supervision to improve 2D pose estimation. We perform extensive ablation studies to analyze the introduced features. We also compare end-to-end training with per-style training and point out the trade-off between style transfer and pose regression. Lastly, we generalize our model to a real-world human dataset and show its potential as a generic pose model. We explain the theoretical foundation in the Appendix. We release the code, data, and video; the code is available at https://github.com/strawberryfg/NAPA-NST-HPE.
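The abstract does not say which arbitrary style transfer method the system uses. As an illustration only, one widely used arbitrary style transfer technique is adaptive instance normalization (AdaIN, Huang and Belongie, 2017), which re-normalizes content features so that each channel takes on the mean and standard deviation of the style features. The sketch below is an assumption, not NAPA's implementation: `adain` is a hypothetical helper, it operates on plain NumPy feature maps of shape (C, H, W), and a complete pipeline would also need a pretrained encoder (such as VGG) to produce the features and a learned decoder to map the result back to an image.

```python
import numpy as np


def adain(content: np.ndarray, style: np.ndarray, eps: float = 1e-5) -> np.ndarray:
    """Hypothetical AdaIN sketch (illustrative, not the NAPA code).

    content, style: feature maps of shape (C, H, W); spatial sizes may differ,
    but the channel count must match.
    Returns the content features with each channel's mean/std replaced by the
    corresponding style channel's statistics.
    """
    if content.shape[0] != style.shape[0]:
        raise ValueError("content and style must have the same number of channels")

    # Per-channel statistics over the spatial dimensions (H, W).
    c_mean = content.mean(axis=(1, 2), keepdims=True)
    c_std = np.sqrt(content.var(axis=(1, 2), keepdims=True) + eps)
    s_mean = style.mean(axis=(1, 2), keepdims=True)
    s_std = np.sqrt(style.var(axis=(1, 2), keepdims=True) + eps)

    # Whiten the content per channel, then re-color it with the style statistics.
    normalized = (content - c_mean) / c_std
    return normalized * s_std + s_mean


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    content = rng.normal(0.0, 1.0, size=(4, 8, 8))
    style = rng.normal(3.0, 2.0, size=(4, 16, 16))
    out = adain(content, style)
    # The output keeps the content's spatial layout but the style's channel statistics.
    print(out.shape, out.mean(axis=(1, 2)), style.mean(axis=(1, 2)))
```

Because only first- and second-order channel statistics are swapped, a single trained decoder can in principle render any style at test time, which is what makes a method like this suitable for a large style set such as the 277 styles collected here.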
