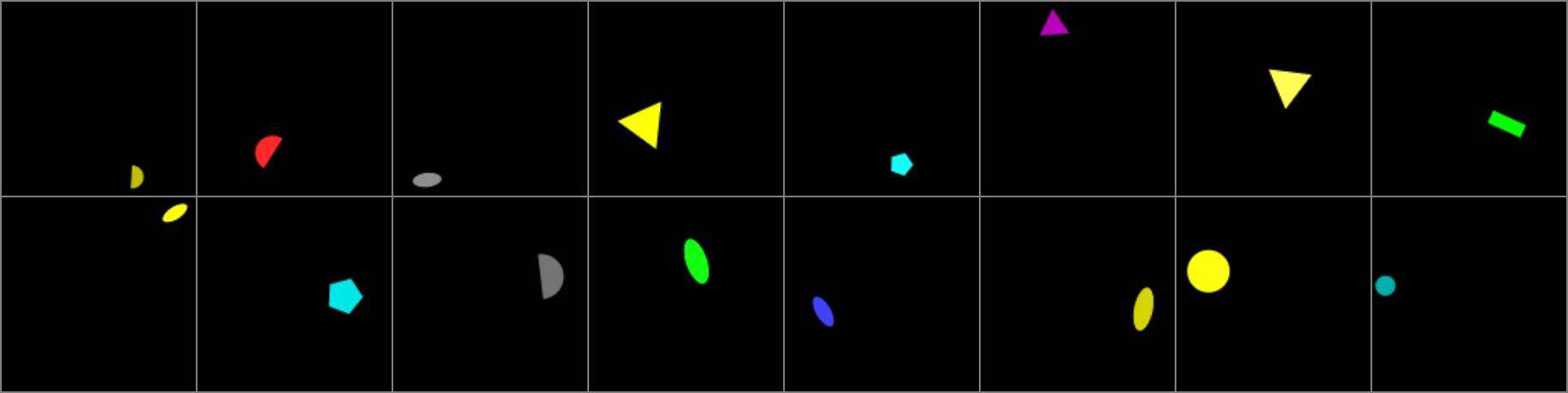

ShapeWorld - A new test methodology for multimodal language understanding

We introduce a novel framework for evaluating multimodal deep learning models with respect to their language understanding and generalization abilities. In this approach, artificial data is automatically generated according to the experimenter's specifications. The content of the data, both during training and evaluation, can be controlled in detail, which enables tasks to be created that require true generalization abilities, in particular the combination of previously introduced concepts in novel ways. We demonstrate the potential of our methodology by evaluating various visual question answering models on four different tasks, and show how our framework gives us detailed insights into their capabilities and limitations. By open-sourcing our framework, we hope to stimulate progress in the field of multimodal language understanding.
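The idea of controlling data content so that evaluation requires novel combinations of known concepts can be illustrated with a toy sketch. This is not the ShapeWorld API: the names `SHAPES`, `COLORS`, `HELD_OUT`, `generate_example`, and `generate_dataset`, as well as the single-object worlds and the caption template, are hypothetical and chosen only for illustration. The sketch assumes a caption-agreement setup in which a model sees a world and a caption and must decide whether the caption is true.

```python
import itertools
import random

# Hypothetical attribute vocabulary for a toy single-object world.
SHAPES = ["square", "circle", "triangle"]
COLORS = ["red", "green", "blue"]

# Hypothetical held-out shape/color combinations: these never appear as
# worlds during training, only at evaluation time. Each shape and each
# color is still seen in training, just not in these pairings.
HELD_OUT = {("square", "red"), ("circle", "green")}

ALL_COMBOS = set(itertools.product(SHAPES, COLORS))
TRAIN_COMBOS = sorted(ALL_COMBOS - HELD_OUT)
TEST_COMBOS = sorted(HELD_OUT)


def generate_example(combos, rng):
    """Return (world, caption, agreement) for one toy world."""
    shape, color = rng.choice(combos)
    world = {"shape": shape, "color": color}
    if rng.random() < 0.5:
        # Caption that correctly describes the world.
        cap_color = color
    else:
        # Caption that is false because it names a different color.
        cap_color = rng.choice([c for c in COLORS if c != color])
    caption = f"There is a {cap_color} {shape}."
    agreement = cap_color == color
    return world, caption, agreement


def generate_dataset(combos, size, seed):
    """Generate a reproducible list of examples from the allowed combos."""
    rng = random.Random(seed)
    return [generate_example(combos, rng) for _ in range(size)]


train = generate_dataset(TRAIN_COMBOS, size=100, seed=0)
test = generate_dataset(TEST_COMBOS, size=20, seed=1)
```

In this sketch only the worlds are restricted: a false training caption may still mention a held-out pairing such as "red square". A stricter split would filter captions as well, at the cost of changing the distribution of false captions.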

Datasets

Introduced in the Paper:

ShapeWorld

Used in the Paper:

Visual Question Answering

Arcade Learning Environment

bAbI

SHAPES