Unpaired Depth Super-Resolution in the Wild

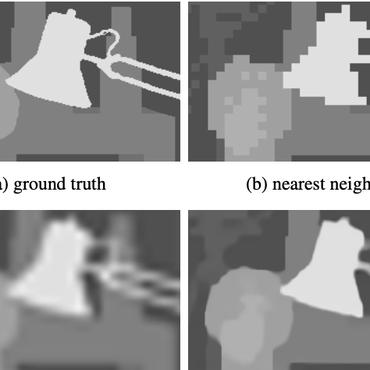

Depth maps captured with commodity sensors are often of low quality and resolution, and must be enhanced before they can be used in many applications. State-of-the-art data-driven methods for depth map super-resolution rely on registered pairs of low- and high-resolution depth maps of the same scenes. Acquiring such paired real-world data requires specialized setups. The alternative, generating low-resolution maps from high-resolution maps by subsampling, adding noise, and applying other artificial degradations, does not fully capture the characteristics of real-world low-resolution images. As a consequence, supervised learning methods trained on such artificial paired data may not perform well on real-world low-resolution inputs. We consider an approach to depth super-resolution based on learning from unpaired data. While many techniques for unpaired image-to-image translation have been proposed, most fail to fill holes effectively or to reconstruct accurate surfaces from depth maps. We propose an unpaired learning method for depth super-resolution that combines a learnable degradation model with an enhancement component and uses surface normal estimates as features to produce more accurate depth maps. We also propose a benchmark for unpaired depth super-resolution (SR) and demonstrate that our method outperforms existing unpaired methods and performs on par with paired methods.
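To make the artificial degradation mentioned above concrete, the following is a minimal NumPy sketch of how a low-resolution depth map might be synthesized from a high-resolution one by subsampling, adding noise, and punching holes. It is an illustration only, not the learnable degradation model proposed in the paper: the function name `degrade_depth`, its parameters, the Gaussian noise model, and the convention that zero marks a missing measurement are all assumptions made for this example.

```python
import numpy as np


def degrade_depth(depth_hr, factor=4, noise_std=0.01, hole_prob=0.05, seed=0):
    """Hypothetical hand-crafted degradation: subsample, add noise, add holes.

    depth_hr  : 2D array of depth values (e.g. in meters).
    factor    : integer subsampling factor (assumed, not from the paper).
    noise_std : standard deviation of additive Gaussian noise (assumed).
    hole_prob : per-pixel probability of a missing measurement (assumed).
    """
    rng = np.random.default_rng(seed)

    # Nearest-neighbor subsampling: keep every `factor`-th pixel.
    depth_lr = depth_hr[::factor, ::factor].astype(np.float64)

    # Additive Gaussian noise as a crude stand-in for sensor noise.
    depth_lr = depth_lr + rng.normal(0.0, noise_std, size=depth_lr.shape)

    # Depth cannot be negative; clip after adding noise.
    depth_lr = np.clip(depth_lr, 0.0, None)

    # Random holes, encoded here as zeros (a common but assumed convention).
    holes = rng.random(depth_lr.shape) < hole_prob
    depth_lr[holes] = 0.0
    return depth_lr


# Usage: a synthetic 64x64 slanted plane degraded to 16x16.
depth_hr = np.fromfunction(lambda y, x: 1.0 + 0.01 * x, (64, 64))
depth_lr = degrade_depth(depth_hr)
```

A fixed recipe like this is exactly what the abstract argues against: real sensor noise and holes depend on scene geometry and materials, which independent per-pixel noise and random dropout do not model.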


ScanNet


InteriorNet
