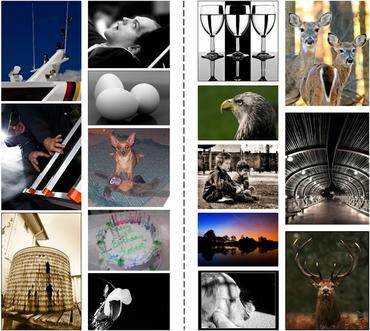

Image Quality Assessment

220 papers with code • 3 benchmarks • 12 datasets

Libraries

Use these libraries to find Image Quality Assessment models and implementations

Datasets

Subtasks

Latest papers with no code

Adaptive Mixed-Scale Feature Fusion Network for Blind AI-Generated Image Quality Assessment

Specifically, inspired by the characteristics of the human visual system and motivated by the observation that "visual quality" and "authenticity" are characterized by both local and global aspects, AMFF-Net scales the image up and down and takes the scaled images and original-sized image as the inputs to obtain multi-scale features.
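The multi-scale input step described above can be illustrated with a minimal sketch. It is not AMFF-Net's implementation: the scale factors (0.5, 1.0, 2.0), the nearest-neighbor resampling, and the helper names `resize_nearest` and `multi_scale_inputs` are all assumptions chosen for illustration, and the feature extraction and fusion stages are omitted.

```python
from typing import List, Sequence

Image = List[List[float]]  # grayscale image stored as rows of pixel values


def resize_nearest(img: Image, scale: float) -> Image:
    """Resize a 2-D image by `scale` using nearest-neighbor sampling."""
    src_h, src_w = len(img), len(img[0])
    dst_h = max(1, round(src_h * scale))
    dst_w = max(1, round(src_w * scale))
    return [
        [
            # Map each output pixel back to its nearest source pixel.
            img[min(src_h - 1, int(r / scale))][min(src_w - 1, int(c / scale))]
            for c in range(dst_w)
        ]
        for r in range(dst_h)
    ]


def multi_scale_inputs(
    img: Image, factors: Sequence[float] = (0.5, 1.0, 2.0)
) -> List[Image]:
    """Return one version of `img` per factor: scaled down, original, scaled up.

    The factors are hypothetical; the source only says the image is
    scaled up and down and used together with the original-sized image.
    """
    return [img if f == 1.0 else resize_nearest(img, f) for f in factors]


# A tiny 4x4 test image whose pixel value encodes its position.
image = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
scales = multi_scale_inputs(image)
shapes = [(len(s), len(s[0])) for s in scales]
print(shapes)
```

In a real model each of the three images would be passed through a feature extractor, and the resulting features would then be fused.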

Multi-Modal Prompt Learning on Blind Image Quality Assessment

Image Quality Assessment (IQA) models benefit significantly from semantic information, which allows them to treat different types of objects distinctly.

CrossScore: Towards Multi-View Image Evaluation and Scoring

We introduce a novel cross-reference image quality assessment method that effectively fills the gap in the image assessment landscape, complementing the array of established evaluation schemes -- ranging from full-reference metrics like SSIM, no-reference metrics such as NIQE, to general-reference metrics including FID, and multi-modal-reference metrics, e.g., CLIPScore.

PCQA: A Strong Baseline for AIGC Quality Assessment Based on Prompt Condition

It is essential to build an effective quality assessment framework to provide a quantifiable evaluation of different images or videos based on the AIGC technologies.

Beyond Score Changes: Adversarial Attack on No-Reference Image Quality Assessment from Two Perspectives

Meanwhile, it is important to note that correlation measures, such as rank correlation, play a significant role in NR-IQA tasks.
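To make the role of rank correlation concrete, the sketch below computes the Spearman rank-order correlation coefficient (SROCC) between predicted quality scores and subjective scores. It is a generic illustration, not the paper's attack or evaluation code: the helper names and all score values are hypothetical, and the "perturbed" predictions simply swap two values to show how a ranking change lowers SROCC.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Assign 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson linear correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def srocc(pred: Sequence[float], target: Sequence[float]) -> float:
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(pred), average_ranks(target))


# Hypothetical subjective scores and model predictions for five images.
mos = [1.0, 2.0, 3.0, 4.0, 5.0]
clean_pred = [0.9, 2.1, 2.9, 4.2, 4.8]      # same ordering as mos
perturbed_pred = [0.9, 4.2, 2.9, 2.1, 4.8]  # two predictions swapped

srocc_clean = srocc(clean_pred, mos)
srocc_perturbed = srocc(perturbed_pred, mos)
print(srocc_clean, srocc_perturbed)
```

Both prediction lists contain the same set of values, so their mean score is identical; only the ordering differs, which is exactly what a rank correlation measures.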

AIGIQA-20K: A Large Database for AI-Generated Image Quality Assessment

With the rapid advancements in AI-Generated Content (AIGC), AI-Generated Images (AIGIs) have been widely applied in entertainment, education, and social media.

AIGCOIQA2024: Perceptual Quality Assessment of AI Generated Omnidirectional Images

Finally, we conduct a benchmark experiment to evaluate the performance of state-of-the-art IQA models on our database.

Bringing Textual Prompt to AI-Generated Image Quality Assessment

To solve this problem, we introduce IP-IQA (AGIs Quality Assessment via Image and Prompt), a multimodal framework for AGIQA via corresponding image and prompt incorporation.

An edge detection-based deep learning approach for tear meniscus height measurement

For improved segmentation of the pupil and tear meniscus areas, the convolutional neural network Inceptionv3 was first implemented as an image quality assessment model, effectively identifying higher-quality images with an accuracy of 98.224%.

Diffusion-based Iterative Counterfactual Explanations for Fetal Ultrasound Image Quality Assessment

Obstetric ultrasound image quality is crucial for accurate diagnosis and monitoring of fetal health.

CSIQ

KonIQ-10k

MSU NR VQA Database

MSU FR VQA Database

TID2013

PIQ23

Hephaestus
