Skip Connection Blocks

Ghost Bottleneck

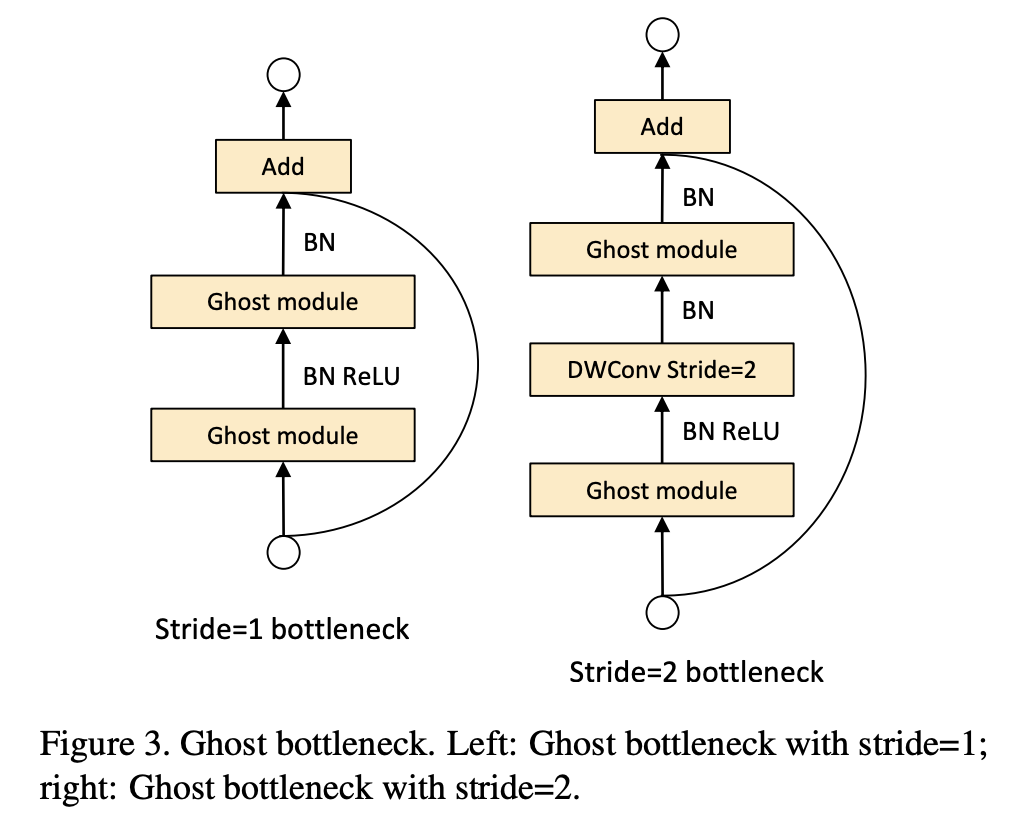

Introduced by Han et al. in GhostNet: More Features from Cheap Operations

A Ghost bottleneck is a skip connection block similar to the basic residual block in ResNet, which integrates several convolutional layers and a shortcut. Instead of plain convolutions, it stacks two Ghost modules. It was proposed as part of the GhostNet CNN architecture.

The first Ghost module acts as an expansion layer that increases the number of channels. The ratio of its output channels to its input channels is called the expansion ratio. The second Ghost module reduces the number of channels to match the shortcut path. The shortcut then connects the input of the first Ghost module to the output of the second. Batch normalization (BN) and a ReLU nonlinearity are applied after each layer, except that ReLU is not used after the second Ghost module, as suggested by MobileNetV2.

The block described above is the stride=1 case. For stride=2, the shortcut path is implemented by a downsampling layer, and a depthwise convolution with stride=2 is inserted between the two Ghost modules. In practice, the primary convolution in each Ghost module here is a pointwise convolution, chosen for its efficiency.
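The layer ordering described above can be sketched as a small shape tracer in plain Python. This is an illustrative sketch, not the authors' implementation: the names `Layer`, `ghost_bottleneck_plan`, and `trace_shape` are hypothetical, the hidden width is assumed to be the input width times the expansion ratio (rounded), and the stride=1 case is assumed to require equal input and output channels so that the shortcut is an identity. The source does not specify the internals of the stride=2 downsampling shortcut, so it is modeled as a single opaque layer.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Layer:
    name: str
    out_channels: int
    stride: int = 1
    relu: bool = False  # whether a ReLU follows the layer (BN is not tracked here)


def ghost_bottleneck_plan(in_ch: int, out_ch: int, expansion_ratio: float,
                          stride: int = 1) -> Tuple[List[Layer], List[Layer]]:
    """Return (main_path, shortcut_path) for one Ghost bottleneck."""
    if stride not in (1, 2):
        raise ValueError("stride must be 1 or 2")
    if stride == 1 and in_ch != out_ch:
        # Simplification of this sketch: identity shortcut needs matching channels.
        raise ValueError("this sketch requires in_ch == out_ch when stride is 1")

    hidden = int(round(in_ch * expansion_ratio))  # assumed rounding rule

    # First Ghost module: expansion, followed by ReLU.
    main = [Layer("ghost_module_expand", hidden, relu=True)]
    if stride == 2:
        # Depthwise conv keeps the channel count and halves the spatial size.
        # relu=True follows the text's "after each layer" literally; an assumption.
        main.append(Layer("depthwise_conv", hidden, stride=2, relu=True))
    # Second Ghost module: reduction, with no ReLU afterwards.
    main.append(Layer("ghost_module_project", out_ch, relu=False))

    # Shortcut: identity (no layers) for stride 1, one downsampling layer for stride 2.
    shortcut = [] if stride == 1 else [Layer("shortcut_downsample", out_ch, stride=2)]
    return main, shortcut


def trace_shape(shape: Tuple[int, int, int], layers: List[Layer]) -> Tuple[int, int, int]:
    """Propagate a (channels, height, width) shape through a list of layers."""
    c, h, w = shape
    for layer in layers:
        c = layer.out_channels
        h = -(-h // layer.stride)  # ceiling division, assuming "same"-style padding
        w = -(-w // layer.stride)
    return c, h, w


if __name__ == "__main__":
    main_path, shortcut_path = ghost_bottleneck_plan(16, 24, expansion_ratio=3, stride=2)
    x = (16, 112, 112)
    # Both paths must produce the same shape so they can be added elementwise.
    print(trace_shape(x, main_path), trace_shape(x, shortcut_path))
```

Tracing both paths makes the key constraint visible: the second Ghost module and the shortcut must end at the same channel count and spatial size, otherwise the residual addition is not defined.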

Source: GhostNet: More Features from Cheap Operations

Tasks

| Task | Papers | Share |
|---|---|---|
| Image Classification | 8 | 26.67% |
| Object Detection | 2 | 6.67% |
| Semantic Segmentation | 2 | 6.67% |
| Acoustic Scene Classification | 1 | 3.33% |
| Scene Classification | 1 | 3.33% |
| Speech Separation | 1 | 3.33% |
| Time Series Analysis | 1 | 3.33% |
| Management | 1 | 3.33% |
| Model Compression | 1 | 3.33% |

Components

| Component | Type |
|---|---|
| Batch Normalization | Normalization |
| Depthwise Separable Convolution | Convolutions |
| Ghost Module | Image Model Blocks |
| ReLU | Activation Functions |
| Residual Connection | Skip Connections |