Adapter is All You Need for Tuning Visual Tasks

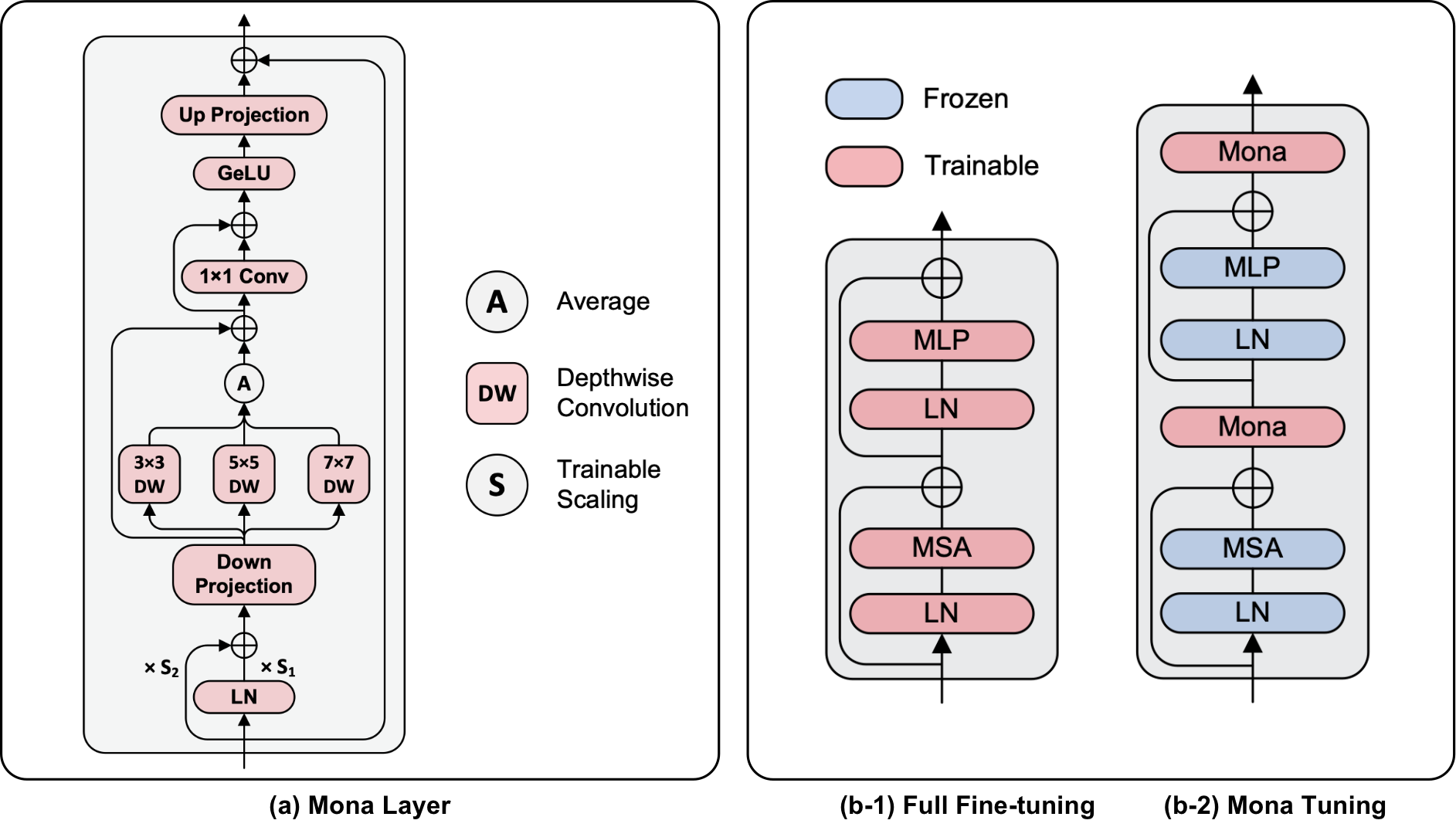

Pre-training and fine-tuning can improve transfer efficiency and performance on visual tasks. Recent delta-tuning methods provide more options for visual classification tasks. Despite their success, existing visual delta-tuning methods fail to surpass full fine-tuning on challenging tasks such as instance segmentation and semantic segmentation. To find a competitive alternative to full fine-tuning, we propose Multi-cognitive Visual Adapter (Mona) tuning, a novel adapter-based tuning method. First, we introduce multiple vision-friendly filters into the adapter to enhance its ability to process visual signals, whereas previous methods mainly rely on language-friendly linear filters. Second, we add a scaled normalization layer to the adapter to regulate the distribution of the input features passed to the visual filters. To demonstrate the practicality and generality of Mona, we conduct experiments on multiple representative visual tasks, including instance segmentation on COCO, semantic segmentation on ADE20K, object detection on Pascal VOC, and image classification on several common datasets. The results show that Mona surpasses full fine-tuning on all of these tasks and is the only delta-tuning method that outperforms full fine-tuning on instance segmentation and semantic segmentation. For example, Mona achieves a 1% performance gain over full fine-tuning on the COCO dataset. Overall, the results suggest that Mona-tuning is better suited than full fine-tuning to retaining and exploiting the capabilities of pre-trained models. The code will be released at https://github.com/Leiyi-Hu/mona.
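To make the two design ideas concrete, the following is a minimal PyTorch sketch of an adapter that combines a scaled normalization layer with several convolutional filters. It is an illustration under stated assumptions, not the released Mona code: the class name `MultiFilterAdapter`, the bottleneck width, the kernel sizes (3, 5, 7), the use of depthwise convolutions, the exact form of the scaling, and the zero-initialized output projection are all hypothetical choices that the abstract does not specify.

```python
import torch
import torch.nn as nn


class MultiFilterAdapter(nn.Module):
    """Hypothetical adapter sketch; NOT the official Mona implementation."""

    def __init__(self, dim, bottleneck=64, kernel_sizes=(3, 5, 7)):
        super().__init__()
        # Scaled normalization (assumed form): learnable per-channel weights
        # on the normalized features and on the raw input.
        self.norm = nn.LayerNorm(dim)
        self.s_norm = nn.Parameter(torch.ones(dim))
        self.s_skip = nn.Parameter(torch.ones(dim))
        # Linear down-projection into a narrow bottleneck.
        self.down = nn.Linear(dim, bottleneck)
        # Several depthwise 2D filters with different receptive fields
        # (kernel sizes are an assumption made for illustration).
        self.filters = nn.ModuleList([
            nn.Conv2d(bottleneck, bottleneck, k, padding=k // 2, groups=bottleneck)
            for k in kernel_sizes
        ])
        self.mix = nn.Conv2d(bottleneck, bottleneck, kernel_size=1)
        self.act = nn.GELU()
        # Up-projection starts at zero so the adapter is initially an identity map.
        self.up = nn.Linear(bottleneck, dim)
        nn.init.zeros_(self.up.weight)
        nn.init.zeros_(self.up.bias)

    def forward(self, x, hw):
        # x: (batch, tokens, dim) with tokens == h * w
        b, n, _ = x.shape
        h, w = hw
        z = self.norm(x) * self.s_norm + x * self.s_skip
        z = self.down(z)                              # (b, n, bottleneck)
        z = z.transpose(1, 2).reshape(b, -1, h, w)    # tokens -> 2D feature map
        # Average the multi-scale filter responses and add them residually.
        z = z + torch.stack([f(z) for f in self.filters]).mean(dim=0)
        z = z + self.mix(z)
        z = z.flatten(2).transpose(1, 2)              # back to (b, n, bottleneck)
        return x + self.up(self.act(z))


# Usage: adapt a 7x5 grid of 96-dimensional tokens.
adapter = MultiFilterAdapter(dim=96, bottleneck=16)
tokens = torch.randn(2, 7 * 5, 96)
adapted = adapter(tokens, (7, 5))
```

In a delta-tuning setup, a module like this would typically be inserted into each block of a frozen pre-trained backbone, with only the adapter parameters (and the task head) receiving gradients.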

ImageNet

MS COCO

Oxford 102 Flower

ADE20K

Oxford-IIIT Pet Dataset