End-to-End Learnable Geometric Vision by Backpropagating PnP Optimization

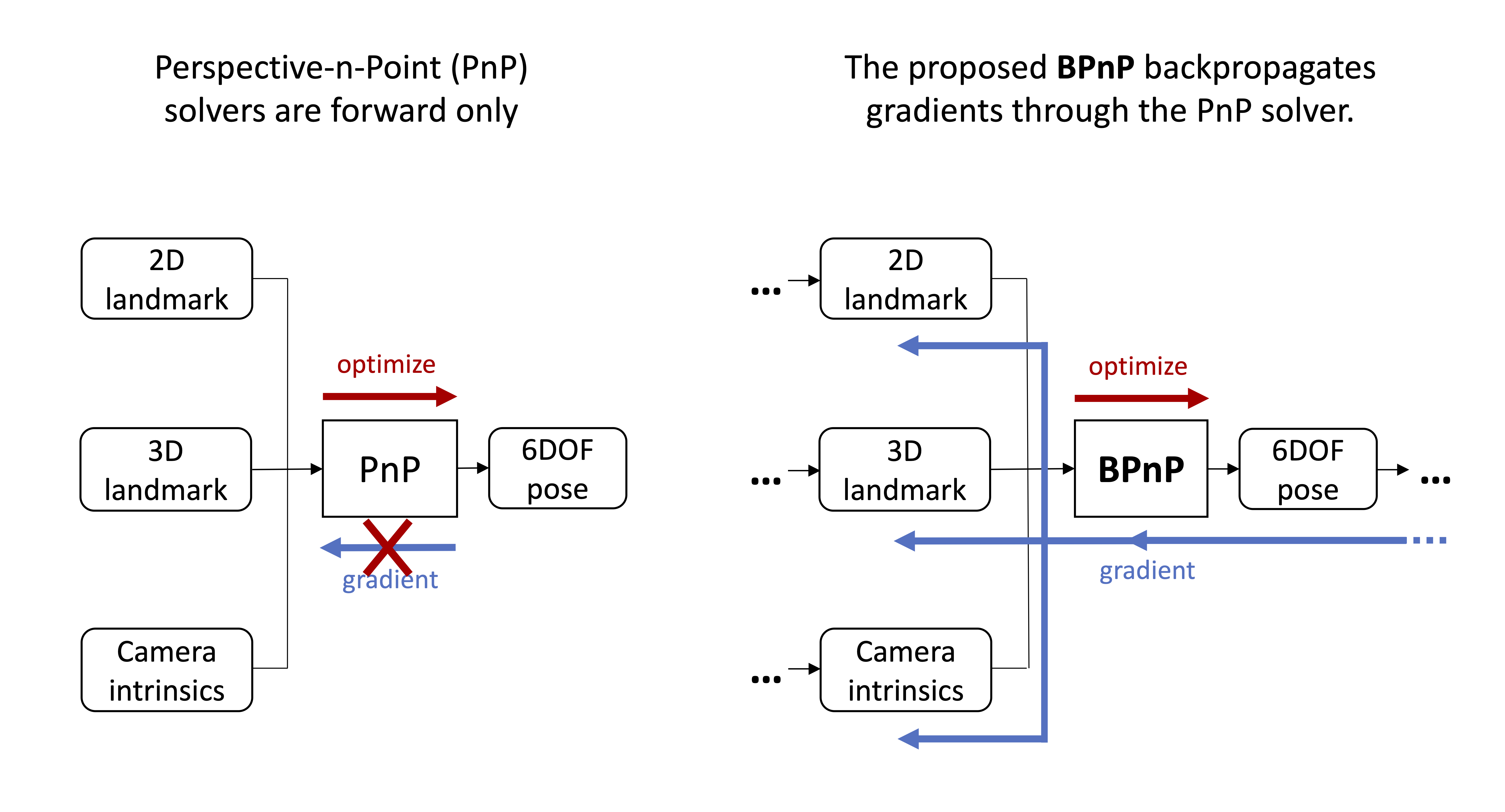

Deep networks excel in learning patterns from large amounts of data. On the other hand, many geometric vision tasks are specified as optimization problems. To seamlessly combine deep learning and geometric vision, it is vital to perform learning and geometric optimization end-to-end. Towards this aim, we present BPnP, a novel network module that backpropagates gradients through a Perspective-n-Points (PnP) solver to guide parameter updates of a neural network. Based on implicit differentiation, we show that the gradients of a "self-contained" PnP solver can be derived accurately and efficiently, as if the optimizer block were a differentiable function. We validate BPnP by incorporating it in a deep model that can learn camera intrinsics, camera extrinsics (poses) and 3D structure from training datasets. Further, we develop an end-to-end trainable pipeline for object pose estimation, which achieves greater accuracy by combining feature-based heatmap losses with 2D-3D reprojection errors. Since our approach can be extended to other optimization problems, our work helps to pave the way to perform learnable geometric vision in a principled manner. Our PyTorch implementation of BPnP is available at http://github.com/BoChenYS/BPnP.
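The key idea, differentiating through an optimizer via implicit differentiation instead of unrolling its iterations, can be illustrated on a deliberately tiny stand-in problem. The sketch below is an assumption-laden toy, not the paper's PnP formulation and not the BPnP API. It estimates a single 2D rotation angle θ from point correspondences by minimising f(θ, y) = ½ Σ_i ‖R(θ) p_i − y_i‖². The solver is treated as a black box. The gradient of its output θ* with respect to the observed points y_i is obtained only from the optimality condition ∂f/∂θ = 0 through the implicit function theorem, and it is then checked against finite differences. All function names (`solve_pose`, `implicit_grad`, and so on) and the test data are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def rotate(theta: float, p: Point) -> Point:
    """Apply the 2D rotation R(theta) to point p."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1], s * p[0] + c * p[1])


def d_rotate(theta: float, p: Point) -> Point:
    """Derivative of R(theta) p with respect to theta."""
    c, s = math.cos(theta), math.sin(theta)
    return (-s * p[0] - c * p[1], c * p[0] - s * p[1])


def grad_theta(theta: float, model: List[Point], obs: List[Point]) -> float:
    """g(theta, y) = df/dtheta for f = 0.5 * sum_i ||R(theta) p_i - y_i||^2."""
    g = 0.0
    for p, y in zip(model, obs):
        rp, q = rotate(theta, p), d_rotate(theta, p)
        g += (rp[0] - y[0]) * q[0] + (rp[1] - y[1]) * q[1]
    return g


def hess_theta(theta: float, model: List[Point], obs: List[Point]) -> float:
    """d^2f/dtheta^2, which simplifies to sum_i y_i . R(theta) p_i."""
    h = 0.0
    for p, y in zip(model, obs):
        rp = rotate(theta, p)
        h += y[0] * rp[0] + y[1] * rp[1]
    return h


def solve_pose(model: List[Point], obs: List[Point], theta0: float = 0.0,
               iters: int = 50, tol: float = 1e-12) -> float:
    """Hypothetical 'black-box' solver: Newton iterations on g(theta) = 0.

    Nothing inside this loop is differentiated; only the returned optimum is used.
    """
    theta = theta0
    for _ in range(iters):
        step = grad_theta(theta, model, obs) / hess_theta(theta, model, obs)
        theta -= step
        if abs(step) < tol:
            break
    return theta


def implicit_grad(theta_star: float, model: List[Point],
                  obs: List[Point]) -> List[Point]:
    """d theta*/d y_i from the optimality condition g(theta*, y) = 0.

    Implicit function theorem: d theta*/d y_i = -(dg/dtheta)^-1 * dg/dy_i,
    with dg/dtheta = hess_theta and dg/dy_i = -dR(theta)p_i/dtheta.
    """
    h = hess_theta(theta_star, model, obs)
    return [(q[0] / h, q[1] / h) for q in (d_rotate(theta_star, p) for p in model)]


# Usage: synthetic correspondences rotated by 0.3 rad plus small fixed offsets.
model = [(1.0, 0.0), (0.0, 2.0), (-1.5, 0.5), (0.5, -1.0)]
obs = [rotate(0.3, p) for p in model]
obs = [(u + 0.01 * k, v - 0.02 * k) for k, (u, v) in enumerate(obs)]

theta_star = solve_pose(model, obs)
grads = implicit_grad(theta_star, model, obs)

# Check against central finite differences obtained by re-running the solver.
eps = 1e-6
max_err = 0.0
for i in range(len(obs)):
    for j in range(2):
        plus = [list(o) for o in obs]
        minus = [list(o) for o in obs]
        plus[i][j] += eps
        minus[i][j] -= eps
        fd = (solve_pose(model, [tuple(o) for o in plus])
              - solve_pose(model, [tuple(o) for o in minus])) / (2 * eps)
        max_err = max(max_err, abs(fd - grads[i][j]))

print(f"theta* = {theta_star:.4f}, max |implicit - finite diff| = {max_err:.1e}")
```

The gradient needs only θ* and second derivatives of f at that point, so it does not depend on how many iterations the solver took or which initialisation it used. With a full 6-DoF pose the scalar division by the second derivative would generally become a linear solve with a 6×6 matrix. The toy keeps it scalar so the mechanics stay visible.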

CVPR 2020

Reproducibility Reports

We reproduce the claims of both papers by conducting several experiments on the UAVA dataset [3]. Integrating a differentiable geometric module into a keypoint-based object pose estimation model improved its performance on the reported metrics. We additionally verify that this also holds for another differentiable PnP implementation, namely EPnP. Further, our results indicate that HigherHRNet does improve keypoint localisation performance on small-scale objects.

Results from the Paper

Ranked #1 on 6D Pose Estimation using RGB on LineMOD (Accuracy metric)
