Deep Shape Matching

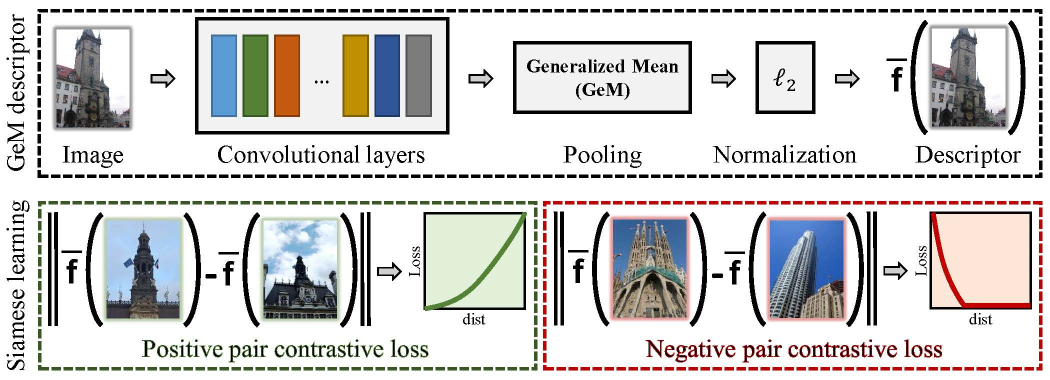

We cast shape matching as metric learning with convolutional networks. We break the end-to-end process of image representation into two parts. First, well-established, efficient methods are chosen to turn the images into edge maps. Second, the network is trained with edge maps of landmark images, which are obtained automatically by a structure-from-motion pipeline. The learned representation is evaluated on a range of tasks, providing improvements on challenging cases of domain generalization, generic sketch-based image retrieval, and its fine-grained counterpart. In contrast to other methods that learn a different model per task, object category, or domain, we use the same network throughout all our experiments, achieving state-of-the-art results on multiple benchmarks.
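The two-part process described above can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract names neither the edge detector nor the loss, so a plain gradient-magnitude edge map and a contrastive loss on L2-normalized descriptors are assumed here as stand-ins. The function names (`edge_map`, `l2_normalize`, `contrastive_loss`) and the `margin` value are hypothetical, and the convolutional network that would map an edge map to a descriptor is omitted.

```python
import numpy as np

def edge_map(gray):
    """Part 1 (assumed stand-in): gradient-magnitude edge map scaled to [0, 1]."""
    gray = np.asarray(gray, dtype=np.float64)
    gy, gx = np.gradient(gray)          # finite differences along rows, columns
    mag = np.hypot(gx, gy)              # per-pixel edge strength
    peak = mag.max()
    return mag / peak if peak > 0 else mag

def l2_normalize(x, eps=1e-12):
    """Scale a descriptor to unit Euclidean length."""
    x = np.asarray(x, dtype=np.float64)
    return x / max(np.linalg.norm(x), eps)

def contrastive_loss(a, b, is_match, margin=0.7):
    """Part 2 (assumed loss): pull matching pairs together, push others apart.

    a, b     -- descriptors a network would produce from two edge maps
    is_match -- True if both edge maps depict the same landmark
    margin   -- hypothetical value; non-matching pairs farther apart than
                this contribute no loss
    """
    d = np.linalg.norm(l2_normalize(a) - l2_normalize(b))
    if is_match:
        return 0.5 * d ** 2
    return 0.5 * max(0.0, margin - d) ** 2

# Usage: a vertical step edge, and a pair of toy descriptors.
image = np.zeros((4, 4))
image[:, 2:] = 1.0
edges = edge_map(image)
loss = contrastive_loss([1.0, 0.0], [0.0, 1.0], is_match=True)
```

In a training setup of this kind, matching and non-matching pairs would come from the structure-from-motion pipeline mentioned in the abstract, which groups landmark images of the same scene without manual labels.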

ECCV 2018

Results from the Paper

Ranked #1 on Sketch-Based Image Retrieval on Chairs (using extra training data)

Datasets: ImageNet, PACS, Sketch, Chairs