Feature Control as Intrinsic Motivation for Hierarchical Reinforcement Learning

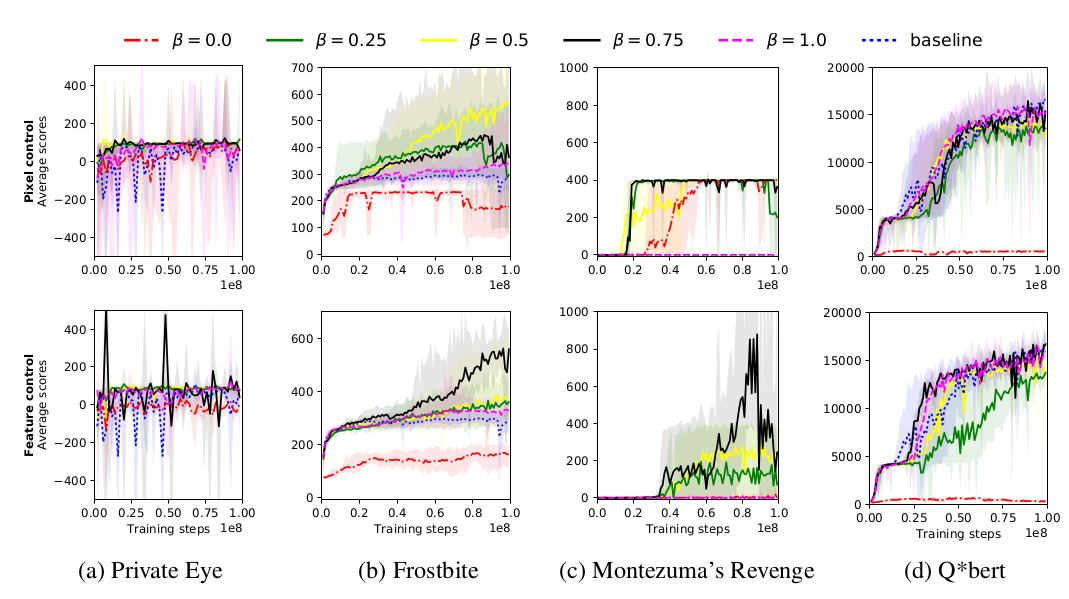

The problem of sparse rewards is one of the hardest challenges in contemporary reinforcement learning. Hierarchical reinforcement learning (HRL) tackles this problem by using a set of temporally extended actions, or options, each of which has its own subgoal. These subgoals are normally handcrafted for specific tasks. Here, we instead introduce a generic class of subgoals with broad applicability in the visual domain. Underlying our approach (in common with work using "auxiliary tasks") is the hypothesis that the ability to control aspects of the environment is an inherently useful skill. We incorporate such subgoals into an end-to-end hierarchical reinforcement learning system and test two variants of our algorithm on a number of games from the Atari suite. We highlight the advantage of our approach in one of the hardest games, Montezuma's Revenge, for which the ability to handle sparse rewards is key. Our agent learns several times faster than the current state-of-the-art HRL agent in this game, reaching a similar level of performance.

UPDATE 22/11/17: We found that a standard A3C agent with a simple shaped reward, i.e. extrinsic reward plus the feature control intrinsic reward, performs comparably to our agent in Montezuma's Revenge. In light of these new experiments, the advantage of our HRL approach can be attributed more to its ability to learn useful features from intrinsic rewards than to its ability to explore and reuse abstracted skills with hierarchical components. This has led us to revise our conclusion about the result.
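The update describes a flat A3C baseline trained on a shaped reward: extrinsic reward plus a feature control intrinsic reward. The abstract does not specify the form of that intrinsic reward, so the sketch below assumes one plausible choice: the agent is rewarded for the fraction of the total change in a feature vector that is concentrated in a selected feature `k`. The function names `feature_control_reward` and `shaped_reward`, the weight `beta`, and the normalization are all hypothetical illustrations, not the authors' definitions.

```python
from typing import Sequence


def feature_control_reward(
    f_prev: Sequence[float], f_next: Sequence[float], k: int, eps: float = 1e-8
) -> float:
    """Hypothetical intrinsic reward: share of total feature change due to feature k.

    Assumption: features are plain vectors of floats extracted from consecutive
    states; this normalization is an illustrative choice, not the paper's.
    """
    if len(f_prev) != len(f_next):
        raise ValueError("feature vectors must have the same length")
    # Absolute change of every feature between the two states.
    diffs = [abs(b - a) for a, b in zip(f_prev, f_next)]
    # eps avoids division by zero when nothing changes (reward is then 0).
    return diffs[k] / (sum(diffs) + eps)


def shaped_reward(
    r_ext: float,
    f_prev: Sequence[float],
    f_next: Sequence[float],
    k: int,
    beta: float = 0.1,
) -> float:
    """Extrinsic reward plus a weighted (hypothetical) feature control bonus."""
    return r_ext + beta * feature_control_reward(f_prev, f_next, k)


if __name__ == "__main__":
    # Feature 0 accounts for 3 of the 4 units of change, so the bonus is about 0.75.
    print(shaped_reward(1.0, [0.0, 0.0, 0.0], [3.0, 1.0, 0.0], k=0))
```

Normalizing by the total change means the bonus is high only when the agent changes the chosen feature selectively, rather than changing everything at once; a flat agent could receive this bonus directly, as in the update's baseline.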