Grounded Language-Image Pre-training

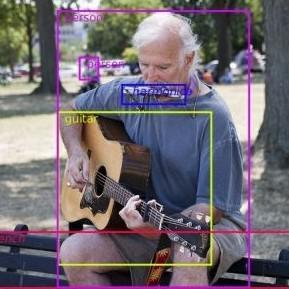

This paper presents a grounded language-image pre-training (GLIP) model for learning object-level, language-aware, and semantic-rich visual representations. GLIP unifies object detection and phrase grounding for pre-training. The unification brings two benefits: 1) it allows GLIP to learn from both detection and grounding data to improve both tasks and bootstrap a good grounding model; 2) GLIP can leverage massive image-text pairs by generating grounding boxes in a self-training fashion, making the learned representation semantic-rich. In our experiments, we pre-train GLIP on 27M grounding data, including 3M human-annotated and 24M web-crawled image-text pairs. The learned representations demonstrate strong zero-shot and few-shot transferability to various object-level recognition tasks. 1) When directly evaluated on COCO and LVIS (without seeing any images in COCO during pre-training), GLIP achieves 49.8 AP and 26.9 AP, respectively, surpassing many supervised baselines. 2) After fine-tuned on COCO, GLIP achieves 60.8 AP on val and 61.5 AP on test-dev, surpassing prior SoTA. 3) When transferred to 13 downstream object detection tasks, a 1-shot GLIP rivals with a fully-supervised Dynamic Head. Code is released at https://github.com/microsoft/GLIP.

PDF Abstract CVPR 2022 PDF CVPR 2022 AbstractCode

| Task | Dataset | Model | Metric Name | Metric Value | Global Rank | Uses Extra Training Data |

Benchmark |

|---|---|---|---|---|---|---|---|

| Object Detection | COCO minival | GLIP (Swin-L, multi-scale) | box AP | 60.8 | # 17 | ||

| Object Detection | COCO-O | GLIP-T (Swin-T) | Average mAP | 29.1 | # 21 | ||

| Effective Robustness | 8.11 | # 12 | |||||

| Object Detection | COCO-O | GLIP-L (Swin-L) | Average mAP | 48.0 | # 3 | ||

| Effective Robustness | 24.89 | # 2 | |||||

| Object Detection | COCO test-dev | GLIP (Swin-L, multi-scale) | box mAP | 61.5 | # 21 | ||

| AP50 | 79.5 | # 4 | |||||

| AP75 | 67.7 | # 4 | |||||

| APS | 45.3 | # 4 | |||||

| APM | 64.9 | # 4 | |||||

| APL | 75.0 | # 4 | |||||

| Described Object Detection | Description Detection Dataset | GLIP-T | Intra-scenario FULL mAP | 19.1 | # 4 | ||

| Intra-scenario PRES mAP | 18.3 | # 5 | |||||

| Intra-scenario ABS mAP | 21.5 | # 3 | |||||

| Phrase Grounding | Flickr30k Entities Test | GLIP | R@1 | 87.1 | # 3 | ||

| R@10 | 98.1 | # 1 | |||||

| R@5 | 96.9 | # 1 | |||||

| Zero-Shot Object Detection | LVIS v1.0 minival | GLIP-L | AP | 37.3 | # 3 | ||

| Zero-Shot Object Detection | LVIS v1.0 val | GLIP-L | AP | 26.9 | # 3 | ||

| Few-Shot Object Detection | ODinW-13 | GLIP-T | Average Score | 50.7 | # 2 | ||

| Few-Shot Object Detection | ODinW-35 | GLIP-T | Average Score | 38.9 | # 2 | ||

| 2D Object Detection | RF100 | GLIP | Average mAP | 0.112 | # 1 |

MS COCO

MS COCO

Visual Genome

Visual Genome

LVIS

LVIS

Objects365

Objects365

Flickr30K Entities

Flickr30K Entities

COCO-O

COCO-O

Description Detection Dataset

Description Detection Dataset

RF100

RF100